If your content pipeline feels like a relay race with missing batons—Slack pings, doc links, “who owns this?” moments—you don’t have a writing problem. You have an orchestration problem.

In this guide, you’ll map a research-to-distribution workflow as a set of owned steps, handoff artifacts, and gates. Then you’ll see exactly where AI agents help—and where humans should stay firmly in charge.

What “content orchestration” actually means

Content orchestration is the practice of connecting the whole content lifecycle—creation, review, approval, publishing, and distribution—across teams and tools so it runs as a coordinated system instead of a pile of tasks. Pantheon frames it as an integrated, automated approach that improves governance and time-to-market across the content lifecycle (see Pantheon’s overview of content orchestration).

That definition matters because it’s easy to confuse orchestration with:

Content management (storing and publishing content)

Project management (tracking tasks and deadlines)

Automation (triggering rules)

Orchestration is the layer that makes those pieces work together—with clear ownership and handoffs.

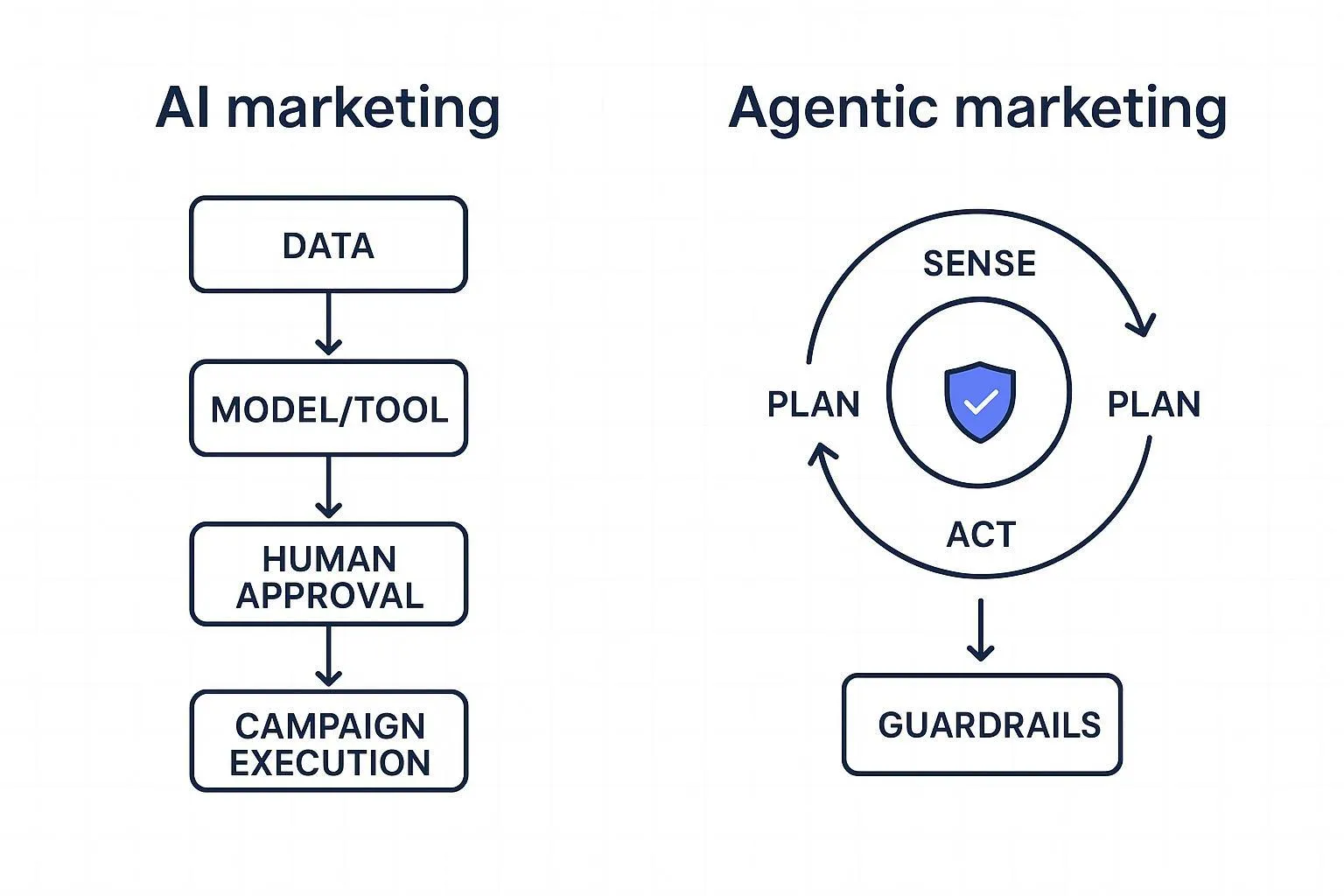

Where AI agents fit (and where they don’t)

A useful definition for AI agents in a content workflow is simple:

They can take a goal, plan a sequence of actions, produce artifacts (briefs, outlines, drafts), and iterate with feedback.

They’re best at work that is repeatable, artifact-driven, and verifiable.

What they shouldn’t own—especially for scaling SMB teams:

Final brand decisions (“Is this us?”)

Final claims and risk judgments (“Can we say this?”)

Final publish authority (“Ship it.”)

Pro Tip: If you can’t describe a step’s expected output in one sentence, it’s not ready for automation.

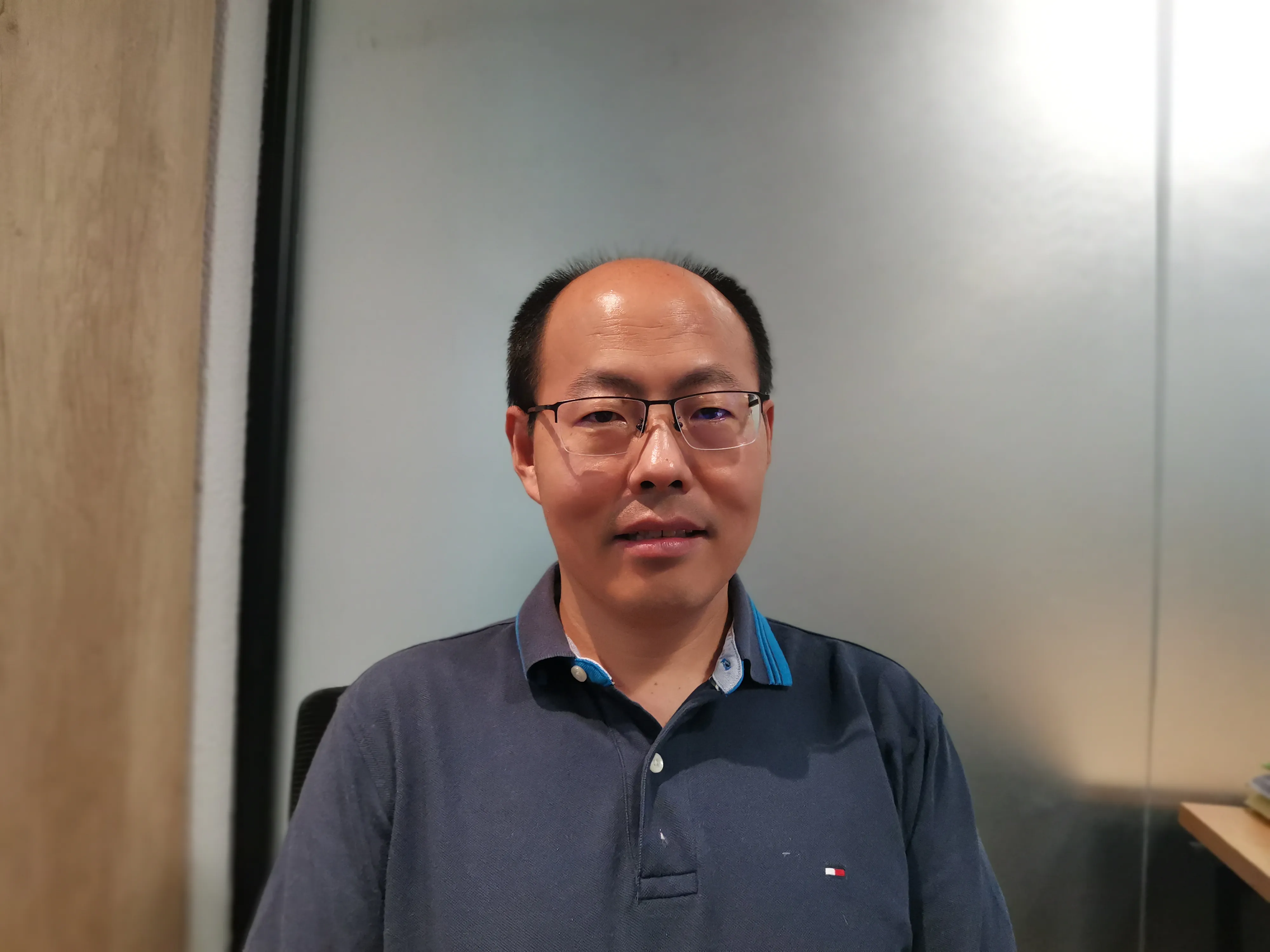

A swimlane view of content workflow orchestration

Your workflow, with ownership

Swimlane diagrams map a process across participants so you can see responsibilities and handoffs at a glance. Atlassian describes swimlanes as a way to make “who does what” explicit and to reveal cross-team handoffs and bottlenecks (see Atlassian’s swimlane diagram guide).

Here’s a neutral template you can adapt. (QuickCreator-style agent roles come later as an example.)

Three terms recur in this guide: content orchestration, a swimlane diagram for a content workflow, and a human-in-the-loop content workflow. Each is used plainly and shown in practice.

Swimlanes: HUMAN OWNER | AI AGENTS | SYSTEMS | CHANNELS

1. Define outcome + guardrails (Human)

2. Research + source pack (Agent)

3. Create content brief (Agent → Human approval)

4. Outline + angle (Agent → Human approval)

5. Draft v1 (Agent)

6. Brand voice gate (Agent check → Human sign-off)

7. SEO/GEO optimization pack (Agent)

8. Publish + distribute (Systems + Channels, Human final publish)

9. Measure + feedback loop (Systems → Agent suggestions → Human priorities)

Notice what this does:

Makes handoffs visible (where work changes hands)

Creates gates (where quality/safety is enforced)

Forces human ownership for decisions that affect brand and risk
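If it helps to see the template as data, here is a minimal sketch of the nine steps. The names `Lane`, `Step`, `WORKFLOW`, and `gates` are illustrative assumptions, not part of any tool, and the `approver` values reflect one reading of the step list above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Lane(Enum):
    HUMAN = "Human owner"
    AGENT = "AI agents"
    SYSTEM = "Systems"
    CHANNEL = "Channels"


@dataclass(frozen=True)
class Step:
    number: int
    name: str
    owner: Lane               # lane that does the work
    approver: Optional[Lane]  # lane that signs off; None means no gate
    artifact: str             # what gets handed to the next step


# Hypothetical encoding of the template; adjust owners to match your team.
WORKFLOW = [
    Step(1, "Define outcome + guardrails", Lane.HUMAN, None, "Outcome & guardrails card"),
    Step(2, "Research + source pack", Lane.AGENT, None, "Source pack"),
    Step(3, "Create content brief", Lane.AGENT, Lane.HUMAN, "Approved brief"),
    Step(4, "Outline + angle", Lane.AGENT, Lane.HUMAN, "Approved outline with evidence mapping"),
    Step(5, "Draft v1", Lane.AGENT, None, "Draft v1"),
    Step(6, "Brand voice gate", Lane.AGENT, Lane.HUMAN, "Brand-approved draft + change log"),
    Step(7, "SEO/GEO optimization pack", Lane.AGENT, None, "Optimization pack"),
    Step(8, "Publish + distribute", Lane.SYSTEM, Lane.HUMAN, "Published URL + distribution checklist"),
    Step(9, "Measure + feedback loop", Lane.SYSTEM, Lane.HUMAN, "Optimization backlog"),
]


def gates(workflow):
    """Return the steps that need a sign-off before work moves on."""
    return [step for step in workflow if step.approver is not None]


for step in gates(WORKFLOW):
    print(f"Step {step.number}: {step.name} -> approved by {step.approver.value}")
```

Writing the workflow down this way makes it easy to check that every gate has a human approver and every step produces a named artifact.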

Step-by-step guide: from research to distribution

Think of this as a reference model for designing a content marketing workflow around AI agents: not a tool list, but an ownership map you can implement and iterate on.

This is the workflow you can run weekly—even with a small team.

Step 1) Define outcome + guardrails (human-owned)

Input: A business goal + a channel priority (e.g., “publish 2 SEO posts/month that drive demo interest”).

Action: Write a one-paragraph “definition of done.” Include:

target reader (ICP)

what the post must help them do/understand

what must be true before publishing (your gates)

Output (handoff artifact): Outcome & guardrails card (one doc or ticket).

Done when: Anyone on the team can read it and answer: what are we making, for whom, and what can’t we get wrong?

Common failure: Starting with “we need a blog post” instead of a reader outcome.

Step 2) Build a research + source pack (agent-led)

Input: Outcome & guardrails card.

Action: An agent (or a human with help from an agent) compiles:

5–10 bullet insights (not quotes)

3–5 credible sources worth citing

3 common objections/questions to address

Output (handoff artifact): Source pack with links, 5–10 bullet insights, and the objections list.

Done when: The pack contains enough trustworthy material that the writer doesn’t have to “make things up” to sound authoritative.

Common failure: Treating search snippets as “research.” If the claim matters, you need an actual source.
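The "done when" test for this step can be partly mechanical. Below is a minimal sketch that checks a source pack against the counts listed above; `SourcePack` and `source_pack_problems` are hypothetical names, and treating "3 objections" as a minimum is an assumption. It checks completeness only. Whether a source is credible is still a judgment call.

```python
from dataclasses import dataclass, field


@dataclass
class SourcePack:
    insights: list = field(default_factory=list)    # bullet insights, not quotes
    sources: list = field(default_factory=list)     # links or citations
    objections: list = field(default_factory=list)  # reader objections/questions


def source_pack_problems(pack):
    """Return a list of human-readable problems; an empty list means the counts pass."""
    problems = []
    if not 5 <= len(pack.insights) <= 10:
        problems.append(f"expected 5-10 insights, got {len(pack.insights)}")
    if not 3 <= len(pack.sources) <= 5:
        problems.append(f"expected 3-5 sources, got {len(pack.sources)}")
    if len(pack.objections) < 3:  # assumption: 3 is treated as a minimum
        problems.append(f"expected at least 3 objections, got {len(pack.objections)}")
    return problems


thin_pack = SourcePack(insights=["a", "b"], sources=["https://example.com"], objections=[])
print(source_pack_problems(thin_pack))
```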

Step 3) Generate the content brief (agent drafts, human approves)

Input: Source pack + outcome & guardrails.

Action: The agent drafts a brief that includes:

working title + primary keyword

target angle (and what you’re not covering)

section outline (H2/H3)

internal link plan (which pages on your site support the reader next)

Output (handoff artifact): Approved content brief.

Done when: A human owner can say “yes—this is the story we want to tell,” in 2 minutes.

Common failure: A brief that’s just a title. If you skip the brief, the review cycles show up later.

Step 4) Create an outline that makes handoffs easy (agent drafts, human approves)

Input: Approved brief.

Action: The agent produces an outline where each section has:

the claim the section will make

the evidence/source it will cite

the example the section will use

Output (handoff artifact): Approved outline with evidence mapping.

Done when: The outline reads like a set of decisions—not a list of topics.

Common failure: Vague headings that start with “Understanding…” or “Exploring…”. If the heading can’t commit, the section won’t either.
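One way to make "evidence mapping" concrete is to treat each outline section as a record with required fields. This is a sketch under assumptions: `OutlineSection`, `outline_problems`, and the `VAGUE_OPENERS` list are illustrative, and the opener list only covers the two examples named above.

```python
from dataclasses import dataclass

# Assumption: extend this tuple with the openers your team wants to avoid.
VAGUE_OPENERS = ("understanding", "exploring")


@dataclass
class OutlineSection:
    heading: str
    claim: str     # the claim the section will make
    evidence: str  # the source it will cite
    example: str   # the example it will use


def outline_problems(sections):
    """Flag sections with missing fields or non-committal headings."""
    problems = []
    for section in sections:
        for name in ("claim", "evidence", "example"):
            if not getattr(section, name).strip():
                problems.append(f"{section.heading!r}: missing {name}")
        if section.heading.strip().lower().startswith(VAGUE_OPENERS):
            problems.append(f"{section.heading!r}: vague heading")
    return problems


outline = [
    OutlineSection("Understanding handoffs", "", "Atlassian swimlane guide", "brief approval"),
    OutlineSection("Handoffs should move artifacts", "Artifacts beat chat threads",
                   "Source pack item 4", "Approved brief as a ticket"),
]
print(outline_problems(outline))
```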

Step 5) Write draft v1 (agent-led, human edits)

Input: Approved outline with evidence mapping.

Action: The agent writes the first full draft, using:

the source pack for factual grounding

your style guide for tone and structure

your link plan so the post sits inside your site’s topical cluster

Output (handoff artifact): Draft v1.

Done when: The draft is publishable in structure (headings, flow, citations), even if it still needs human taste.

Common failure: “Pretty text” without citations, definitions, or a clear reader outcome.

Step 6) Run the brand voice gate (non-negotiable)

This is the gate most teams skip until something goes wrong.

Input: Draft v1 + your blog style guide.

Action: Enforce brand voice and brand safety:

check vocabulary and tone against your style rules

remove generic AI filler and empty claims

verify the draft uses your product terms consistently

confirm the post matches the audience’s sophistication level

A platform example: QuickCreator’s Brand Intelligence Agent is positioned as a persistent “intelligence layer” that “learns your voice… and references your private knowledge base” to reduce off-brand, generic output (see QuickCreator’s Brand Intelligence Agent). Even if you don’t use QuickCreator, the principle holds: treat brand voice as a test, not a preference.

Output (handoff artifact): Brand-approved draft (with tracked changes or a short change log).

Done when: A human owner can read three paragraphs and say, “Yes, that sounds like us.”

Common failure: Confusing “grammatically correct” with “on-brand.”

⚠️ Warning: A brand voice gate without a written style guide becomes a taste argument. Write the style guide first.
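To show what "a test, not a preference" can look like, here is a minimal sketch of an automated pre-check that runs before the human sign-off. Everything in it is an assumption for illustration: the `StyleGuide` fields, the `voice_gate` function, and the made-up product name "AcmeFlow". It only catches rule violations you have written down; it does not judge tone.

```python
import re
from dataclasses import dataclass, field


@dataclass
class StyleGuide:
    banned_phrases: list = field(default_factory=list)
    # canonical product term -> spellings that should not appear
    product_terms: dict = field(default_factory=dict)


def voice_gate(draft, guide):
    """Return style findings; an empty list means 'ready for human sign-off'."""
    findings = []
    lowered = draft.lower()
    for phrase in guide.banned_phrases:
        if phrase.lower() in lowered:
            findings.append(f"banned phrase: {phrase!r}")
    for canonical, variants in guide.product_terms.items():
        for variant in variants:
            # Case-sensitive whole-phrase match, so the canonical spelling passes.
            if re.search(rf"\b{re.escape(variant)}\b", draft):
                findings.append(f"use {canonical!r} instead of {variant!r}")
    return findings


guide = StyleGuide(
    banned_phrases=["game-changing"],
    product_terms={"AcmeFlow": ["Acme Flow", "Acmeflow"]},
)
draft = "AcmeFlow is a game-changing tool. Acme Flow saves time."
print(voice_gate(draft, guide))
```

A clean result does not approve the draft. It only means the human reviewer can spend their time on voice and claims instead of spelling and filler.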

Step 7) Produce the SEO/GEO optimization pack (agent-led)

Input: Brand-approved draft.

Action: The agent prepares a small “optimization pack”:

title options (without clickbait)

meta description

suggested internal links + anchor text

FAQ candidates (based on the draft)

GEO (generative engine optimization) checks: definitions early, sources inline, extractable sub-answers

You can treat this as a specialized role (“Optimization Agent”) in your workflow.

Output (handoff artifact): Optimization pack + updated draft.

Done when: The post is both readable and “extractable”—a section can be lifted as a direct answer without losing context.

Common failure: Keyword stuffing or over-formatting. SEO is not a formatting contest.

Step 8) Publish + distribute (system-led, human-owned)

Input: Final approved draft + optimization pack.

Action: Systems push the content live and distribute it:

publish in CMS

send to email/newsletter

schedule LinkedIn posts

add UTM tags where needed

A platform example: QuickCreator describes a coordinated pipeline that includes a writing role plus distribution automation as part of an end-to-end workflow (see How to automate multi-channel marketing with AI agents).

Output (handoff artifact): Published URL + distribution checklist completed.

Done when: The post is live, the first distribution wave is scheduled, and tracking is in place.

Common failure: Hitting “publish” and calling it done.
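The UTM tagging item in the list above is easy to script. The sketch below uses only Python's standard `urllib.parse`; the function name `add_utm` and the example URL and campaign values are assumptions. It replaces any existing `utm_` parameters so that re-tagging a link does not stack duplicates.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url, source, medium, campaign):
    """Return the URL with utm_source, utm_medium, and utm_campaign set."""
    parts = urlsplit(url)
    # Keep existing non-UTM parameters; drop old UTM values so they are not duplicated.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    query += [
        ("utm_source", source),
        ("utm_medium", medium),
        ("utm_campaign", campaign),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


tagged = add_utm(
    "https://example.com/blog/post?ref=home",
    source="newsletter",
    medium="email",
    campaign="content-orchestration",
)
print(tagged)
```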

Step 9) Measure + close the loop (system signals, agent suggests, human prioritizes)

Input: Performance data (traffic, CTR, conversions, engagement).

Action:

Systems collect signals.

An agent summarizes: what changed, what decayed, what to test.

A human decides what’s worth doing next week.

Output (handoff artifact): Optimization backlog (3–5 prioritized actions).

Done when: The workflow feeds itself—publishing creates learning, not just output.

Common failure: Treating “analytics review” as a meeting instead of a decision list.
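The "what changed, what decayed" summary can start as a simple period-over-period comparison. This is a sketch, not a recommendation of a specific metric: the function names, the 20% threshold, and the sample click counts are all assumptions. The output is a list of candidates; a human still decides which 3–5 make the backlog.

```python
def classify_changes(previous, current, threshold=0.2):
    """Split pages into decayed/improved lists by relative change in a metric."""
    decayed, improved = [], []
    for page, before in previous.items():
        if before == 0:
            continue  # no baseline to compare against
        change = (current.get(page, 0) - before) / before
        if change <= -threshold:
            decayed.append((page, change))
        elif change >= threshold:
            improved.append((page, change))
    decayed.sort(key=lambda item: item[1])                 # biggest drop first
    improved.sort(key=lambda item: item[1], reverse=True)  # biggest gain first
    return decayed, improved


def backlog_candidates(decayed, improved, limit=5):
    """Turn the classified changes into short, reviewable action suggestions."""
    actions = [f"Refresh {page} ({change:+.0%})" for page, change in decayed]
    actions += [f"Expand on {page} ({change:+.0%})" for page, change in improved]
    return actions[:limit]


# Made-up click counts for two consecutive periods.
last_month = {"/a": 100, "/b": 200, "/c": 50}
this_month = {"/a": 60, "/b": 210, "/c": 80}
decayed, improved = classify_changes(last_month, this_month)
print(backlog_candidates(decayed, improved))
```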

QuickCreator-style swimlanes (neutral example)

If you like to think in roles, here’s how the same workflow maps to a “specialist agent team” model:

Brand / Brand Intelligence: owns brand voice gate rules and knowledge grounding

Topic Strategy: turns goals into a prioritized topic + keyword plan

Research: builds the source pack and objection list

Writer: produces draft v1 with structure and citations

Optimization: produces SEO/GEO pack and on-page improvements

Distribution: schedules, publishes, and repurposes across channels

You can run these as actual agents, as humans wearing different hats, or as a hybrid.

The handoff artifacts that keep small teams fast

If you only adopt one thing from this guide, make it this: handoffs should move artifacts, not conversations.

Minimum set:

Outcome & guardrails card

Source pack

Approved brief

Approved outline with evidence mapping

Brand-approved draft + change log

Optimization pack

Published URL + distribution checklist

Optimization backlog
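If your project tool supports custom fields or scripts, the artifact list doubles as a status check: a piece is "at" whichever artifact is missing first. A minimal sketch, with `ARTIFACTS` and `next_missing` as hypothetical names:

```python
ARTIFACTS = [
    "Outcome & guardrails card",
    "Source pack",
    "Approved brief",
    "Approved outline with evidence mapping",
    "Brand-approved draft + change log",
    "Optimization pack",
    "Published URL + distribution checklist",
    "Optimization backlog",
]


def next_missing(done):
    """Return the first artifact not yet produced, or None if all exist."""
    for artifact in ARTIFACTS:
        if artifact not in done:
            return artifact
    return None


print(next_missing({"Outcome & guardrails card", "Source pack"}))
```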

FAQ

Do I need a swimlane diagram, or is a checklist enough?

A checklist can work when one person owns the whole flow. Once you have multiple owners (writer, editor, designer, marketer), a swimlane view helps you see handoffs and bottlenecks—the places work gets stuck.

What’s the “minimum viable” version of this workflow?

For a 1–3 person team: keep Steps 1–6 tight (outcome, source pack, brief, outline, draft, brand voice gate). If distribution is manual, at least use a distribution checklist so nothing gets forgotten.

How do I enforce brand voice without slowing everything down?

Make the gate predictable. Use a short style guide, a short checklist, and a clear “reject → revise → approve” loop. The gate is faster when it’s written down.
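The "reject → revise → approve" loop is small enough to write as a state machine, which is one way to keep the gate predictable. The state and action names below are assumptions for illustration:

```python
from enum import Enum


class ReviewState(Enum):
    IN_REVIEW = "in_review"
    REJECTED = "rejected"
    APPROVED = "approved"


# Allowed moves: a rejected draft must be revised before it is reviewed again.
TRANSITIONS = {
    (ReviewState.IN_REVIEW, "reject"): ReviewState.REJECTED,
    (ReviewState.REJECTED, "revise"): ReviewState.IN_REVIEW,
    (ReviewState.IN_REVIEW, "approve"): ReviewState.APPROVED,
}


def advance(state, action):
    """Apply an action to a review state, refusing moves the loop does not allow."""
    try:
        return TRANSITIONS[(state, action)]
    except KeyError:
        raise ValueError(f"cannot {action!r} from {state.value!r}") from None


state = ReviewState.IN_REVIEW
for action in ("reject", "revise", "approve"):
    state = advance(state, action)
print(state)
```

Because an approved draft has no outgoing transitions, nobody can quietly reopen it without starting a new review.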

What should humans always own?

Strategy decisions, brand risk decisions, and publish authority. Agents can propose; humans should approve when it affects reputation, compliance, or positioning.

Next steps

Copy the step list and artifacts above into your project tool, then run it once as a pilot.

If you want a concrete example of a coordinated “agent team” workflow, you can skim QuickCreator’s beginner guide to agentic workflows and compare it to your current handoffs.