Scattered ideas. Slack threads. A spreadsheet of keywords that never becomes a plan. If that sounds familiar, you’re not alone. The real promise of AI agents for content marketing isn’t “more content”; it’s turning chaos into a data-driven roadmap tied to the buyer journey and, ultimately, to pipeline. In this guide, we’ll show how to make strategy lead, keep brand quality safe with human-in-the-loop governance, and prove impact on MQL→SQL conversion, influenced and sourced pipeline, and sales cycle length.

What AI agents for content marketing really change in strategy

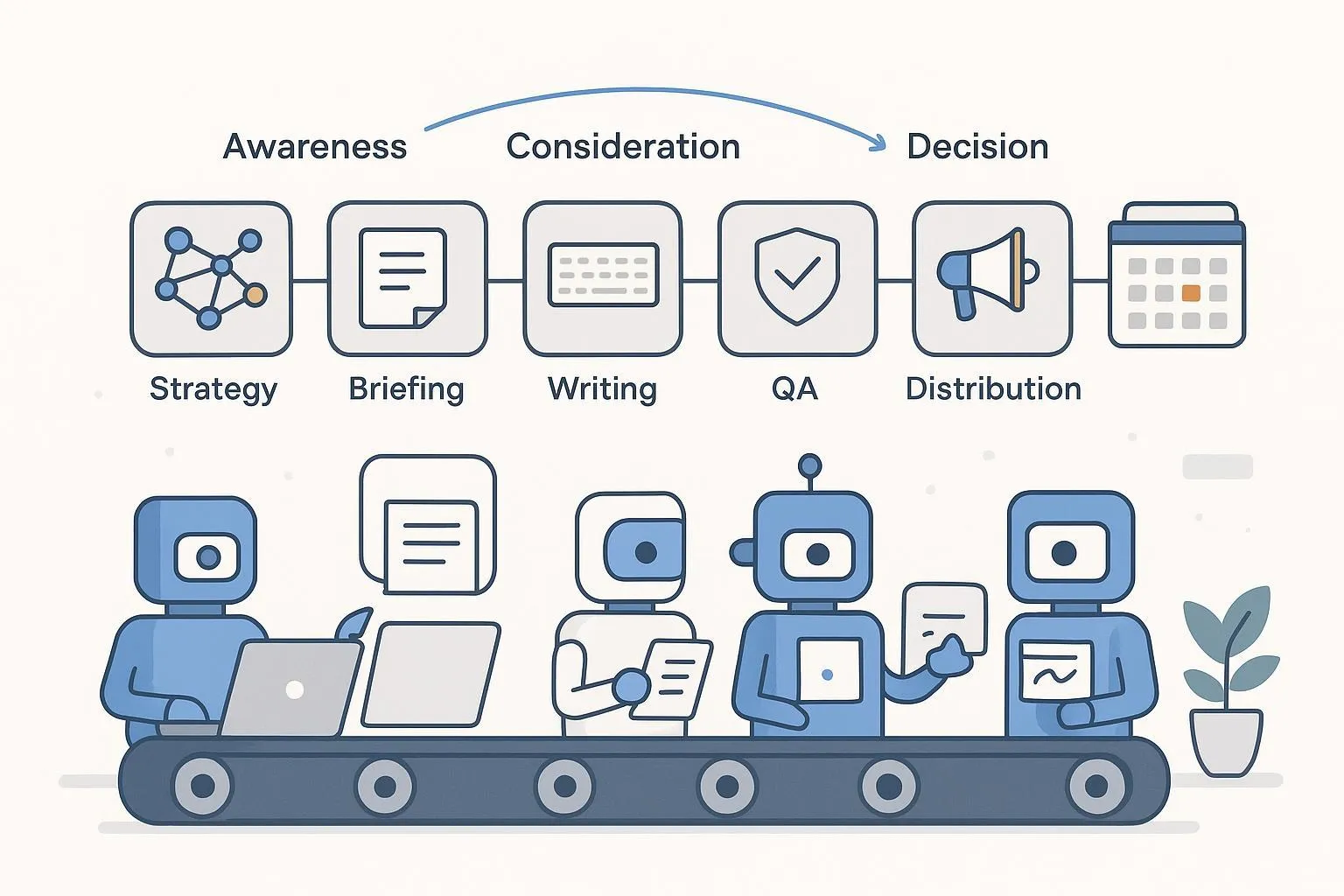

AI agents for content marketing shift the center of gravity from ad‑hoc brainstorming to a durable, buyer‑journey content roadmap. Instead of starting with outputs (posts, emails, social), you start with search intent clusters and jobs‑to‑be‑done per persona, map a topic matrix across Awareness, Consideration, Decision (and post‑purchase), and write briefs that encode audience, angle, format, E‑E‑A‑T sources, internal links, and KPIs.

Why it matters: Strategy becomes executable. Each asset traces back to a stage, a problem statement, and measurable goals. And with agents orchestrating research, drafting, QA, and distribution, the process is repeatable without exploding your headcount.

Brand safety caveat: Google’s March 2024 update on “scaled content abuse” warns against mass, low‑value output regardless of how it’s produced; the bar is people‑first usefulness. See Google’s official explanation in Google Core Update and spam policies (2024), and its broader guidance on helpful content in Succeeding in AI Search (2025).

Build your buyer‑journey topic matrix (template + example)

Think of the topic matrix as your north star. It converts raw keyword ideas and customer questions into a publishable plan with governance and measurement baked in. Use the template below. Duplicate it into your spreadsheet or project board.

| Persona | Stage | Problem/Job | Intent Signals/Queries | Content Format | Angle/POV | E‑E‑A‑T Sources | Internal Links | Distribution Plan | Measurement Tags | Success Metric |
|---|---|---|---|---|---|---|---|---|---|---|
| SMB Ops Lead | Awareness | “Publish faster without losing brand voice” | ai content workflow, brand voice ai, content calendar ai | Guide + checklist | Strategy‑first, human‑in‑the‑loop | Google policy explainer; NIST AI RMF | Governance hub | Blog → LinkedIn → Email | Campaign=Q2‑AI; ContentID=G‑001; UTM=awareness | New contacts; time‑to‑publish |
| Content Lead | Consideration | “Standardize briefs across writers” | content brief template, topic research agents | Brief template + SOP | Buyer‑journey mapping + examples | CMI CRISP; HubSpot loop | Strategy hub | Blog → Template download | Campaign=Q2‑AI; ContentID=T‑002; UTM=consideration | Brief adoption rate |
| Marketing VP | Decision | “Prove content drives pipeline” | content attribution crm, mql to sql | Case snapshot | Pilot design + dashboards | Search Engine Land flywheel; Adobe Mix Modeler | Measurement hub | Blog → Sales enablement | Campaign=Q2‑AI; ContentID=C‑003; UTM=decision | MQL→SQL; influenced pipeline |

How to use it: Start with 1–2 personas and map 3–5 high‑value problems each. For every row, add at least two authoritative E‑E‑A‑T sources you’ll cite. Create an internal link plan to connect Awareness → Consideration → Decision content. Pre‑assign Measurement Tags so attribution is not an afterthought.
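The row rules above (at least two E‑E‑A‑T sources, an internal link plan, pre‑assigned Measurement Tags) can be checked automatically before a brief is written. The following is a minimal sketch, not a prescribed implementation: the `MatrixRow` class, the `validate_row` function, and the exact tag names are hypothetical and simply mirror the template columns.

```python
from dataclasses import dataclass, field

# Stage names taken from the topic matrix; "Post-purchase" is optional in the guide.
STAGES = {"Awareness", "Consideration", "Decision", "Post-purchase"}

# Tag keys mirror the example rows (Campaign, ContentID, UTM); adjust to your own scheme.
REQUIRED_TAGS = ("Campaign", "ContentID", "UTM")


@dataclass
class MatrixRow:
    persona: str
    stage: str
    problem: str
    eeat_sources: list[str] = field(default_factory=list)
    internal_links: list[str] = field(default_factory=list)
    measurement_tags: dict[str, str] = field(default_factory=dict)


def validate_row(row: MatrixRow) -> list[str]:
    """Return human-readable issues; an empty list means the row is ready for a brief."""
    issues = []
    if row.stage not in STAGES:
        issues.append(f"unknown stage: {row.stage}")
    if len(row.eeat_sources) < 2:
        issues.append("needs at least two E-E-A-T sources")
    if not row.internal_links:
        issues.append("needs an internal link plan")
    for tag in REQUIRED_TAGS:
        if tag not in row.measurement_tags:
            issues.append(f"missing measurement tag: {tag}")
    return issues


row = MatrixRow(
    persona="SMB Ops Lead",
    stage="Awareness",
    problem="Publish faster without losing brand voice",
    eeat_sources=["Google policy explainer", "NIST AI RMF"],
    internal_links=["Governance hub"],
    measurement_tags={"Campaign": "Q2-AI", "ContentID": "G-001", "UTM": "awareness"},
)
print(validate_row(row))  # prints [] because every rule is satisfied
```

Running a check like this on every row keeps incomplete entries out of the brief queue, so attribution fields are filled in before production starts.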

Run a 90‑day pilot that proves pipeline impact

You don’t need a massive overhaul; you need a disciplined pilot a 3–6 person team can run.

Phase 1 (Weeks 1–2) — Set the scaffolding. Define KPIs (time‑to‑publish, cost/asset, approval SLA pass rate, error/rollback rate, MQL→SQL, influenced vs. sourced pipeline, sales cycle). Build the topic matrix and 6–9 briefs spanning all stages. Implement content IDs and UTMs; in HubSpot (or your CRM), associate assets to a campaign so Contact Create (MQL proxy), Deal Create (pipeline), and revenue attribution are visible. Choose an incrementality design: geo‑split or time‑based holdout, with hypotheses, duration (2–4 weeks of promotion per cluster), and power needs documented.

Phase 2 (Weeks 3–10) — Produce and distribute with human‑in‑the‑loop. Draft to brief; run fact/reference checks; legal/compliance review; final sign‑off. Publish to CMS; repurpose for email and LinkedIn; schedule refresh dates. Promote selected clusters to support your incrementality test; tag all links with campaign and content IDs.
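Tagging every link by hand is where campaign and content IDs usually go missing. A small helper can make it consistent. This is a sketch under stated assumptions: the function name `tag_url` is hypothetical, and mapping the content ID to `utm_content` and the journey stage to `utm_term` is one possible convention, not something the pilot requires.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_url(url: str, campaign: str, content_id: str, stage: str, source: str) -> str:
    """Append UTM parameters to a URL while keeping any existing query parameters."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update(
        {
            "utm_source": source,        # where the link is shared, e.g. linkedin or email
            "utm_medium": "content",     # assumed medium label for this pilot
            "utm_campaign": campaign,    # matches the CRM campaign name
            "utm_content": content_id,   # assumption: content ID carried in utm_content
            "utm_term": stage,           # assumption: journey stage carried in utm_term
        }
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


tagged = tag_url(
    "https://example.com/blog/ai-workflow?ref=nav",
    campaign="Q2-AI",
    content_id="G-001",
    stage="awareness",
    source="linkedin",
)
print(tagged)
```

Whatever convention you choose, keep it identical across blog, email, and social links so the CRM can group touches by campaign and asset.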

Phase 3 (Weeks 11–12) — Read results and decide. Compare pilot vs. holdout regions/periods for incremental lifts; triangulate with CRM attribution models. Diagnose where lift came from: stage coverage, topic quality, distribution, or QA tightness. Decide to scale, refine, or pause based on pipeline efficiency and governance health. For a continuous loop, align with the measurement flywheel approach described by Search Engine Land in the 2026 marketing measurement flywheel.
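To make the pilot versus holdout comparison concrete, here is a minimal sketch that computes the lift in a conversion rate (for example MQL→SQL) and a two‑proportion z‑test. The function name `conversion_lift` is hypothetical and the input counts are invented for illustration only; they are not results. A pooled z‑test is one simple choice and assumes the two groups are independent and reasonably large.

```python
from math import erf, sqrt


def conversion_lift(pilot_conv: int, pilot_n: int, holdout_conv: int, holdout_n: int) -> dict:
    """Compare conversion rates between a pilot group and a holdout group."""
    if pilot_n <= 0 or holdout_n <= 0 or holdout_conv <= 0:
        raise ValueError("need positive group sizes and at least one holdout conversion")
    p_pilot = pilot_conv / pilot_n
    p_hold = holdout_conv / holdout_n
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (pilot_conv + holdout_conv) / (pilot_n + holdout_n)
    se = sqrt(pooled * (1 - pooled) * (1 / pilot_n + 1 / holdout_n))
    z = (p_pilot - p_hold) / se
    # Two-sided p-value from the standard normal CDF.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return {
        "pilot_rate": p_pilot,
        "holdout_rate": p_hold,
        "absolute_lift": p_pilot - p_hold,
        "relative_lift": (p_pilot - p_hold) / p_hold,
        "z": z,
        "p_value": p_value,
    }


# Illustrative counts only: 48 of 200 MQLs became SQLs in the pilot, 30 of 200 in the holdout.
result = conversion_lift(48, 200, 30, 200)
print(result)
```

Treat the p‑value as one input among several. With small SMB volumes, the readout should also state the test design, duration, and any seasonality that could explain the difference.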

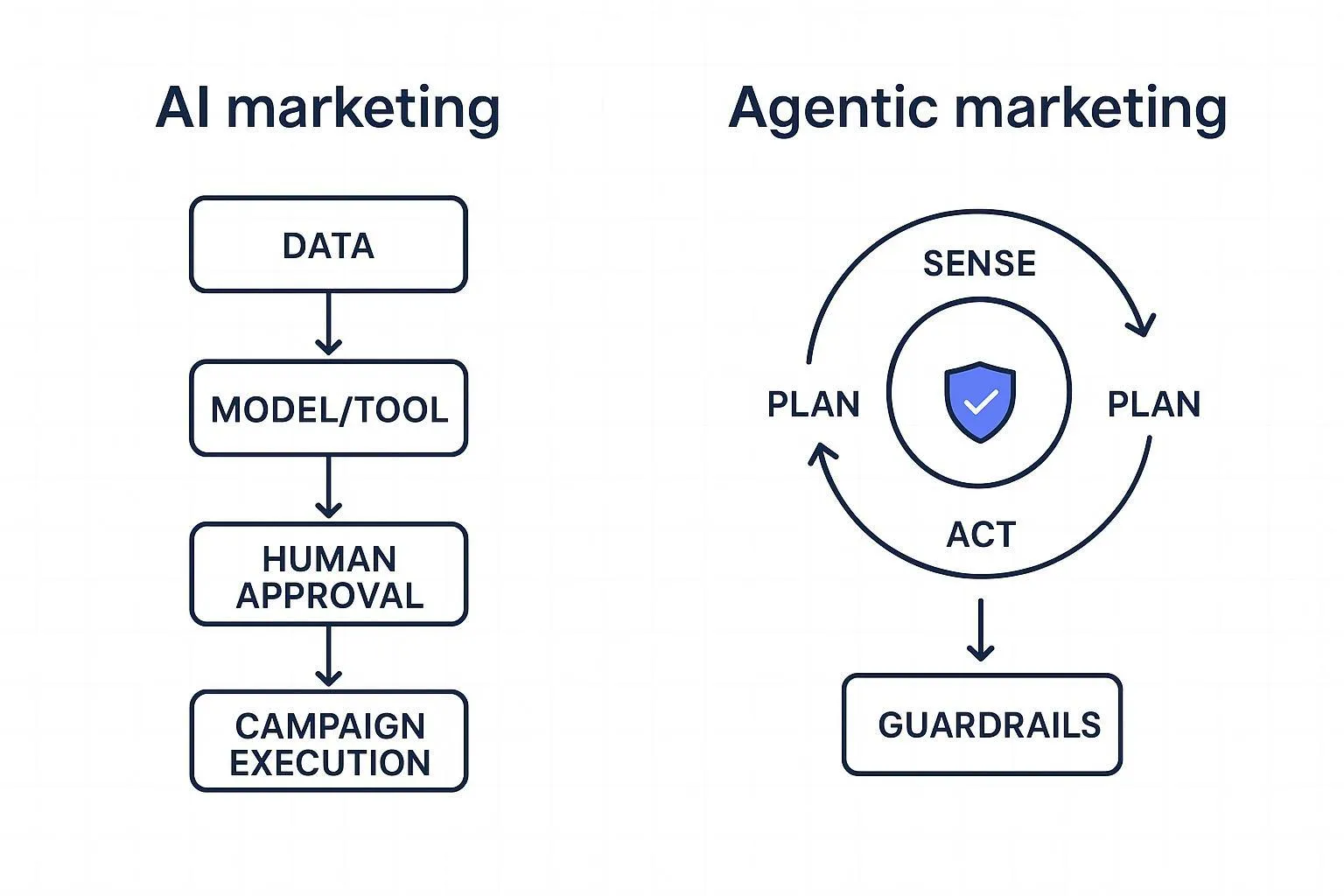

Governance to prevent off‑brand or low‑quality outputs

The most common objection to AI‑assisted content is that it will publish off‑brand or low‑quality work. The creation method isn’t the risk; publishing unhelpful, off‑brand content is. Mitigate it with explicit guardrails: encode your style guide as machine‑readable rules (voice pillars, banned phrases, citation requirements, tone by stage). Insert human‑in‑the‑loop checkpoints for brief approval, fact/reference checks with links, legal/compliance review, and final sign‑off. Maintain versioning and audit logs so prompts, datasets, and edits are traceable. Post‑publish, monitor decay and factual drift, schedule updates, and label provenance where applicable.
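Machine‑readable rules only help if something reads them. As a rough sketch, a lint pass can flag banned phrases and missing citations before a draft reaches a human reviewer. The `lint_draft` function and the `STYLE_GUIDE` dictionary are hypothetical; the rule values reuse the banned phrases and citation minimum from the appendix style guide, and counting markdown links as citations is a simplifying assumption.

```python
import re

# Rule values reuse the appendix style guide; the dict layout itself is an assumption.
STYLE_GUIDE = {
    "banned_phrases": ["cutting-edge", "game-changing", "leverage"],
    "min_citations": 2,
}

# Assumption: a citation is a markdown link with an http(s) URL.
LINK_PATTERN = re.compile(r"\[[^\]]+\]\((https?://[^)\s]+)\)")


def lint_draft(text: str, guide: dict = STYLE_GUIDE) -> list[str]:
    """Return findings for a human reviewer; an empty list means no rule was tripped."""
    findings = []
    lowered = text.lower()
    for phrase in guide["banned_phrases"]:
        # Whole-word match, so "leveraged" would not be flagged by "leverage".
        if re.search(rf"\b{re.escape(phrase)}\b", lowered):
            findings.append(f"banned phrase: {phrase}")
    citations = set(LINK_PATTERN.findall(text))
    if len(citations) < guide["min_citations"]:
        findings.append(
            f"only {len(citations)} cited link(s); {guide['min_citations']} required"
        )
    return findings


draft = (
    "Our cutting-edge agent helps teams publish faster. "
    "See [Source A](https://example.com/source-a)."
)
print(lint_draft(draft))
# prints ['banned phrase: cutting-edge', 'only 1 cited link(s); 2 required']
```

A check like this feeds the human checkpoints rather than replacing them: it removes mechanical misses so reviewers can spend their time on accuracy and brand fit.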

Why this works: Google’s people‑first guidance and spam policies set the bar for quality; NIST’s AI Risk Management Framework adds accountability, transparency, and validation practices that translate to marketing workflows. See NIST AI RMF 1.0 for the governance backbone, and Google’s helpful content guidance linked earlier for SEO risk alignment.

If you want a brand‑voice system to anchor these rules, consider using a dedicated profile that ingests your site and guidelines, then enforces them across research, drafting, and QA. One example that describes this approach is QuickCreator’s voice and governance overview: Brand Intelligence Agent.

Orchestrate research → draft → QA → publish → CRM attribution

Fix the workflow before automating. Map the handoffs, approvals, and failure modes; then connect systems with low‑code tools and APIs. A vendor‑neutral flow looks like this: strategy (topic research, topic matrix + briefs), production (draft to brief with citations and internal links), QA & governance (editorial accuracy, compliance, accessibility), distribution (publish to CMS; auto‑generate email/social snippets; schedule refreshes), and measurement (associate assets to CRM campaigns; standardize UTMs/content IDs; monitor MQL→SQL, influenced/sourced pipeline, and sales cycle).
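One way to reason about handoffs and failure modes before wiring up real tools is to model the flow as ordered stages with approval gates. The sketch below is illustrative only: the stage names follow the vendor‑neutral flow above, while the `Asset` class, the `advance` function, and the placement of the two gates (brief approval before production, final sign‑off before distribution) are assumptions.

```python
from dataclasses import dataclass, field

PIPELINE = ["strategy", "production", "qa_governance", "distribution", "measurement"]

# Assumption: a stage listed here can only be entered once the named human approval exists.
GATES = {"production": "brief_approval", "distribution": "final_signoff"}


@dataclass
class Asset:
    content_id: str
    stage: str = "strategy"
    approvals: set[str] = field(default_factory=set)
    log: list[str] = field(default_factory=list)  # simple audit trail


def advance(asset: Asset) -> str:
    """Move the asset to the next stage unless a required human approval is missing."""
    idx = PIPELINE.index(asset.stage)
    if idx == len(PIPELINE) - 1:
        raise ValueError("asset is already at the final stage")
    next_stage = PIPELINE[idx + 1]
    required = GATES.get(next_stage)
    if required and required not in asset.approvals:
        asset.log.append(f"blocked before {next_stage}: missing {required}")
        return asset.stage
    asset.stage = next_stage
    asset.log.append(f"moved to {next_stage}")
    return asset.stage


asset = Asset(content_id="G-001")
advance(asset)                          # blocked: no brief approval yet
asset.approvals.add("brief_approval")
advance(asset)                          # moves to production
print(asset.stage, asset.log)
```

The log doubles as a lightweight audit trail, which is useful when you later need to show who approved what and where assets stalled.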

Practical example (neutral). A small team ingests their brand site and style guide into an agentic workspace; topic intelligence groups queries by journey stage and exports briefs; drafting runs against those briefs with voice constraints; a quality agent flags missing citations; approved drafts publish directly to WordPress; campaign association and UTMs sync to the CRM for pipeline reporting. A platform like QuickCreator is often used in this role, but comparable flows can be assembled with a mix of research tools, CMS plugins, and automation services. For more on phased rollouts with humans in approvals, monday.com outlines a pragmatic approach in its AI-in-content marketing guidance (2025).

Alternatives to consider (parity examples): Semrush Topic Research or Ahrefs for strategy, WordPress + Zapier/Make for orchestration, and Buffer for social scheduling.

Measure content → pipeline (MQL→SQL, sourced vs. influenced)

Measurement must ladder to revenue questions, not just traffic. Start by connecting each asset to a campaign in your CRM. In HubSpot, this exposes Contact Create, Deal Create, and revenue attribution under multiple models as described in HubSpot’s campaign association and attribution docs. Layer incrementality through geo/time holdouts or platform lift tests to estimate causal impact, then feed results back into planning. For unified, incremental ROI views that blend experiments and platform data, Adobe explains how mix modeling can ingest lift tests in its Mix Modeler overview. As a pipeline-centric analytics reference, RevSure details stage progression and influenced vs. sourced analysis in its solution for content marketing teams.
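The core metrics reduce to simple arithmetic once deals carry content‑touch flags. The following sketch assumes a hypothetical `Deal` record exported from your CRM and treats sourced and influenced pipeline as mutually exclusive buckets to avoid double counting. Definitions vary by team, so align them with your attribution model before reporting. All figures are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    amount: float
    first_touch_content: bool  # content originated the opportunity (sourced)
    any_content_touch: bool    # content appeared somewhere in the journey


def pipeline_summary(mqls: int, sqls: int, deals: list[Deal]) -> dict:
    """Compute MQL-to-SQL conversion plus sourced and influenced pipeline totals."""
    sourced = sum(d.amount for d in deals if d.first_touch_content)
    # Assumption: influenced excludes sourced deals so each deal lands in one bucket.
    influenced = sum(
        d.amount for d in deals if d.any_content_touch and not d.first_touch_content
    )
    return {
        "mql_to_sql": sqls / mqls if mqls else 0.0,
        "sourced_pipeline": sourced,
        "influenced_pipeline": influenced,
    }


# Illustrative records only.
deals = [
    Deal(amount=20000, first_touch_content=True, any_content_touch=True),
    Deal(amount=15000, first_touch_content=False, any_content_touch=True),
    Deal(amount=10000, first_touch_content=False, any_content_touch=False),
]
summary = pipeline_summary(mqls=120, sqls=30, deals=deals)
print(summary)
# prints {'mql_to_sql': 0.25, 'sourced_pipeline': 20000, 'influenced_pipeline': 15000}
```

Computing these per topic cluster, rather than per asset, usually gives more stable numbers at SMB volumes.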

What good looks like: A dashboard where each topic cluster shows time‑to‑publish, approval SLA compliance, stage coverage, MQL→SQL conversion for engaged contacts, influenced pipeline by deal stage, and qualitative QA notes. Keep the success criterion explicit in your reporting: AI agents for content marketing are only successful if they move these pipeline levers.

Case snapshots (composite pilots for SMB teams)

Snapshot A — Strategy‑led pilot for an 18‑person SaaS startup. Baseline: 12 assets/month, 12‑day cycle time, ad‑hoc QA, weak campaign tagging. Intervention: buyer‑journey topic matrix; briefs with E‑E‑A‑T sources; human‑in‑the‑loop QA; CMS automation; CRM campaign association; two content clusters promoted with a time‑based holdout. Metrics to watch: cycle time, approval SLA pass rate, rollback errors, MQL→SQL delta vs. holdout, influenced pipeline, directional change in sales cycle. Readout: report lifts with methods; qualify based on reach/budget and seasonality.

Snapshot B — Multi‑channel distribution with modular content. Baseline: one blog per week, sporadic email, little repurposing. Intervention: split drafts into modular components; automate email/social snippets; schedule refreshes; run a geo‑split incrementality test on two regions. Metrics to watch: distribution coverage/freshness, incremental engagement lift, contact→deal progression rates in test vs. control. Tie the outcomes back to your buyer journey to show how AI agents for content marketing accelerate movement between stages.

Snapshot C — Governance‑first in a regulated services firm. Baseline: brand voice drift, legal rework delays, compliance anxiety. Intervention: machine‑readable style guide; mandatory citations; legal review gate; audit logs; provenance labeling. Metrics to watch: legal review SLA, error/rollback rate, rejection causes, organic visibility stability across updates.

Each snapshot is a pattern you can replicate. Your numbers will vary—what matters is the method and auditability.

Implementation essentials (condensed)

Strategy: Build a topic matrix for two personas with 3–5 problems each and pre‑assigned Measurement Tags. Create briefs with angle, format, internal links, and two E‑E‑A‑T sources.

Production and governance: Encode a machine‑readable style guide, require citations, set approval SLAs, track versions, and run accessibility/compliance checks.

Distribution and measurement: Publish to CMS with content IDs, repurpose to email/social, schedule refreshes, associate to CRM campaigns, implement UTMs, and define MQL→SQL and pipeline metrics. Design one incrementality test (geo or time holdout) for a cluster and run it for 2–4 weeks.

Appendix

Prompts and constraints (example snippet)

System: You are a senior B2B editor. Enforce voice pillars: confident, clear, non‑promotional.

Rules:

- Cite 2 authoritative sources per piece.
- Include an internal link to a relevant stage page.
- Flag unverifiable claims.
- Require human approval before publishing.

Machine‑readable style guide (mini YAML)

    brand_voice:
      pillars: [helpful, precise, neutral]
      banned_phrases: [cutting-edge, game-changing, leverage]
      stage_tone:
        awareness: informative
        consideration: comparative
        decision: directive
    citations:
      required: true
      min_per_post: 2
    qa:
      checks: [facts, claims, accessibility, compliance]
      approval_gates: [editor, legal]

Glossary

Buyer‑journey topic matrix: A structured plan mapping persona problems to content across stages with governance and measurement fields.

Human‑in‑the‑loop: Humans review and approve at key steps to ensure accuracy, safety, and brand fit.

Influenced vs. sourced pipeline: Influenced pipeline covers deals where content touches assisted along the way; sourced pipeline covers deals where content originated the opportunity.

Incrementality test: An experiment (geo/time holdout or RCT) estimating the causal effect of content exposure on outcomes.