How agent-based SEO workflows boost SEO and GEO performance

Step-by-step guide to agent-based SEO workflows: intent mapping, internal linking, GEO localization, and AI citation tracking to grow non-brand organic clicks.

If you’ve watched organic clicks compress as AI Overviews crowd the SERP, you’re not imagining it. The fix isn’t more content; it’s better orchestration. This guide shows how agent-based SEO workflows map intent to revenue, wire internal links, localize for GEO/AI surfaces, and measure what actually moves non-brand clicks and qualified sessions.

TL;DR: Coordinate specialized agents to prioritize MOFU/BOFU intent, enforce internal-link governance and schema, localize genuinely, and track AI citation visibility alongside classic SEO KPIs.

Why agent-based workflows matter now

Click share is shifting. Ahrefs’ December 2025 update, a 300k-keyword study with its methodology published for transparency, found that position-one organic CTR falls by roughly 58% when AI Overviews appear; see “Update: AI Overviews Reduce Clicks by 58%” (2026). Seer Interactive’s dataset of 25.1M impressions across 3,119 informational queries reported a 61% organic CTR decline on AIO queries and a 68% paid CTR decline between June 2024 and September 2025; Search Engine Land summarized the study in “Google AI Overviews drive 61% drop in organic CTR” (2025).

Citations matter, but overlap isn’t total. According to a BrightEdge analysis reported by Search Engine Journal, only about 54% of AI Overview citations overlap with the top organic results, which leaves new discovery paths open; see “AI Overviews overlap organic by 54%” (2025). The implication: winning today means pairing classic SEO KPIs (non-brand clicks, qualified sessions) with AI search KPIs (citation rate, inclusion share), which is a natural fit for agent coordination.

Map intent to revenue for B2B SaaS

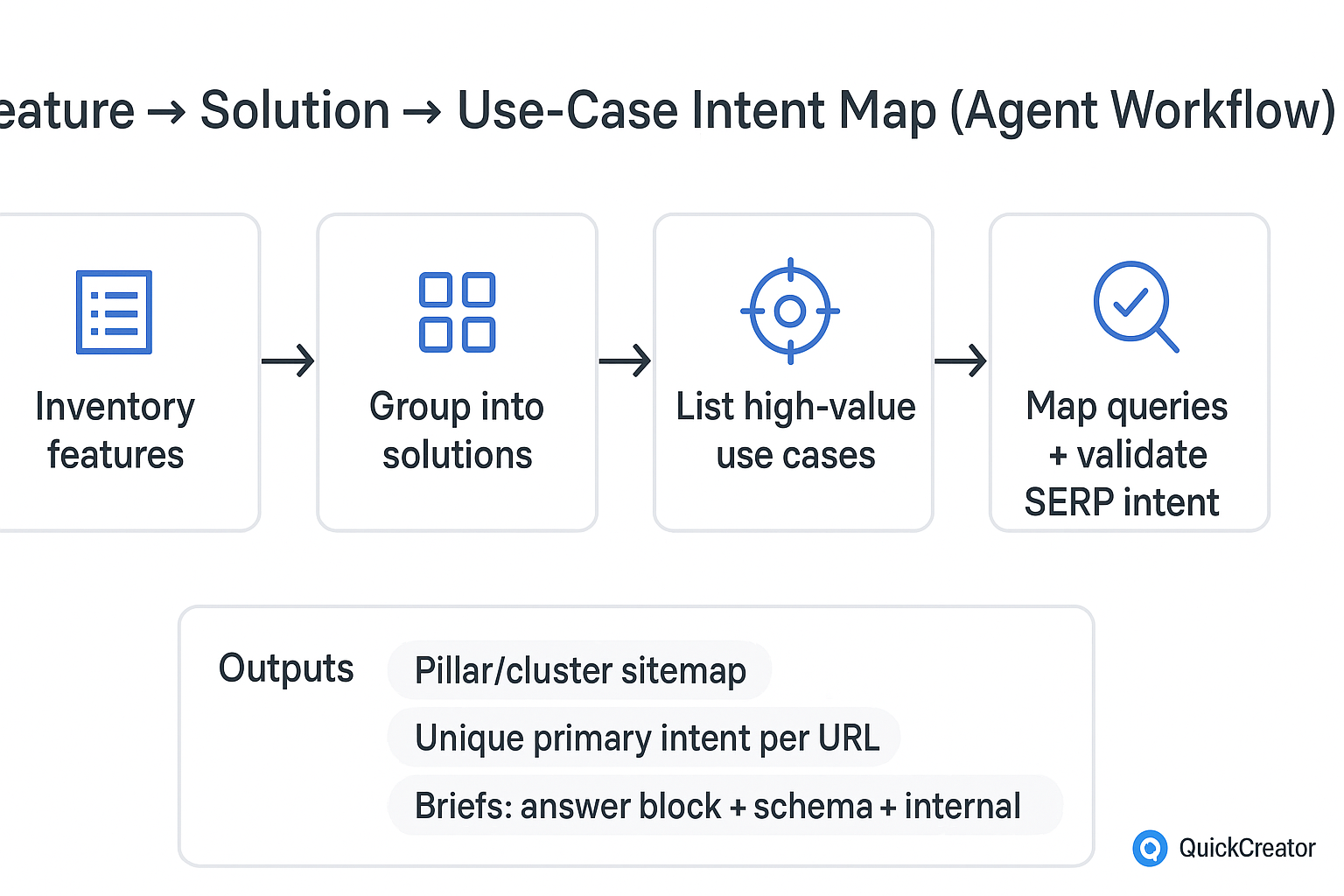

B2B SaaS wins when features, solutions, and use cases are mapped to search intent that correlates with pipeline. Start by inventorying product features, grouping them into buyer-facing solutions, and enumerating use cases. Then map queries to each node and validate the SERP to avoid cannibalization.

A simple, repeatable flow you can adopt today:

Inventory features → group into solutions → list high-value use cases. For each, pull queries from Google Search Console (GSC) and competitor gap tools. Label funnel stage (MOFU/BOFU) and revenue relevance.

Build pillars around solutions; attach feature and use-case clusters. Assign a primary keyword and entities to cover per URL. Ensure each URL owns a unique primary intent.

Validate SERP intent. Where two URLs chase the same intent, consolidate and 301 to one canonical target; update internal links accordingly.

Create content briefs that include: problem/solution framing, answer block (2–4 sentences), schema requirements, and required internal links (up to pillar, down to clusters, across to siblings where contextually helpful).

This structure turns “topics” into revenue-aligned routes for crawlers and LLMs. Think of it as giving both Googlebot and AI engines a well-signposted site map that explains what to rank and what to cite.
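The “one URL, one primary intent” check in the flow above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the `PageIntent` fields, the example URLs, and the rule “keep the URL with the most non-brand clicks” are all assumptions made for the example, and a human should still confirm which page deserves to survive.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PageIntent:
    """One row of a hypothetical intent map (field names are assumptions)."""
    url: str
    primary_intent: str  # normalized label, e.g. "rbac for saas"
    funnel_stage: str    # "MOFU" or "BOFU"
    clicks: int          # non-brand clicks taken from a GSC export


def find_intent_collisions(pages):
    """Return intents owned by more than one URL, with a keep/redirect proposal."""
    by_intent = defaultdict(list)
    for page in pages:
        by_intent[page.primary_intent.strip().lower()].append(page)

    collisions = {}
    for intent, group in by_intent.items():
        if len(group) > 1:
            # Assumption: the URL with the most non-brand clicks is the stronger one.
            ranked = sorted(group, key=lambda p: p.clicks, reverse=True)
            collisions[intent] = {
                "keep": ranked[0].url,
                "redirect": [p.url for p in ranked[1:]],
            }
    return collisions


pages = [
    PageIntent("/solutions/access-control", "rbac for saas", "BOFU", 120),
    PageIntent("/blog/what-is-rbac", "RBAC for SaaS", "MOFU", 45),
    PageIntent("/features/audit-logs", "saas audit logging", "BOFU", 80),
]
collisions = find_intent_collisions(pages)
```

Here `collisions` proposes keeping `/solutions/access-control` and redirecting `/blog/what-is-rbac`; the 301 itself and the internal link updates remain manual, reviewed steps.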

An SOP for agent-based SEO workflows (B2B SaaS anchor)

Objective: Increase non-brand organic clicks and qualified sessions by building and maintaining a feature → solution → use-case cluster with internal links, localization, and AIO readiness.

Agent roles and handoffs

Research Agent — Inputs: GSC export of non-brand queries, competitor SERPs, product feature list. Output: draft intent map with MOFU/BOFU labels and revenue tags. Human QA: confirm business priorities; remove brand queries.

Cluster Architect — Inputs: approved intent map. Output: pillar/cluster sitemap with unique primary intents and entity coverage per URL. Human QA: check for cannibalization and overlap.

Briefing Agent — Inputs: sitemap. Output: content briefs with answer blocks, schema specs, required links, and E-E-A-T requirements (author bios, references). Human QA: approve tone, claims, and compliance notes.

Drafting Agent — Inputs: approved briefs and knowledge base. Output: first drafts optimized for on-page basics (title, H1/H2s, concise intro, answer block, semantic HTML). Human QA: fact-checking and editorial pass.

Linking Agent — Inputs: published or near-final drafts. Output: internal link plan with anchor text, locations, and limits per page; orphan-page fixes. Human QA: ensure anchors are descriptive and not repetitive.

GEO Agent — Inputs: locations/service areas (if applicable), testimonials, addresses or service-area statements. Output: localized copies or sections, JSON-LD (Organization/LocalBusiness/FAQ), and hreflang suggestions. Human QA: verify genuine local proof points; validate schema.

AIO/GEO Monitor — Inputs: target query set. Output: AIO inclusion count, citation rate, Perplexity/ChatGPT citation share, and weekly deltas. Human QA: interpret trends; trigger updates.
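As one concrete piece of the Research Agent handoff, the “remove brand queries” step can be pre-screened before human QA. The sketch below assumes a plain list of query strings exported from GSC and a hand-maintained list of brand terms; the function name and the sample terms are hypothetical, and the substring match is deliberately simple, so misspellings of the brand would need to be added to the list.

```python
import re


def split_brand_queries(queries, brand_terms):
    """Split queries into (non_brand, brand) using case-insensitive substring matches."""
    if not brand_terms:
        # Nothing to match against: treat every query as non-brand.
        return list(queries), []

    pattern = re.compile("|".join(re.escape(t) for t in brand_terms), re.IGNORECASE)
    non_brand, brand = [], []
    for query in queries:
        (brand if pattern.search(query) else non_brand).append(query)
    return non_brand, brand


queries = ["acme pricing", "rbac for saas", "Acme vs rival", "saas audit logging"]
non_brand, brand = split_brand_queries(queries, ["acme"])
```

The brand bucket is worth keeping rather than discarding, so the reviewer can spot false positives before the intent map is approved.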

A neutral example of platform orchestration

A centralized agent platform such as QuickCreator can be used to host prompts, coordinate these agent roles, and store your brand voice and facts. Use it (or an equivalent stack) as the control room for briefs, link plans, and schema QA — the value is the orchestration, not any one component.

Internal linking that clusters and converts

Internal links are how you assert meaning and priority. Google’s guidance emphasizes logical structure, crawlable navigation, and meaningful anchor text; see the SEO Starter Guide and related documentation on sitelinks and cross-references. Translate that into pragmatic rules:

Wire the hierarchy: pillar links down to all cluster pages; every cluster links up to its pillar. Add contextual sibling links where they add clarity for readers.

Keep anchors descriptive: use the target page’s topic or promise (e.g., “role-based access control for SaaS security”), not “read more.” Don’t reuse the same anchor for different destinations.

Eliminate orphans: every cluster page should have at least two in-links (from pillar and one sibling or relevant blog post). Prioritize in-body links over nav-only exposure.

Avoid cannibalization: if two URLs chase the same primary intent, merge content, 301 the weaker to the stronger, and update anchors accordingly.

Evidence note: 2024–2026 controlled studies quantifying internal linking’s isolated lift are limited. Treat these as best-practice governance aligned with Google’s documentation and measure impacts locally.
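The linking rules above lend themselves to an automated check on crawl output. The following is a rough sketch, assuming the crawl has already been reduced to `(source_url, target_url, anchor_text)` tuples; that input shape, the function name, and the sample URLs are assumptions for illustration, not the export format of any particular crawler.

```python
from collections import defaultdict


def audit_internal_links(links, cluster_pages, min_inlinks=2):
    """Flag under-linked cluster pages and anchors reused for different targets.

    links: iterable of (source_url, target_url, anchor_text) tuples.
    """
    inlink_sources = defaultdict(set)
    anchor_targets = defaultdict(set)
    for source, target, anchor in links:
        if source != target:  # self-links do not count as in-links
            inlink_sources[target].add(source)
        anchor_targets[anchor.strip().lower()].add(target)

    under_linked = sorted(
        page for page in cluster_pages if len(inlink_sources[page]) < min_inlinks
    )
    reused_anchors = {
        anchor: sorted(targets)
        for anchor, targets in anchor_targets.items()
        if len(targets) > 1
    }
    return {"under_linked": under_linked, "reused_anchors": reused_anchors}


links = [
    ("/pillar", "/cluster-a", "role-based access control for SaaS security"),
    ("/cluster-b", "/cluster-a", "RBAC setup guide"),
    ("/pillar", "/cluster-b", "role-based access control for SaaS security"),
]
report = audit_internal_links(links, ["/cluster-a", "/cluster-b"])
```

In this sample, `/cluster-b` has a single in-link and one anchor points at two destinations, so both rules are flagged for the Linking Agent's human reviewer.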

GEO and AI Overviews: local signals that earn citations

Localization isn’t boilerplate city swapping. It’s proving local relevance and making those signals machine-readable.

Structured data: Implement Organization and, where applicable, LocalBusiness and FAQ/HowTo schema with required properties. Validate with Google’s tools, following its structured data documentation. For hybrid or service-area motions, follow Google Business Profile guidance on service areas (don’t expose a private address; verify service areas correctly).

Content proof points: Add location-specific testimonials, service areas, regulatory notes, and support hours. If you serve multiple regions or languages, consider hreflang following Google’s international SEO guidance.

Why it helps AIO/LLMs: GEO (generative engine optimization) frames how content is presented to LLMs and RAG systems to increase inclusion probability. The academic treatment characterizes GEO as black-box optimization using style, structure, and citations; see the 2024 arXiv paper on the generative engine optimization framework and the Wikipedia overview of GEO.

Operationally, you’re aiming to be both rankable and citable. That means clear, source-backed answer blocks, structured data, and fresh, location-relevant context.
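To make the structured-data step concrete, here is a small sketch that assembles LocalBusiness JSON-LD with Python's standard `json` module. The property names (`name`, `url`, `areaServed`, `telephone`, `address`) are schema.org vocabulary; the function name, the example business, and the choice to omit `address` for service-area businesses are assumptions for illustration. It does not check which properties Google requires for a given rich result, so the output still needs validation in Google's tools.

```python
import json


def build_local_business_jsonld(name, url, area_served, telephone=None, address=None):
    """Return a JSON-LD string for a schema.org LocalBusiness.

    address: optional dict of PostalAddress fields (e.g. streetAddress,
    addressLocality); leave it as None to avoid exposing a private address.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "areaServed": list(area_served),
    }
    if telephone:
        data["telephone"] = telephone
    if address:
        data["address"] = {"@type": "PostalAddress", **address}
    return json.dumps(data, indent=2)


# Hypothetical service-area business: no street address is published.
jsonld = build_local_business_jsonld(
    name="Example Co",
    url="https://example.com/austin",
    area_served=["Austin, TX", "Round Rock, TX"],
)
```

The resulting string would be embedded in a `<script type="application/ld+json">` tag on the localized page and checked before publish.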

Troubleshooting and safe-automation guardrails

Intent collisions: If two pages start ranking for the same query, consolidate. Keep the stronger URL, 301 the other, and retest internal links in a crawl. Update the brief repository so the overlap doesn’t recur.

Thin local pages: Boilerplate localization rarely sticks. Add real proof (testimonials, service areas, pricing notes, support coverage) and correct LocalBusiness/Organization schema, then request indexing after deployment.

Schema errors: Treat warnings and errors differently, but in both cases validate with the Rich Results Test before and after deployment. Monitor Google’s visibility changes for FAQ/HowTo and adapt.

Draft drift: If agents hallucinate or drift off-brief, add stricter definitions in your briefs (required sources, claims to avoid, author bios, and review steps). Keep a human-in-the-loop review before publish.

AIO volatility: Expect week-to-week swings in inclusion. Timestamp snapshots and evaluate trends over 4–8 weeks, not days.

Measure what matters: KPIs for SEO + GEO/AI

You need traditional SEO metrics and AI visibility metrics living side by side. Track weekly and review monthly.

| KPI | What it measures | How to track |
|---|---|---|
| Non-brand organic clicks | Primary growth outcome | GSC query filter excluding brand; track by cluster and landing page |
| Qualified sessions | Traffic that matches conversion intent | GA4 segments for target pages + engaged sessions + conversion events |
| AIO citation rate | How often your pages are cited in AI Overviews | Use tool modules documented in Ahrefs’ guide to tracking AI Overviews; archive SERP snapshots |
| Inclusion share | % of target queries that surface an AIO citing you | Third-party SERP trackers with AIO filters; maintain a fixed query set |
| Internal link coverage | Governance health of clusters | Crawl stats: in-links per cluster page; orphan count; anchor uniqueness |

Cadence and thresholds: Review non-brand clicks and qualified sessions weekly; evaluate AIO metrics monthly due to volatility. Flag clusters where non-brand clicks decline while AIO presence grows — you may need concise answer blocks, fresh citations, or schema fixes to regain inclusion.
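The two AI visibility KPIs can be computed from archived SERP snapshots with a short script. The sketch below rests on assumed definitions, since the article does not give formulas: citation rate is taken as cited snapshots divided by snapshots where an AI Overview appeared, and inclusion share as target queries with at least one citing AI Overview divided by all target queries. The `Snapshot` structure is hypothetical and would be filled from whatever tracker you use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """One archived SERP observation (hypothetical structure)."""
    query: str
    aio_present: bool  # an AI Overview appeared for this query
    cited: bool        # the AI Overview cited one of your pages


def aio_metrics(snapshots, target_queries):
    """Compute citation rate and inclusion share under the assumed definitions."""
    with_aio = [s for s in snapshots if s.aio_present]
    cited = [s for s in with_aio if s.cited]

    citation_rate = len(cited) / len(with_aio) if with_aio else 0.0
    cited_queries = {s.query for s in cited} & set(target_queries)
    inclusion_share = len(cited_queries) / len(target_queries) if target_queries else 0.0
    return {"citation_rate": citation_rate, "inclusion_share": inclusion_share}


target_queries = ["rbac for saas", "saas audit logging", "sso pricing", "scim setup"]
snapshots = [
    Snapshot("rbac for saas", aio_present=True, cited=True),
    Snapshot("saas audit logging", aio_present=True, cited=False),
    Snapshot("sso pricing", aio_present=False, cited=False),
    Snapshot("scim setup", aio_present=True, cited=True),
]
metrics = aio_metrics(snapshots, target_queries)
```

Running this on timestamped snapshot sets and comparing the results across several weeks fits the monthly review cadence better than reacting to a single reading.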

What this looks like in practice (neutral example)

Teams often centralize prompts, briefs, internal link matrices, and schema validation inside an orchestration hub. A platform like QuickCreator supports this coordination: store your brand voice and facts, run research and drafting agents against approved briefs, generate internal link plans for review, and validate schema before publish. Keep the tone neutral, enforce human review, and prefer smaller pilots before full-scale rollout.

Next steps: a 2–4 week pilot

Weeks 1–2: Build the intent map and sitemap, QA cannibalization, and approve briefs for one solution pillar with 3–5 cluster pages. Stand up internal link rules and schema specs. Localize one variant if applicable.

Weeks 3–4: Draft, publish, and link the cluster; validate structured data; set up AIO/GEO tracking dashboards; archive SERP snapshots. Monitor non-brand clicks and qualified sessions weekly; review AIO inclusion and citation rate after four weeks.

Optional: If you prefer an agentic control room instead of stitching tools, you can adapt the templates in your own stack or explore QuickCreator to coordinate the agents and QA steps described here.

References for further study (all cited inline): Ahrefs (2026), Seer Interactive and Search Engine Land (2025), Search Engine Journal (2025), Google Search Central docs (current), Google Business Profile Help (current), arXiv GEO framework (2024).