AI marketing agents explained: architecture, data, and agentic workflows (with an SEO pipeline example)

Practical, beginner-friendly guide to AI marketing agents: architecture, data layers, and agentic workflows, plus an SEO content pipeline walkthrough and examples.

If you’re leading a small marketing team and wondering how “AI agents” actually work in practice, this guide breaks it down with plain definitions and a concrete, step-by-step SEO example. We’ll unpack what AI marketing agents are, how agentic workflows are architected, the data they rely on, and how to run a safe, human-in-the-loop pipeline from research to publish—without the hype.

Quick glossary (read this first)

Agent: An AI-powered software entity that perceives inputs, reasons, and takes actions to complete a task (for example, drafting an outline).

Agentic workflow/system: A coordinated set of agents, tools, and data that plan and execute multi-step tasks toward a goal with minimal human input.

Orchestrator: The controller that routes tasks, manages state and memory, and decides which agent or tool runs next.

Planner: A role/pattern that decomposes a goal into steps (e.g., choose keywords, then write a brief).

Executor: An agent or tool that carries out a specific step (e.g., a Writer generating the first draft).

Critic/Reviewer: An agent (often LLM-as-judge) that scores or edits outputs against rules like brand voice and SEO.

Memory: Storage for prior context and results so agents make better decisions across steps.

RAG/private knowledge base: Retrieval-augmented generation that grounds outputs in approved documents to reduce hallucinations.

HITL: Human-in-the-loop checkpoints where a person approves or adjusts outputs before the workflow continues.

What are AI marketing agents?

In simple terms, AI marketing agents are specialist software components that can perceive context, plan, and act on your behalf for specific marketing jobs (like keyword research, drafting, or optimization). Authoritative overviews describe agentic workflows as sequences of coordinated agents and tools with optional human approvals and long-running execution. See IBM’s explanation of agentic workflows and orchestration (2025) and MIT Sloan’s plain-language agentic AI overview (2025).

It also helps to distinguish a single “agent” from an end-to-end “agentic system.” Moveworks clarifies these differences—scope, reasoning, autonomy, and orchestration—in their agents vs. agentic systems explainer (2025). In this guide, we’ll use “AI marketing agents” as the practical building blocks, and “agentic workflows” as the complete pipelines that combine them.

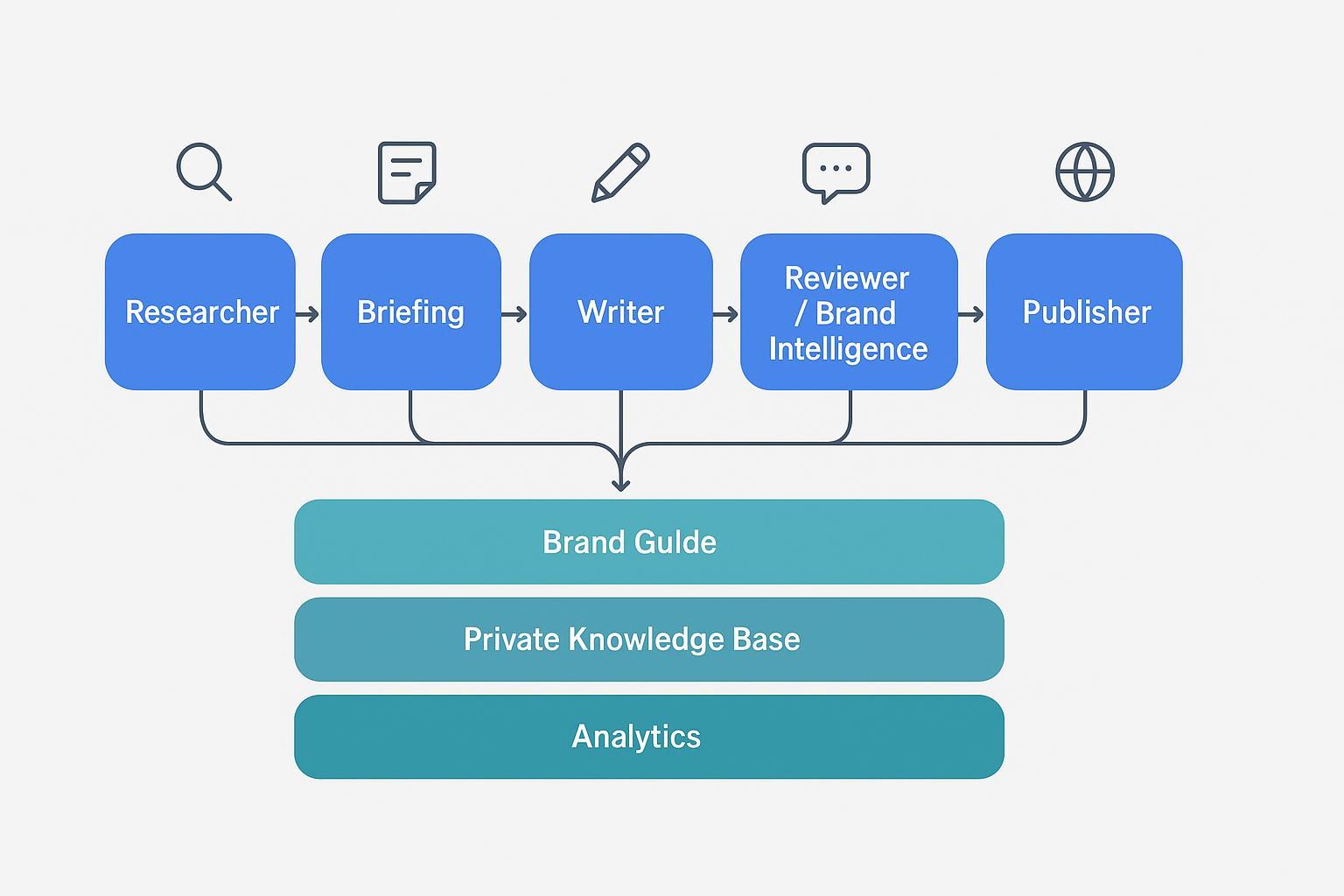

Core architecture: components and data layers

A beginner-friendly architecture includes:

Orchestrator: A controller that manages the stateful workflow, routing tasks and capturing memory. Popular frameworks document these patterns well: LangGraph (LangChain) graph-based workflows and Microsoft AutoGen teams.

Agents and tools: Specialists such as a Researcher, Briefing agent, Writer, Reviewer/Critic, Optimizer, and Publisher. Tools include web search, analytics, CMS, and editing utilities.

Memory and persistence: Storing intermediate outputs (e.g., approved briefs, voice rules) so later steps stay consistent.

Data layers: Brand style guide (voice, terms), private knowledge base for RAG (product facts, docs), and analytics (Google Analytics 4, or GA4, plus rank tracking) that feed back into optimization.

Governance: Human-in-the-loop approvals, role-based permissions, and evaluation checks to keep outputs high-quality and brand-safe.
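To make the components above more concrete, here is a minimal sketch of an orchestrator that runs steps in order, carries shared state as memory, and pauses at a human approval gate. The names `Step`, `Orchestrator`, and the stub agents are hypothetical and written for this guide; they are not the API of LangGraph, AutoGen, or any other framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

State = Dict[str, str]  # shared memory handed from step to step


@dataclass
class Step:
    name: str
    run: Callable[[State], State]  # the agent or tool behind this step
    needs_approval: bool = False   # True places a HITL gate after the step


@dataclass
class Orchestrator:
    steps: List[Step]
    approve: Callable[[str, State], bool]  # stand-in for a human decision
    log: List[str] = field(default_factory=list)

    def execute(self, state: State) -> State:
        for step in self.steps:
            state = step.run(dict(state))  # pass a copy; the step returns the new state
            self.log.append(f"ran:{step.name}")
            if step.needs_approval and not self.approve(step.name, state):
                self.log.append(f"halted:{step.name}")
                break  # stop here until a person approves
        return state


# Stub agents: each one only adds a field to the shared state.
def research(state: State) -> State:
    state["keyword"] = "ai marketing agents"
    return state


def brief(state: State) -> State:
    state["brief"] = f"Outline for {state['keyword']}"
    return state


def draft(state: State) -> State:
    state["draft"] = f"Draft following: {state['brief']}"
    return state


STEPS = [
    Step("research", research),
    Step("brief", brief, needs_approval=True),
    Step("draft", draft),
]

pipeline = Orchestrator(STEPS, approve=lambda name, state: True)
print(pipeline.execute({}))
print(pipeline.log)
```

In a real setup the `approve` callback would wait for a person (for example through a review queue) instead of returning immediately, and the state would be persisted so a halted run can resume later.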

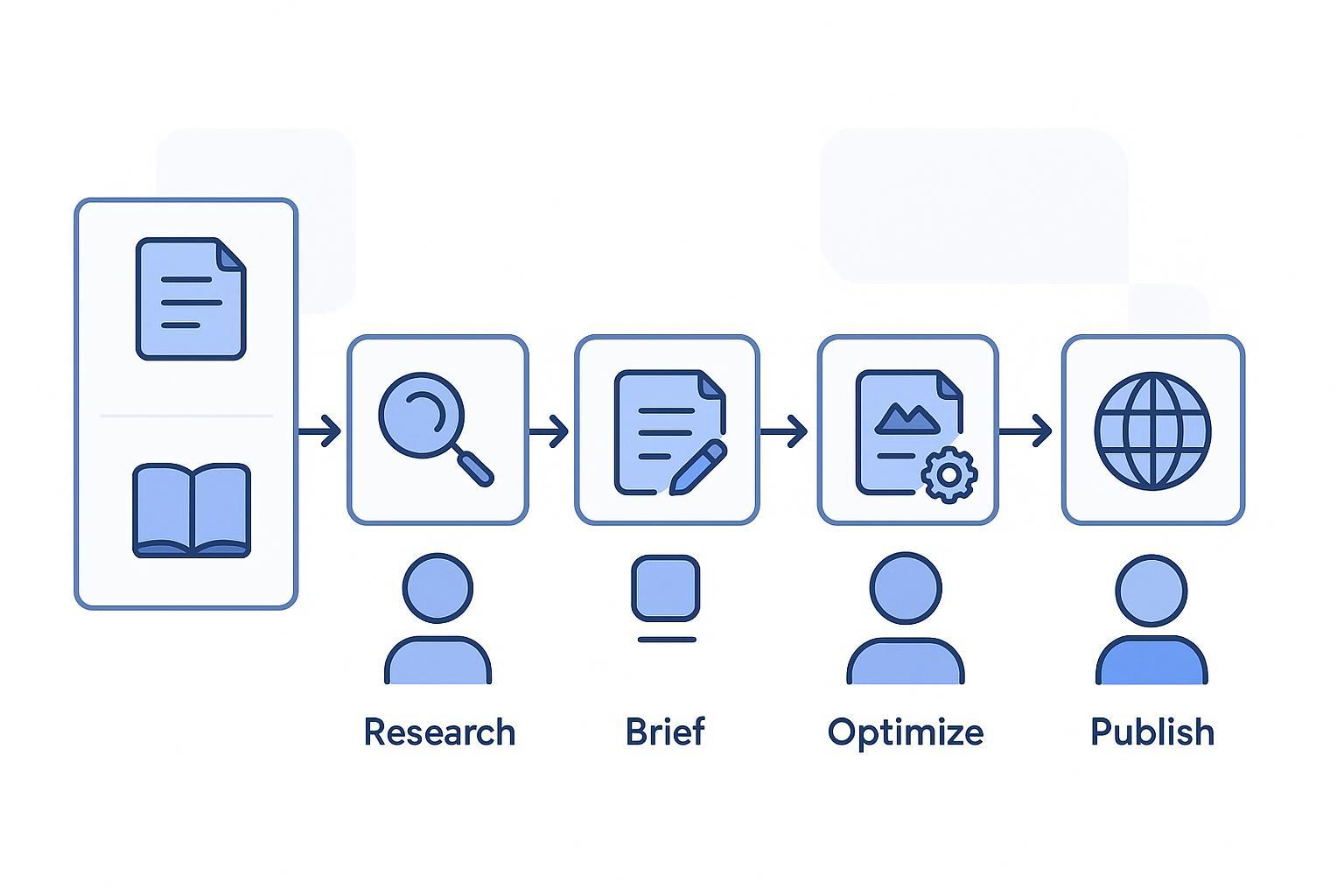

The SEO pipeline at a glance (swimlane diagram)

Caption: Research → Brief → Draft → Optimize → Publish, with Brand Guide, Private Knowledge Base, and Analytics feeding decisions across the flow.

Walkthrough: a 5-step SEO content pipeline powered by AI marketing agents

This example focuses on one hero use case: producing a high-quality, on-brand SEO blog post. Think of the orchestrator as a project manager that hands work to specialists and asks a reviewer to check the result before moving on.

1) Research → Topic and keyword discovery

Purpose: Identify search-intent-aligned topics with a realistic chance to rank.

How it works: A Topic Intelligence/Researcher agent gathers seed inputs (your ideal customer profile, or ICP, plus seed keywords and competitor URLs), analyzes SERPs, and proposes a short list of targets with intent notes and questions to answer. A private knowledge base helps align topics with product positioning and brand priorities.

Example starter prompt: “Identify keywords for ‘AI marketing agents’ with intent, SERP features, top questions, and a brief rationale for each.”

Quality checks: Focus on intent coverage over raw volume; avoid keyword stuffing later. Run a quick human sanity check.
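One way to picture "intent coverage over raw volume" is a small ranking function. The sketch below is an assumption about how a Researcher agent's output could be filtered, not a description of any tool: the `Candidate` fields, the sample keywords, and the volume numbers are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    keyword: str
    intent: str            # e.g. "informational" or "commercial"
    monthly_volume: int    # made-up numbers for illustration
    questions: List[str]   # reader questions the article could answer


def prioritize(candidates: List[Candidate], target_intents: List[str]) -> List[Candidate]:
    """Drop off-intent keywords, then rank by questions covered; volume only breaks ties."""
    matching = [c for c in candidates if c.intent in target_intents]
    return sorted(matching, key=lambda c: (len(c.questions), c.monthly_volume), reverse=True)


candidates = [
    Candidate("ai marketing agents", "informational", 900,
              ["What are they?", "How do they work?"]),
    Candidate("ai marketing software pricing", "commercial", 5000,
              ["How much does it cost?"]),
    Candidate("agentic workflow example", "informational", 300,
              ["What does a pipeline look like?"]),
]

for c in prioritize(candidates, ["informational"]):
    print(c.keyword, len(c.questions), c.monthly_volume)
```

The high-volume commercial keyword is dropped because it does not match the target intent, which is the behavior the quality check asks for. A person should still review the short list before it moves on.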

2) Brief → Outline and sources

Purpose: Translate the target query into a clear brief (H1–H3s, FAQs, sources, meta targets) so drafting stays aligned.

How it works: A Briefing agent drafts the outline and compiles approved references drawn from your private KB and authoritative web sources. A Critic checks completeness (does the outline match search intent? are sources credible?), and a human approves the brief before writing begins.

Example starter prompt: “Create a content brief for ‘AI marketing agents’ including sections, FAQs, target subheadings, and 3–5 authoritative sources.”

Quality checks: Require citations in the brief; include a HITL gate before drafting. Framework docs like LangGraph’s HITL/persistence patterns show how stateful stops and approvals can work.
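The brief and its approval gate can be sketched as a small data structure plus two checks. This is a simplified illustration under stated assumptions: the `Brief` fields and the `kb://` source identifiers are hypothetical, and the critic here only checks structure. Judging whether sources are credible or whether the outline matches search intent still needs an LLM reviewer or a person.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Brief:
    target_query: str
    h1: str = ""
    sections: List[str] = field(default_factory=list)
    faqs: List[str] = field(default_factory=list)
    sources: List[str] = field(default_factory=list)
    approved_by: Optional[str] = None  # set by a person, never by an agent


def critique_brief(brief: Brief, min_sources: int = 3, max_sources: int = 5) -> List[str]:
    """Return a list of completeness problems; an empty list means the brief passes."""
    issues = []
    if not brief.h1:
        issues.append("missing H1")
    if not brief.sections:
        issues.append("no sections")
    if not brief.faqs:
        issues.append("no FAQs")
    if not min_sources <= len(brief.sources) <= max_sources:
        issues.append(
            f"expected {min_sources}-{max_sources} sources, found {len(brief.sources)}"
        )
    return issues


def ready_for_draft(brief: Brief) -> bool:
    """The HITL gate: drafting starts only after checks pass and a person signs off."""
    return not critique_brief(brief) and brief.approved_by is not None


brief = Brief(
    target_query="ai marketing agents",
    h1="AI marketing agents explained",
    sections=["What they are", "Core architecture"],
    faqs=["Do I need to write code?"],
    sources=["kb://positioning"],  # hypothetical knowledge-base reference
)
print(critique_brief(brief))   # reports the source count problem
print(ready_for_draft(brief))  # False until fixed and approved
```

Keeping `approved_by` as a field on the brief means the approval travels with the artifact, so later steps can verify it rather than trusting that the gate was run.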

3) Draft → First article version

Purpose: Generate a readable, brand-aligned draft that follows the brief and includes citations and image suggestions.

How it works: A Writer agent produces the draft, and a Brand Intelligence agent reviews tone, terminology, and structure against your style guide. Retrieval from your private knowledge base keeps product facts grounded.

Example starter prompt: “Write the opening section for the brief above in a friendly, plain style. Keep sentences varied and avoid hype.”

Quality checks: Enforce brand voice and factual grounding; have a human editor review the draft for clarity and accuracy. Google’s guidance permits AI-generated content when it’s people-first and helpful; see the policy context in Google’s AI content guidance (2024).

Helpful background on reviewer patterns: Vellum summarizes planner–executor and critic (LLM-as-judge) loops for production reliability—see Vellum’s design patterns overview (2025).
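To show what "retrieval from your private knowledge base" means in the Draft step, here is a toy version of the idea. It assumes a tiny in-memory knowledge base and ranks documents by shared words; production systems usually rely on embeddings and a vector store instead. The document ids and texts are invented for illustration.

```python
import re
from typing import Dict, List


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: Dict[str, str], top_k: int = 2) -> List[str]:
    """Return ids of the documents sharing the most words with the query."""
    query_tokens = tokenize(query)
    scored = []
    for doc_id, text in documents.items():
        overlap = len(query_tokens & tokenize(text))
        if overlap:
            scored.append((overlap, doc_id))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best overlap first, then id
    return [doc_id for _, doc_id in scored[:top_k]]


def build_prompt(question: str, documents: Dict[str, str], top_k: int = 2) -> str:
    """Assemble a grounded prompt that limits the writer to the retrieved sources."""
    ids = retrieve(question, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in ids)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n"
        f"Question: {question}"
    )


# Hypothetical knowledge-base entries.
KB = {
    "pricing": "The Pro plan includes five seats and priority support.",
    "voice": "Write in a friendly plain style and avoid hype.",
    "product": "The editor supports WordPress publishing and scheduling.",
}

print(build_prompt("Which plan includes priority support?", KB))
```

The point of the pattern is that the Writer agent receives approved facts alongside the question, which gives a reviewer something concrete to check each claim against.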

4) Optimize → On-page and helpfulness checks

Purpose: Improve intent coverage, readability, and on-page elements (metadata, internal links, schema if relevant).

How it works: An Optimizer agent reviews headings, meta, alt text, internal links, and overall helpfulness using a checklist. A Critic flags issues and the human owner resolves edge cases.

Example starter prompt: “Evaluate this draft against on-page SEO best practices. Suggest improvements to title, H2s, internal links, and meta description. Avoid keyword stuffing.”

Quality checks: Follow Google Search Essentials and avoid mass-producing low-value pages, which Google’s spam policies treat as scaled content abuse. For fundamentals, see Google’s Search guidance on AI content and policies (2023).
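Part of the Optimizer's checklist can be expressed as plain rules, as in the sketch below. The thresholds (60 characters for titles, 160 for meta descriptions, 3% keyword density) are common rules of thumb chosen for this example, not limits published by Google, and the function name and input shapes are assumptions.

```python
import re
from typing import Dict, List


def on_page_issues(
    title: str,
    meta_description: str,
    body: str,
    keyword: str,
    images: List[Dict[str, str]],
    max_title: int = 60,         # rule-of-thumb limit, not an official one
    max_meta: int = 160,         # rule-of-thumb limit, not an official one
    max_density: float = 0.03,   # arbitrary threshold for this sketch
) -> List[str]:
    """Return a list of on-page problems; an empty list means no rule fired."""
    issues = []
    if len(title) > max_title:
        issues.append("title too long")
    if keyword.lower() not in title.lower():
        issues.append("keyword missing from title")
    if not meta_description:
        issues.append("missing meta description")
    elif len(meta_description) > max_meta:
        issues.append("meta description too long")

    # Share of body words taken up by the exact keyword phrase.
    words = re.findall(r"[a-z0-9']+", body.lower())
    hits = body.lower().count(keyword.lower())
    density = hits * len(keyword.split()) / max(len(words), 1)
    if density > max_density:
        issues.append("possible keyword stuffing")

    for image in images:
        if not image.get("alt"):
            issues.append(f"missing alt text: {image.get('src', 'unknown')}")
    return issues


print(on_page_issues(
    title="Marketing tips",
    meta_description="",
    body="AI marketing agents AI marketing agents AI marketing agents are great",
    keyword="AI marketing agents",
    images=[{"src": "a.png", "alt": ""}],
))
```

Rules like these catch mechanical problems only. Whether the page is actually helpful is a judgment for the Critic and the human owner.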

5) Publish → Scheduling and distribution

Purpose: Ship the post to your CMS with an audit trail, then monitor performance.

How it works: A Publisher agent packages the final content, confirms accessibility basics (alt text, headings), and prepares the post for scheduling. The orchestrator enforces a final HITL approval gate before publish and logs a changelog/version.

Example starter prompt: “Prepare this article for WordPress with images, alt text, metadata, canonical, and category assignments. Flag any missing pieces.”

Quality checks: Confirm authorship attribution, schema where relevant, and internal links. Track analytics (GA4) and SERP position for feedback into the next iteration.
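The final gate and audit trail might look like the following sketch. It is deliberately CMS-agnostic: nothing here calls WordPress or any other real API, and the field names, `publish` function, and changelog format are assumptions made for illustration.

```python
import hashlib
from datetime import datetime, timezone
from typing import Dict, List

REQUIRED_FIELDS = ("title", "body", "meta_description", "canonical_url", "category", "author")


def missing_fields(post: Dict[str, str]) -> List[str]:
    """List required fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not post.get(name)]


def publish(post: Dict[str, str], approved_by: str, changelog: List[dict]) -> dict:
    """Refuse to ship without complete metadata and a named approver, then log a version."""
    gaps = missing_fields(post)
    if gaps:
        raise ValueError(f"missing fields: {', '.join(gaps)}")
    if not approved_by:
        raise PermissionError("final human approval is required")
    entry = {
        "version": len(changelog) + 1,
        "approved_by": approved_by,
        # A content hash lets you confirm later which text was approved.
        "content_sha256": hashlib.sha256(post["body"].encode("utf-8")).hexdigest(),
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }
    changelog.append(entry)
    return entry  # the actual hand-off to a CMS would happen after this point


post = {
    "title": "AI marketing agents explained",
    "body": "Final article text.",
    "meta_description": "A plain guide to AI marketing agents.",
    "canonical_url": "https://example.com/ai-marketing-agents",
    "category": "SEO",
    "author": "Editorial team",
}
changelog: List[dict] = []
print(publish(post, approved_by="editor", changelog=changelog)["version"])
```

Raising an error instead of silently skipping the gate makes a missing approval visible in logs, which is what an audit trail is for.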

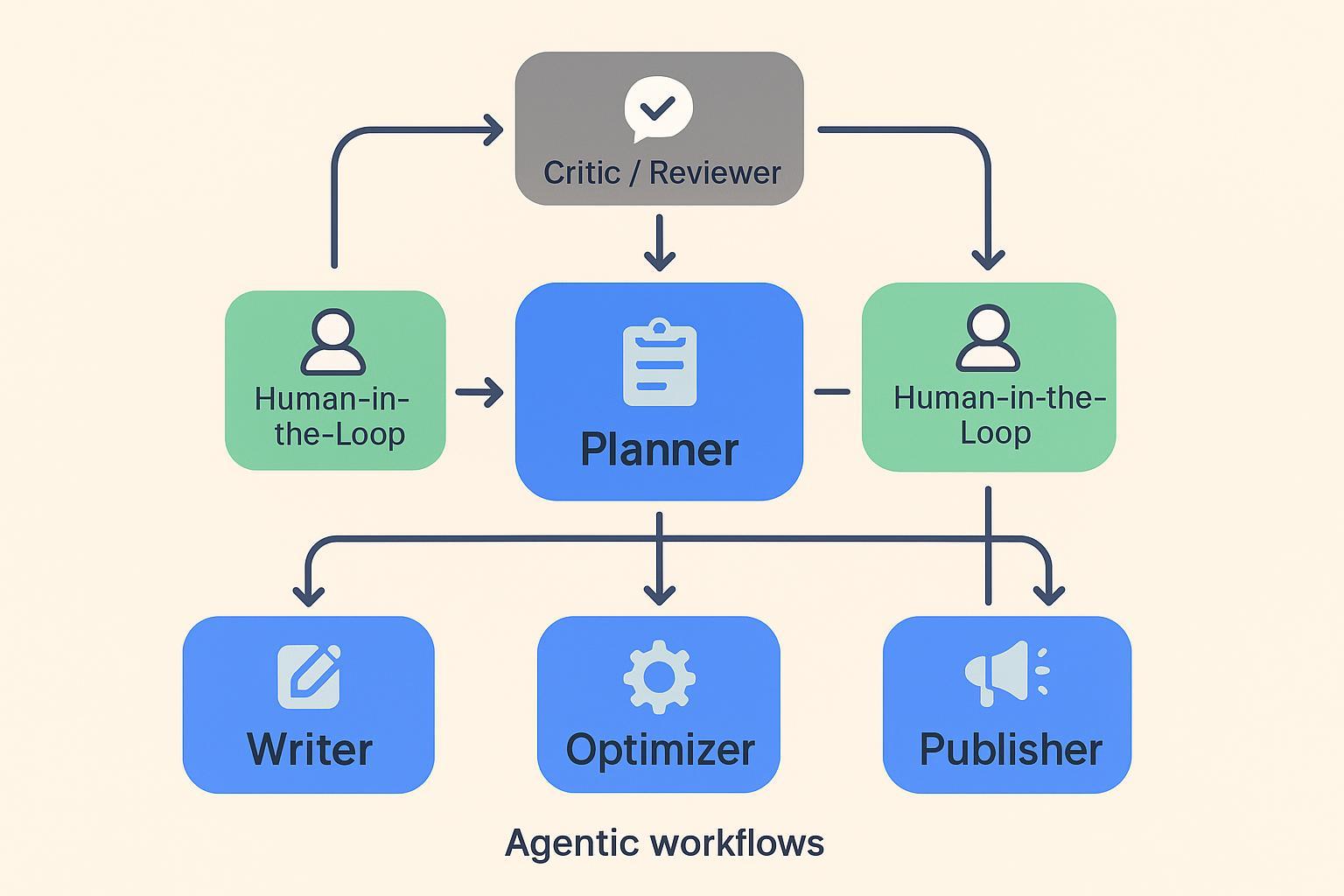

The control loop behind agentic workflows

Caption: Planner → Executors (Writer, Optimizer, Publisher) → Critic/Reviewer loop, with HITL approval gates before drafting and publishing, and memory/persistence storing decisions.
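The executor and critic part of this loop can be sketched in a few lines. The example below assumes a simple contract: the executor receives the task plus the critic's latest notes, the critic returns a list of issues, and the loop escalates to a person after a fixed number of rounds. The `writer` and `critic` functions are stubs standing in for LLM-backed agents.

```python
from typing import Callable, List, Tuple


def run_with_critic(
    execute: Callable[[str, List[str]], str],
    critique: Callable[[str], List[str]],
    task: str,
    max_rounds: int = 3,
) -> Tuple[str, int, bool]:
    """Alternate executor and critic; return (output, rounds used, needs_human)."""
    feedback: List[str] = []
    output = ""
    for round_no in range(1, max_rounds + 1):
        output = execute(task, feedback)  # executor sees the critic's last notes
        feedback = critique(output)       # empty list means the critic is satisfied
        if not feedback:
            return output, round_no, False
    return output, max_rounds, True       # still failing: escalate to a person


# Stub executor: drops the hype word once the critic has complained about it.
def writer(task: str, feedback: List[str]) -> str:
    text = f"A revolutionary guide to {task}."
    if "avoid hype" in feedback:
        text = text.replace("revolutionary", "practical")
    return text


# Stub critic: flags a single banned word.
def critic(text: str) -> List[str]:
    return ["avoid hype"] if "revolutionary" in text else []


print(run_with_critic(writer, critic, "AI marketing agents"))
```

Capping the rounds matters in practice: without a limit, an executor and critic that disagree can loop indefinitely, so the cap turns a stalemate into a human review.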

Agent-to-output mapping (quick reference)

| Agent/role | Key inputs | Expected outputs | Quality checks |
|---|---|---|---|
| Researcher | ICP, seed keywords, competitor URLs, brand priorities | Prioritized keywords with intent notes and SERP features | Human sanity check; intent coverage over volume |
| Briefing | Target keyword, approved sources, brand voice rules | Content brief with H1–H3s, FAQs, citations, meta targets | Source credibility; HITL approval before draft |
| Writer | Approved brief, voice guide, private KB snippets | First draft with cited claims and image/alt suggestions | Brand voice enforcement; factual grounding; human edit |
| Reviewer/Critic | Draft and brief | Issues list and suggested edits | Style and SEO checks; LLM-as-judge + human review |
| Optimizer | Edited draft, on-page checklist, link map | Improved headings, meta, links; schema suggestions | Avoid keyword stuffing; readability and accessibility |
| Publisher | Final content, metadata, assets | CMS-ready post, schedule, version log | Final HITL approval; analytics tracking set |

Practical micro-example: brand voice enforcement in Draft → Review

During the handoff from the Writer to the Reviewer, a Brand Intelligence agent scans the draft for tone, terminology, and structure. It flags phrases that don’t match your style guide (for instance, replacing “AI wizardry” with a more grounded term), enforces preferred vocabulary, and suggests line edits. Retrieval from your private knowledge base ensures product facts remain accurate. One platform that can be used to orchestrate this brand voice enforcement is QuickCreator’s Brand Intelligence Agent, which works alongside private-knowledge-base grounding; human approval remains required before publishing.
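A rule-based slice of this check can be sketched as a phrase map applied to the draft. This is an illustration only and does not describe how QuickCreator or any other product works: the `STYLE_RULES` entries, including the replacement chosen for “AI wizardry”, are hypothetical style-guide values.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical style-guide entries: discouraged phrase -> preferred wording.
STYLE_RULES: Dict[str, str] = {
    "AI wizardry": "AI automation",
    "game-changing": "useful",
}


def enforce_voice(text: str, rules: Dict[str, str]) -> Tuple[str, List[Tuple[str, str]]]:
    """Replace discouraged phrases; return the edited text and (found, replacement) flags."""
    flags: List[Tuple[str, str]] = []
    for banned, preferred in rules.items():
        pattern = re.compile(re.escape(banned), re.IGNORECASE)
        for match in pattern.finditer(text):
            flags.append((match.group(0), preferred))
        # A function replacement keeps the preferred wording literal.
        text = pattern.sub(lambda _match, repl=preferred: repl, text)
    return text, flags


edited, flags = enforce_voice("Our AI wizardry is game-changing.", STYLE_RULES)
print(edited)
print(flags)
```

Returning the flags alongside the edited text lets a human editor see what changed and reject a substitution that does not fit. Tone and structure checks are harder to express as rules and are better suited to an LLM reviewer with the style guide in context.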

For background on brand style guidance and AI content governance, see the internal primer “Comprehensive guide to AI-generated content and brand style.”

Governance, safety, and evaluation you shouldn’t skip

HITL approvals at critical stages (before drafting and before publishing) reduce risk and improve quality.

Require citations for factual claims, prefer primary sources, and keep link density reasonable.

Use critic/reviewer loops for style and SEO checks, and log versions for auditability. For practical patterns, see Vellum’s production design patterns and the framework docs above.

Follow Google’s guidance to keep AI-generated content people-first and compliant with spam policies.

Next steps and further learning

Beginner fundamentals: IBM on agentic workflows; MIT Sloan’s agentic AI explainer; Moveworks on agents vs. agentic systems.

Frameworks to explore: LangGraph (LangChain) stateful graphs and Microsoft AutoGen teams.

Optional: If you’re evaluating orchestration platforms for multi-agent SEO pipelines, you can also explore QuickCreator to see how brand voice enforcement and knowledge grounding fit into a governed workflow.