Agentic marketing: the clear B2B SaaS definition

A clear definition of agentic marketing for B2B SaaS: how AI agents differ from automation, a PLG workflow example, a glossary, governance, and an SMB adoption roadmap.

Agentic marketing is a coordinated system of specialized AI agents that plan, execute, and continually learn across channels to achieve marketing goals—operating autonomously within human‑in‑the‑loop governance and brand guardrails.

What makes this different from a rules engine? Instead of waiting for fixed triggers to fire prewritten actions, an agentic system reasons about objectives, proposes plans, chooses the right tools, and adapts based on live signals. Humans don’t disappear; they move up the stack to set goals, define guardrails, approve inflection points, and provide feedback that trains the system. Think of it as a cross‑channel “autonomous campaign manager” that applies your brand voice consistently, experiments within agreed risk limits, and records the rationale behind its decisions.

Why now for B2B SaaS? Teams are asked to ship more with fewer hands, while product‑led growth (PLG), sales‑assisted motions, and SEO‑led demand all require tighter orchestration. Analyst frameworks describe organizations shifting toward agent‑native operating models where AI agents work alongside people under clear governance; see the discussion of agentic organizations in McKinsey’s overview of agentic operating models and the move to multi‑agent orchestration in Deloitte’s Tech Trends 2026.

Agentic marketing vs. traditional marketing automation

Traditional marketing automation runs predefined workflows: a form submission adds a lead to a list, triggers a nurture email, applies a score, and routes to sales. It’s powerful, but it rarely adapts unless a human rewrites the journey. Vendor docs make this clear; for example, Salesforce’s “What is Marketing Automation?” guide and HubSpot’s workflow documentation emphasize event‑ and rule‑triggered actions.

Agentic marketing raises the bar:

Initiative: Automation waits for triggers; agentic systems propose and revise plans to hit goals.

Reasoning: Automation follows static rules; agentic systems evaluate options and choose tools/paths.

Adaptation: Automation changes when reprogrammed; agentic systems adjust in near‑real time from signals.

Scope: Automation is channel/task‑bound; agentic orchestration spans tools, channels, and teams.

Learning: Automation mostly reports; agentic systems update working models and policies.

Human role: Automation treats humans as operators; agentic systems elevate them to strategists and governors, with approval gates.

The result is not “set and forget.” It’s continuous planning → execution → learning, inside clear guardrails.
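To make the contrast concrete, here is a minimal Python sketch. The rule-based function maps one trigger to a fixed action list; the agentic version scores candidate actions against a goal and skips any that exceed a risk limit, returning `None` so a human can decide. All names, the `expected_lift` and `risk` estimates, and the 0.3 risk threshold are hypothetical; in a real system those estimates would come from analytics and guardrail agents.

```python
from __future__ import annotations

from dataclasses import dataclass


def rule_based_on_form_submit(lead: dict) -> list[str]:
    """Traditional automation: one trigger, a fixed action list."""
    actions = ["add_to_list:nurture", "send_email:welcome", "apply_score:+10"]
    if lead.get("company_size", 0) >= 200:  # hypothetical routing rule
        actions.append("route_to_sales")
    return actions


@dataclass
class Candidate:
    action: str
    expected_lift: float  # hypothetical estimate of impact on the goal metric
    risk: float           # hypothetical 0..1 brand/compliance risk score


def next_best_action(candidates: list[Candidate], max_risk: float = 0.3) -> str | None:
    """Agentic choice: pick the highest-lift action that stays inside the risk limit."""
    allowed = [c for c in candidates if c.risk <= max_risk]
    if not allowed:
        return None  # nothing safe to do autonomously; escalate to a human
    return max(allowed, key=lambda c: c.expected_lift).action


if __name__ == "__main__":
    print(rule_based_on_form_submit({"company_size": 500}))
    options = [
        Candidate("send_email:welcome", expected_lift=0.02, risk=0.1),
        Candidate("in_app:product_tour", expected_lift=0.05, risk=0.1),
        Candidate("in_app:pricing_prompt", expected_lift=0.09, risk=0.6),
    ]
    print(next_best_action(options))  # in_app:product_tour
```

The pricing prompt has the highest estimated lift but exceeds the risk limit, so the product tour wins. The rule-based output changes only when someone edits the function; the agentic output changes whenever the estimates change.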

How an agentic system works (planner → execution → learning)

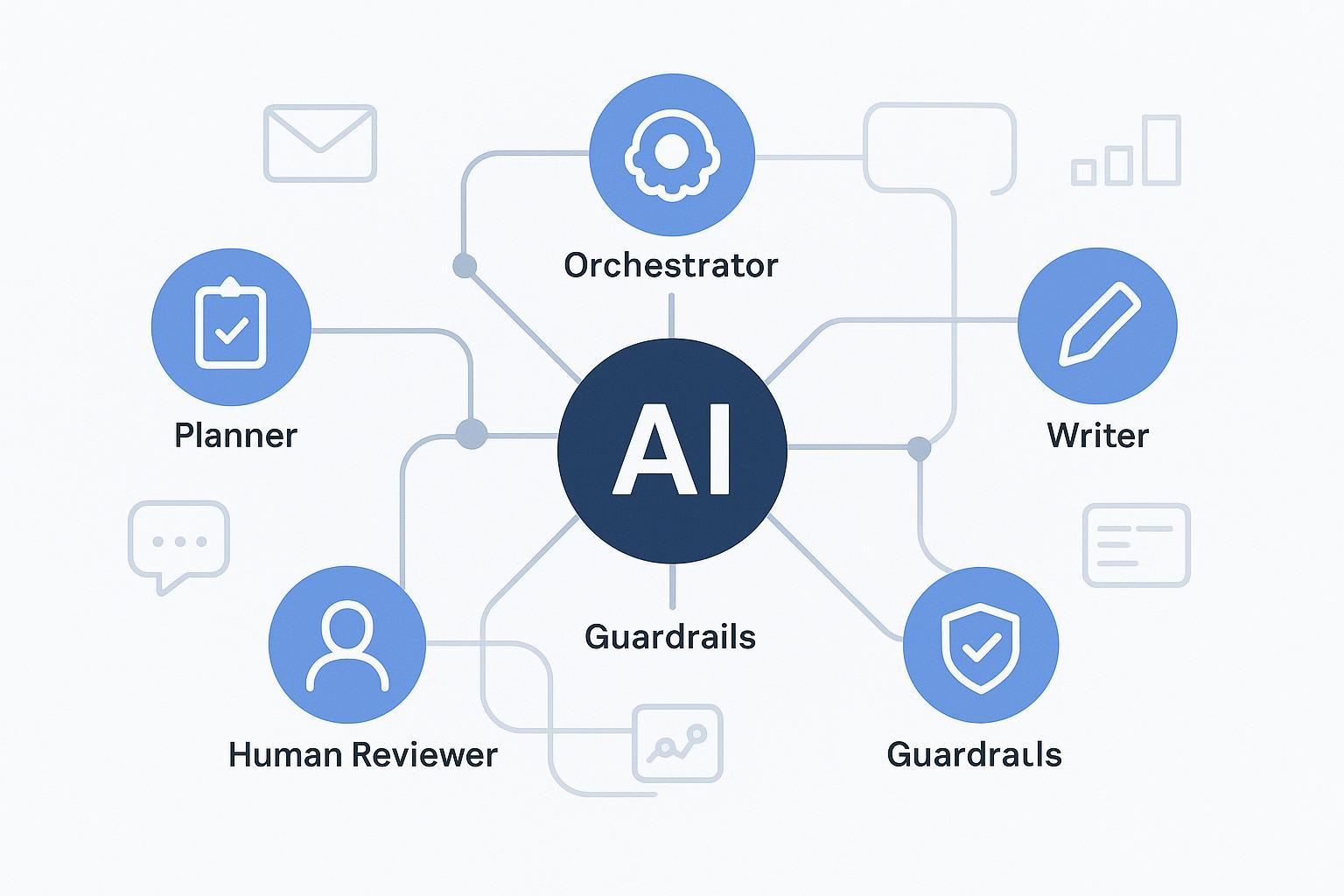

At the core are cooperating agents with distinct jobs. A planner proposes a strategy for the objective (e.g., “increase activation for new signups”). An orchestrator sequences tools (product analytics, messaging, CMS). Creator agents draft in‑app copy, emails, and posts grounded in brand knowledge. Optimizer and analytics agents read outcomes, design experiments, and feed learnings back to the planner. Guardrails enforce policy, style, and compliance, handing off to a human when needed.
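The division of labor among these roles can be sketched as plain functions wired into one cycle. This is a toy under stated assumptions: the role functions, the keyword-based guardrail, and the activation target are hypothetical, and none of the names come from a specific framework. A real system would call an LLM for drafting, a policy engine for guardrails, and product analytics for outcomes.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical compliance policy: phrases that must never ship without review.
BLOCKED_PHRASES = ("guaranteed roi", "100% secure")


@dataclass
class Step:
    segment: str
    channel: str
    draft: str = ""
    status: str = "planned"


def planner(objective: str, segments: list[str]) -> list[Step]:
    """Propose one onboarding touch per segment for the objective."""
    return [Step(segment=s, channel="in_app" if s == "self_serve" else "email") for s in segments]


def creator(step: Step, brand_voice: str) -> Step:
    """Draft copy; a real creator agent would ground this in brand and product facts."""
    step.draft = f"({brand_voice}) Welcome aboard. Here is the fastest path to your first report."
    return step


def guardrail(step: Step) -> Step:
    """Approve clean drafts; hand anything matching the policy to a human."""
    text = step.draft.lower()
    step.status = "needs_human" if any(p in text for p in BLOCKED_PHRASES) else "approved"
    return step


def orchestrator(objective: str, segments: list[str], brand_voice: str) -> list[Step]:
    """Sequence planner -> creator -> guardrail for every planned step."""
    return [guardrail(creator(step, brand_voice)) for step in planner(objective, segments)]


def optimizer(activation_by_segment: dict[str, float], target: float) -> list[str]:
    """Return segments below target so the planner revisits them next cycle."""
    return sorted(seg for seg, rate in activation_by_segment.items() if rate < target)


if __name__ == "__main__":
    steps = orchestrator("increase activation for new signups",
                         ["self_serve", "sales_assisted"], "plain, helpful")
    for s in steps:
        print(s.segment, s.channel, s.status)
    print(optimizer({"self_serve": 0.31, "sales_assisted": 0.18}, target=0.25))  # ['sales_assisted']
```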

Modern multi‑agent frameworks describe these patterns well. Microsoft’s AutoGen documentation covers manager‑worker and reflection patterns for agents that converse, plan, and use tools. Safety layers such as NVIDIA NeMo Guardrails’ integration with LangGraph/LangChain show how to enforce input/output policies, tool permissions, and human‑in‑the‑loop checkpoints. Organizationally, McKinsey’s “agentic organization” write‑up frames how teams govern autonomy and accountability.

Two signals make a system truly “agentic”: cross‑tool reasoning (agents pick the next best action, not just the next step) and continuous learning (models and policies update from cohort outcomes).
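Continuous learning can be as simple as a policy whose choices shift as cohort outcomes arrive. The sketch below uses Thompson sampling over onboarding variants, a standard bandit technique swapped in here for illustration rather than a method prescribed by the frameworks above; the variant names and cohort counts are hypothetical.

```python
from __future__ import annotations

import random
from dataclasses import dataclass


@dataclass
class Arm:
    activated: int = 0
    not_activated: int = 0


class ThompsonPolicy:
    """Beta-Bernoulli Thompson sampling over onboarding variants."""

    def __init__(self, variants: list[str], seed: int | None = None) -> None:
        self.arms = {v: Arm() for v in variants}
        self.rng = random.Random(seed)

    def choose(self) -> str:
        # Draw a plausible activation rate per variant from its Beta(1 + wins, 1 + losses)
        # posterior and serve the variant with the highest draw.
        draws = {
            v: self.rng.betavariate(a.activated + 1, a.not_activated + 1)
            for v, a in self.arms.items()
        }
        return max(draws, key=draws.get)

    def update(self, variant: str, activated: int, cohort_size: int) -> None:
        # Fold one cohort's outcome back into the policy.
        arm = self.arms[variant]
        arm.activated += activated
        arm.not_activated += cohort_size - activated

    def posterior_mean(self, variant: str) -> float:
        a = self.arms[variant]
        return (a.activated + 1) / (a.activated + a.not_activated + 2)


if __name__ == "__main__":
    policy = ThompsonPolicy(["tour_first", "checklist_first"], seed=7)
    policy.update("tour_first", activated=30, cohort_size=200)
    policy.update("checklist_first", activated=60, cohort_size=200)
    picks = [policy.choose() for _ in range(1000)]
    print(picks.count("checklist_first") / len(picks))
```

Because choices are sampled rather than fixed, a weaker variant still gets occasional traffic while evidence is thin, and that traffic shrinks as cohorts accumulate.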

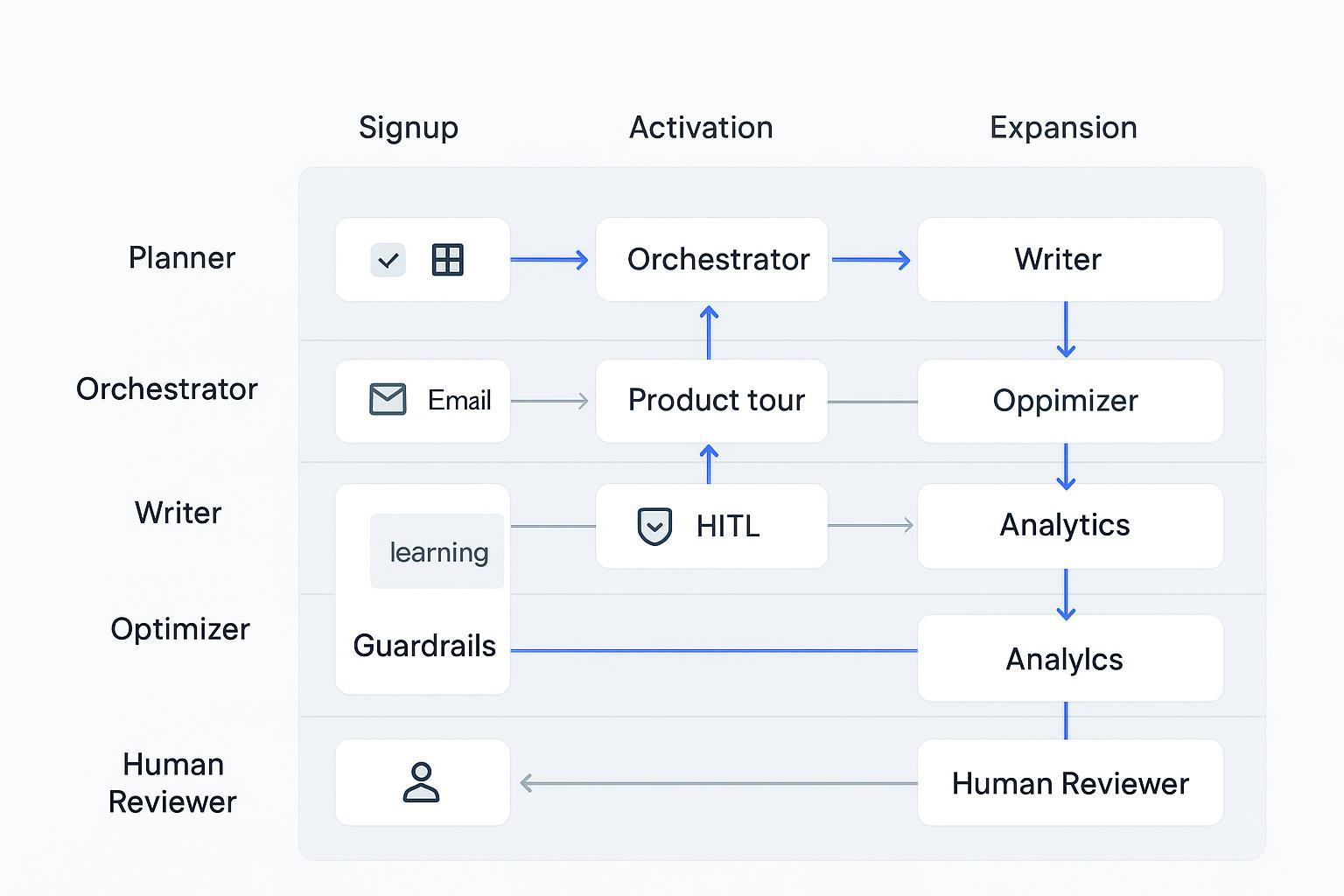

PLG hero workflow — signup to activation to expansion

The clearest place to see agentic marketing at work is a PLG lifecycle: signup → activation → expansion. The swimlane diagram above shows the agents, human gates, and feedback loops.

Here’s how the loop runs:

Signup: The planner reads product analytics and CRM traits to propose onboarding variants by segment. The orchestrator wires in‑app prompts, a product tour, and a welcome email. The writer generates copy grounded in brand voice and product facts. Guardrails check tone, claims, and compliance; high‑impact changes route to a human for approval.

Activation: As users progress, analytics agents monitor key events (first value, feature adoption). The optimizer suggests micro‑experiments (e.g., reorder steps, rewrite tooltips) and runs safe A/B or switchback tests with defined risk thresholds. Approved variants go live; results feed back to the planner.

Expansion: When usage crosses PQL thresholds or a pattern suggests upsell/cross‑sell, the system proposes targeted prompts, in‑app guides, or educational content. Humans review pricing‑adjacent nudges before rollout. Cohort outcomes update the policies for the next cycle.
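The expansion step hinges on two checks: whether an account qualifies as a product-qualified lead (PQL), and whether the proposed nudge is pricing-adjacent and therefore needs human review. A minimal sketch, assuming hypothetical PQL thresholds and nudge names; real criteria depend on your product's usage data.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical PQL criteria: every threshold must be met.
PQL_THRESHOLDS = {"seats_invited": 3, "key_feature_uses": 10}
# Nudges that touch pricing always go to a human before rollout.
PRICING_ADJACENT = {"seat_expansion_offer", "plan_upgrade_prompt"}


@dataclass
class AccountUsage:
    account_id: str
    seats_invited: int
    key_feature_uses: int


def is_pql(usage: AccountUsage) -> bool:
    return all(getattr(usage, metric) >= minimum for metric, minimum in PQL_THRESHOLDS.items())


def propose_expansion(usage: AccountUsage) -> dict | None:
    if not is_pql(usage):
        return None
    # Team growth suggests a seat offer; otherwise lead with educational content.
    nudge = "seat_expansion_offer" if usage.seats_invited >= 5 else "advanced_feature_guide"
    gate = "human_review" if nudge in PRICING_ADJACENT else "auto"
    return {"account_id": usage.account_id, "nudge": nudge, "gate": gate}


if __name__ == "__main__":
    for acct in [
        AccountUsage("acme", seats_invited=6, key_feature_uses=14),
        AccountUsage("globex", seats_invited=3, key_feature_uses=10),
        AccountUsage("initech", seats_invited=1, key_feature_uses=40),
    ]:
        print(acct.account_id, propose_expansion(acct))
```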

PLG metrics and definitions for activation and PQL are well covered by growth sources such as Amplitude’s guides to product‑led growth and activation.

A neutral example: platforms like QuickCreator can support parts of this loop by grounding brand voice and ideal customer profiles (ICPs) and coordinating planner/writer/orchestrator behavior with human approval gates. For instance, brand voice and product facts can be codified once using the QuickCreator Brand Intelligence Agent, while planning and drafting align content to journey stages, as described in the overview of its agentic pipeline on the QuickCreator home page. These touches reduce rework while keeping humans in control.

Governance and risk controls you actually need

Agentic systems only earn trust when governance is explicit. Start with a policy that defines autonomy levels (assisted, delegated, autonomous‑within‑guardrails), approval gates, and escalation routes. Require decision logs and rationale traces for observability and post‑hoc review. Treat PII and consent rigorously.

Two credible anchors are useful here. The NIST AI Risk Management Framework, which is voluntary guidance, recommends lifecycle oversight with human‑AI teaming and evaluation. Deloitte’s Tech Trends 2026 discusses agent‑native operating models and the need for cost controls, autonomy levels, and workforce‑style orchestration.

Practical checklist to implement now: define which changes require human approval; enforce brand/compliance guardrails for all outbound content; log every agent decision that affects customers; and maintain rollback procedures.
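The checklist maps naturally onto a small policy object: autonomy levels decide which changes need approval, and an append-only decision log records the rationale and the prior value so any change can be rolled back. This is a sketch under assumptions: the three levels mirror the ones named above, but the change categories, risk labels, and field names are hypothetical.

```python
from __future__ import annotations

import json
import time
from dataclasses import asdict, dataclass, field
from enum import IntEnum


class Autonomy(IntEnum):
    ASSISTED = 1                      # every outbound change needs approval
    DELEGATED = 2                     # only low-risk changes ship without approval
    AUTONOMOUS_WITHIN_GUARDRAILS = 3  # only high-impact changes need approval


HIGH_IMPACT = {"pricing_prompt", "new_onboarding_flow"}  # hypothetical categories


def requires_approval(level: Autonomy, change_type: str, risk: str) -> bool:
    if level == Autonomy.ASSISTED or change_type in HIGH_IMPACT:
        return True
    if level == Autonomy.DELEGATED:
        return risk != "low"
    return False


@dataclass
class Decision:
    agent: str
    change_type: str
    rationale: str
    previous_value: str
    new_value: str
    approved_by: str | None = None
    timestamp: float = field(default_factory=time.time)


class DecisionLog:
    """Append-only log; each entry keeps what it replaced so it can be undone."""

    def __init__(self) -> None:
        self._entries: list[Decision] = []

    def record(self, decision: Decision) -> str:
        self._entries.append(decision)
        return json.dumps(asdict(decision))  # one JSON line, ready for a log store

    def rollback_value(self, change_type: str) -> str | None:
        # Value to restore: what the most recent change of this type replaced.
        for decision in reversed(self._entries):
            if decision.change_type == change_type:
                return decision.previous_value
        return None


if __name__ == "__main__":
    print(requires_approval(Autonomy.DELEGATED, "tooltip_copy", "low"))    # False
    print(requires_approval(Autonomy.DELEGATED, "pricing_prompt", "low"))  # True
    log = DecisionLog()
    print(log.record(Decision("optimizer", "tooltip_copy",
                              "variant B lifted step-2 completion", "Old tip", "New tip")))
    print(log.rollback_value("tooltip_copy"))  # Old tip
```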

Crawl → Walk → Run: an SMB adoption roadmap

Crawl (Assisted): Let planner/writer agents propose drafts and onboarding variants; require human‑in‑the‑loop (HITL) approval for every outbound message. Integrate the minimum stack (product analytics, email, CMS). Capture essential telemetry: signup source, onboarding steps, feature events, cohort tags.

Walk (Delegated): Allow the orchestrator to run low‑risk A/B or switchback tests (e.g., tooltip copy). Add RAG grounding for brand/product facts. Keep guardrails on by default. Log decisions and outcomes.

Run (Autonomous within guardrails): Delegate weekly cohort optimization with policy‑gated approvals for high‑impact changes (pricing prompts, new onboarding flows). Expand patterns to sales‑assisted/ABM and SEO‑led motions. Maintain an experiment registry and dashboards.
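The Crawl-stage telemetry (signup source, onboarding steps, feature events, cohort tags) is what every later stage depends on. A minimal sketch of one event record and a helper that derives time-to-activation from it, assuming a hypothetical `first_value` activation event and epoch-second timestamps:

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    user_id: str
    name: str           # e.g. "signup", "onboarding_step_2", "first_value"
    ts: float           # epoch seconds
    signup_source: str  # e.g. "organic_search", "paid_social"
    cohort: str         # e.g. "w18_self_serve"


def hours_to_activation(events: list[Event], activation_event: str = "first_value") -> dict[str, float]:
    """Hours from each user's first signup to their first activation event (activated users only)."""
    signup: dict[str, float] = {}
    activated: dict[str, float] = {}
    for ev in sorted(events, key=lambda item: item.ts):
        if ev.name == "signup":
            signup.setdefault(ev.user_id, ev.ts)
        elif ev.name == activation_event:
            activated.setdefault(ev.user_id, ev.ts)
    return {u: (activated[u] - signup[u]) / 3600 for u in activated if u in signup}
```

Users without a signup event are skipped rather than guessed at, which keeps the metric honest when telemetry is incomplete.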

Glossary — agentic marketing terms you’ll see

Agentic AI / AI agent: Autonomous software that plans, acts, and learns with properties like reactivity and proactiveness; applied to marketing for cross‑tool execution.

Orchestrator: The coordinator that sequences agents and tools to achieve an objective.

Planner / Executor / Reviewer: Distinct agent roles for plan generation, tool use, and quality/safety checks.

Tool use: Structured function or API calls that let agents act in CRM, email, analytics, CMS, and more.

RAG (Retrieval‑Augmented Generation): Retrieving relevant brand/product facts and prior performance data at generation time so that content is grounded in them; often seeded by a brand intelligence layer such as the QuickCreator Brand Intelligence Agent.

Guardrails / Policies: Safety and compliance constraints that validate inputs/outputs and control tool access.

HITL (Human‑in‑the‑loop): Human oversight with defined approval checkpoints and overrides.

Autonomy levels: Graduated independence from assisted to autonomous‑within‑guardrails.

Feedback loop: Telemetry‑driven learning that updates plans/policies from cohort outcomes.

Observability / Audit trail: Decision logs and rationale traces to enable governance and rollback.

GEO (Generative Engine Optimization): Structuring content so it appears accurately in AI‑generated answers; see the foundational framing in Aggarwal et al.’s Generative Engine Optimization paper.

Measurement that proves progress

Focus on cohort‑level outcomes tied to PLG: activation rate, time‑to‑activation, PQL volume/rate, conversion to paid, and expansion triggers handled. To prove agentic lift vs. a rule‑based baseline, use A/B or switchback tests so seasons and traffic shifts don’t skew results. For definitions and benchmarks around activation and PLG motion design, Amplitude’s PLG resources are a helpful starting point.
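To judge whether an agentic arm beat the rule-based baseline on activation rate, a two-proportion z-test is a common first check. The sketch below is standard statistics rather than a method from the sources cited here, and the counts in the example are hypothetical. It assumes users are independent; for switchback tests, where whole time periods are assigned to one arm, analyze per-period rates instead, because users within a period are not independent.

```python
from __future__ import annotations

import math


def activation_lift(baseline_activated: int, baseline_n: int,
                    agentic_activated: int, agentic_n: int) -> tuple[float, float, float]:
    """Return (absolute lift, z statistic, two-sided p-value) for agentic vs. baseline."""
    p_base = baseline_activated / baseline_n
    p_agent = agentic_activated / agentic_n
    # Pooled rate under the null hypothesis that both arms activate equally.
    pooled = (baseline_activated + agentic_activated) / (baseline_n + agentic_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / baseline_n + 1 / agentic_n))
    z = (p_agent - p_base) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return p_agent - p_base, z, p_value


if __name__ == "__main__":
    lift, z, p = activation_lift(400, 2000, 460, 2000)
    print(f"lift={lift:.3f} z={z:.2f} p={p:.3f}")  # lift=0.030 z=2.31 p=0.021
```

A three-point lift on 2,000 users per arm clears the conventional 0.05 bar; the same lift on a few hundred users per arm would not, which is why cohort size belongs in the experiment plan.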

Diagnostics matter too: track drop‑offs in onboarding steps, response rates by cohort, model/tool error rates, frequency of human interventions, and policy violation rates. If the system learns, these numbers should improve over time.
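Two of these diagnostics, human-intervention rate and policy-violation rate, fall straight out of a decision log. A sketch assuming each logged decision is a dict with hypothetical `week`, `human_override`, and `policy_violation` fields; the trend check simply asks whether both rates are non-increasing week over week.

```python
from __future__ import annotations

from collections import defaultdict


def weekly_rates(decisions: list[dict]) -> dict[int, dict[str, float]]:
    """Per-week share of decisions a human overrode and share that tripped a policy."""
    counts = defaultdict(lambda: {"total": 0, "overrides": 0, "violations": 0})
    for d in decisions:
        c = counts[d["week"]]
        c["total"] += 1
        c["overrides"] += int(d["human_override"])
        c["violations"] += int(d["policy_violation"])
    return {
        week: {
            "intervention_rate": c["overrides"] / c["total"],
            "violation_rate": c["violations"] / c["total"],
        }
        for week, c in sorted(counts.items())
    }


def is_improving(rates: dict[int, dict[str, float]]) -> bool:
    """True if neither rate rises from one week to the next."""
    weeks = sorted(rates)
    return all(
        rates[b][k] <= rates[a][k]
        for a, b in zip(weeks, weeks[1:])
        for k in ("intervention_rate", "violation_rate")
    )
```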

Where this is heading (and how to start)

The ecosystem is maturing quickly. Open frameworks such as Microsoft AutoGen, stateful graph libraries such as LangGraph, and safety layers like NVIDIA NeMo Guardrails make it practical to pilot agentic workflows without locking into a single vendor. Start with one PLG objective (e.g., activation for a key segment), define your autonomy levels and HITL gates, and run a 4–6‑week experiment.

If you want an agentic pipeline that already bakes in brand grounding, planning, writing, optimization, and human approvals, consider piloting with QuickCreator on a narrow PLG use case before expanding.