Agentic AI Marketing for SMBs: A Plain-English Primer

A beginner’s primer on agentic AI marketing: what it is, how it differs from chatbots and simple automation, and an SMB-ready SEO blog pipeline with human-in-the-loop (HIL) guardrails.

If you remember just one thing, make it this: agentic AI is not a smarter chatbot. It’s a goal-driven system that can plan multi-step work, call tools and data sources, observe results, and adapt within human-defined guardrails. That’s the core idea in NVIDIA’s overview of agentic AI (2024) and IBM’s topic page on agentic AI (2025), and it is echoed in MIT Sloan’s explainer (2026).

What agentic AI is (and what it isn’t)

Agentic AI marketing uses specialized AI “agents” to pursue a marketing goal. They plan, take actions through connected tools (like your CMS or analytics), evaluate outcomes, and improve through feedback loops, all within the permissions and policies you set. It isn’t the same as a reactive chatbot, and it isn’t just a fixed if-this-then-that automation. According to MIT Sloan’s explainer (2026), the key difference is autonomy combined with tool use; IBM and NVIDIA also emphasize planning and iterative adaptation.

| Capability | Agentic AI | Chatbots/AI writers | Simple automation |
|---|---|---|---|
| Autonomy | Pursues goals with limited supervision | Responds to prompts reactively | Runs on rigid triggers |
| Planning | Decomposes tasks, replans on the fly | No independent plan; prompt-by-prompt | No reasoning or replanning |
| Tool use | Calls APIs/connectors (CMS, analytics, email) | Mostly text generation | Connectors fire on rules only |
| Error recovery | Evaluation loops, escalation to humans | Manual retries | Fails or needs manual fixes |

The architecture behind agentic AI marketing (in plain English)

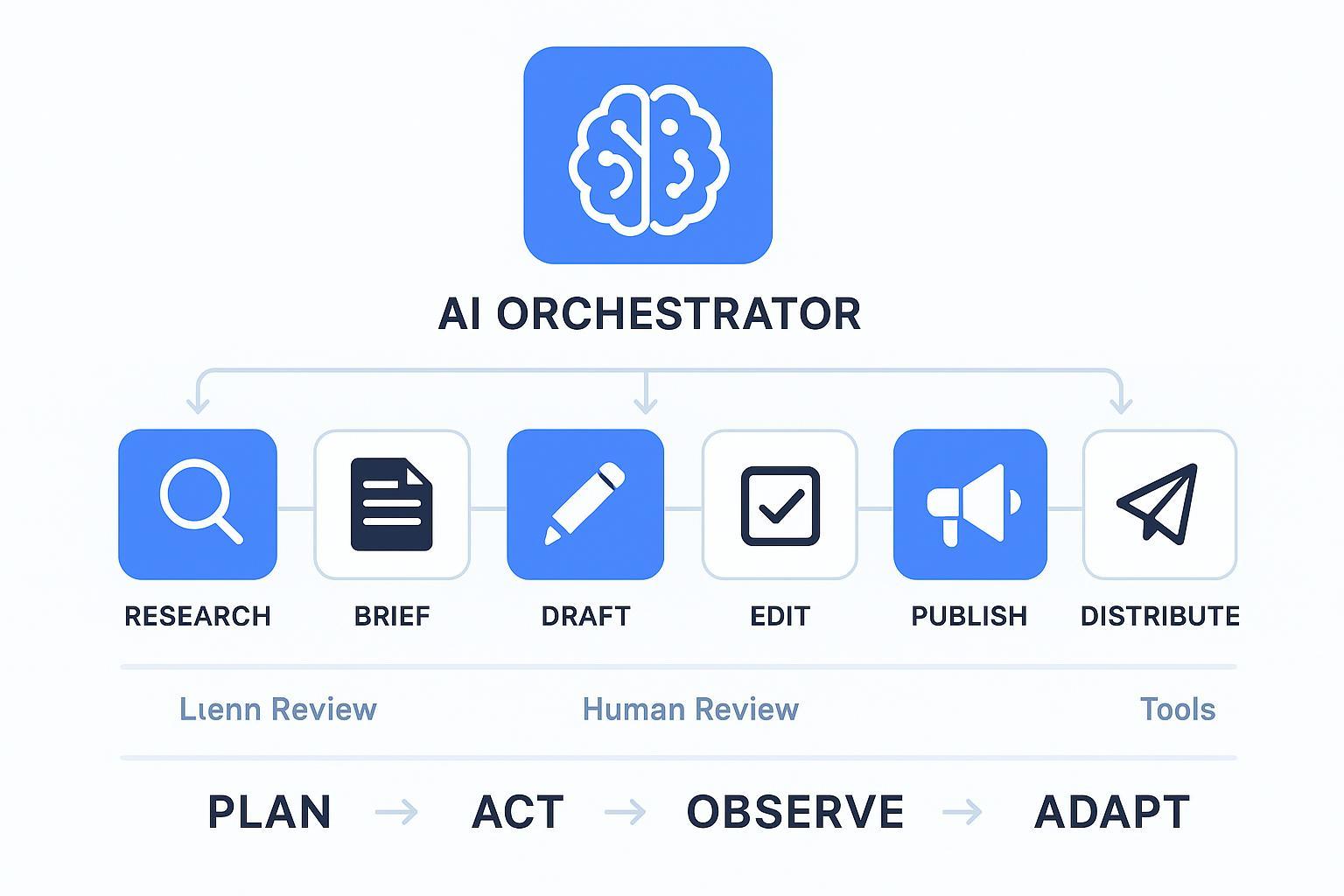

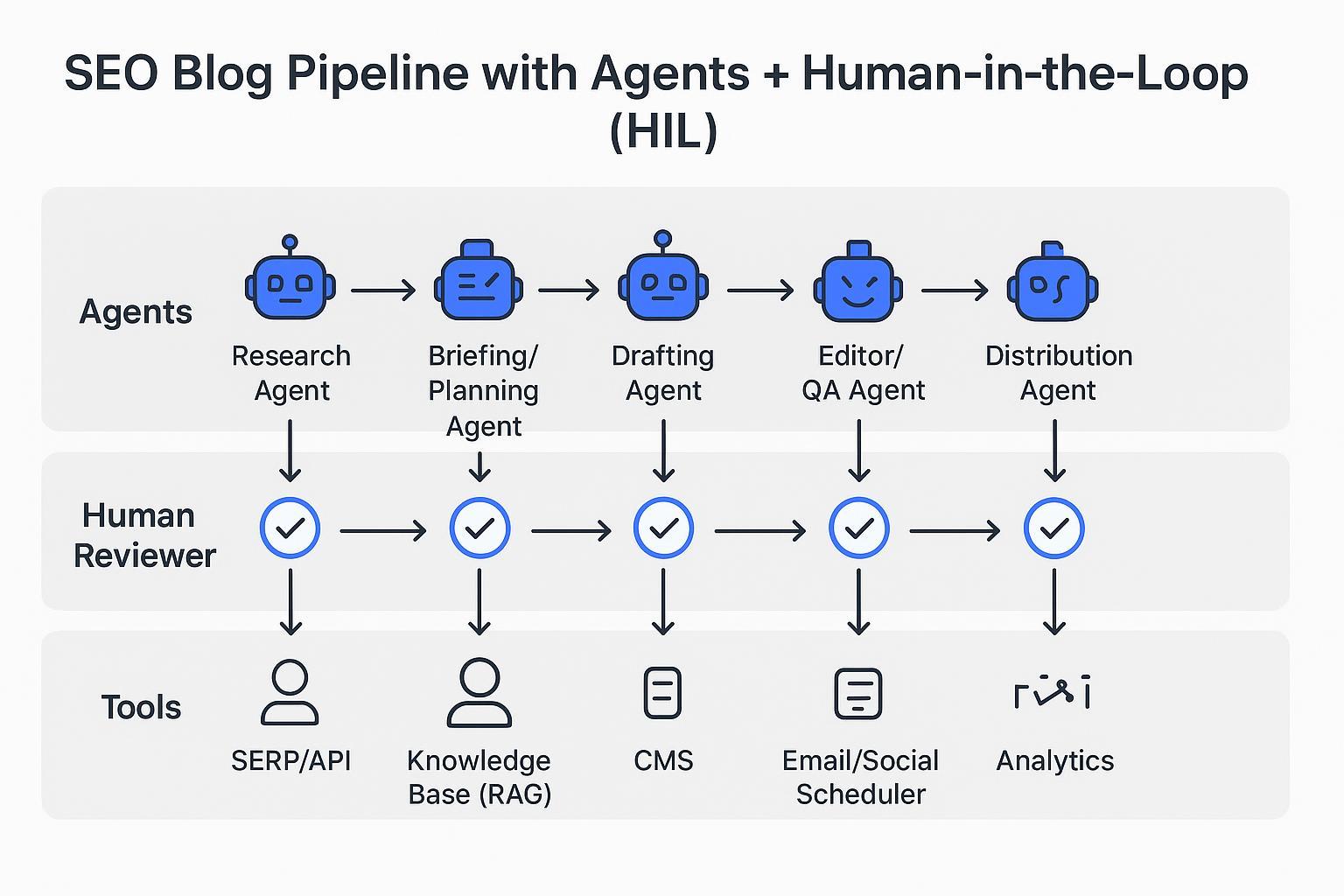

Think of an agentic setup as a small production crew. An orchestrator (the producer) coordinates specialized roles: a Research Agent, a Briefing/Planning Agent, a Drafting Agent, an Editor/QA Agent, and a Distribution Agent. They share memory (past work, brand info) and can fetch facts via retrieval-augmented generation (RAG). They act through tool connectors to your CMS, SEO plugin, analytics, email, and social scheduler. Critically, evaluation loops and human-in-the-loop checkpoints make sure sensitive steps get reviewed. This aligns with the definitions and blueprints in the NVIDIA and MIT Sloan sources cited above.
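For readers who think in code, here is a minimal Python sketch of the producer-and-crew idea: an orchestrator runs specialized agents in order and writes each result to shared memory. The agent functions, the `orchestrate` helper, and the memory keys are hypothetical placeholders; in a real setup each agent would call a language model and tool connectors instead of formatting strings.

```python
from typing import Callable

# Hypothetical shared memory: brand info plus outputs from earlier steps.
Memory = dict[str, str]
Agent = Callable[[Memory], str]

def research_agent(memory: Memory) -> str:
    # Placeholder for SERP lookups and knowledge-base retrieval.
    return f"Questions and sources for '{memory['topic']}'"

def briefing_agent(memory: Memory) -> str:
    # Builds on the research the previous agent saved to memory.
    return f"Outline based on: {memory['research']}"

def drafting_agent(memory: Memory) -> str:
    # Uses brand info stored in memory plus the approved brief.
    return f"Draft in a {memory['brand_voice']} voice following: {memory['brief']}"

def orchestrate(steps: list[tuple[str, Agent]], memory: Memory) -> Memory:
    """Run each agent in order and save its output under a key in shared memory,
    so later agents (and future runs) can build on it."""
    for output_key, agent in steps:
        memory[output_key] = agent(memory)
    return memory

memory = orchestrate(
    [("research", research_agent), ("brief", briefing_agent), ("draft", drafting_agent)],
    {"topic": "agentic AI for SMBs", "brand_voice": "friendly"},
)
print(memory["draft"])
```

Because every agent reads from and writes to the same memory, the order of steps matters, and the orchestrator is the single place where that order is defined.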

Example: an SEO blog pipeline with agents + HIL

Here’s a beginner-friendly mental model you can adopt today. Picture three swimlanes:

Agents: Research → Brief → Draft → Edit → Publish → Distribute, each handled by a specialized agent.

Human Reviewer: you or your editor approve pivotal handoffs at Research→Brief, Draft→Edit, and Edit→Publish.

Tools: a SERP API and a private knowledge base for research, your CMS plus SEO plugin for publishing, an email/social scheduler for distribution, and analytics for feedback.

Agents plan and act; you review the key gates; analytics feed the loop so agents can learn and adapt. That’s agentic AI marketing in practice.
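The review gates boil down to a simple rule: the pipeline pauses at any gated hand-off until a person approves. This Python sketch assumes a hypothetical `approve` callback that stands in for however your reviewer signs off (for example, a CMS approval or a checklist form).

```python
# Hand-offs that need a human sign-off before the next stage runs.
GATED_HANDOFFS = {("Research", "Brief"), ("Draft", "Edit"), ("Edit", "Publish")}
STAGES = ["Research", "Brief", "Draft", "Edit", "Publish", "Distribute"]

def run_pipeline(approve):
    """Walk the stages in order and stop at any gated hand-off the reviewer rejects.
    `approve(from_stage, to_stage)` is a hypothetical stand-in for your review step."""
    completed = []
    for current, nxt in zip(STAGES, STAGES[1:]):
        completed.append(current)
        if (current, nxt) in GATED_HANDOFFS and not approve(current, nxt):
            return completed, f"Paused for revisions at {current}→{nxt}"
    completed.append(STAGES[-1])
    return completed, "Done"

# Example reviewer who sends the draft back instead of approving Draft→Edit.
done, status = run_pipeline(lambda src, dst: (src, dst) != ("Draft", "Edit"))
print(done, status)
```

Keeping the gates in one set makes it easy to add or remove a checkpoint later without touching the agents themselves.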

Step-by-step micro-playbook (research → brief → draft → edit → publish → distribute)

Below is a practical, platform-agnostic way to pilot this workflow. Keep scopes small at first and expand once quality and governance feel solid.

1) Research

Purpose: Identify a topic that matches search intent and your ideal customer profile (ICP).

What the agent does: Gathers SERP data, classifies intent, compiles questions and trusted sources, and checks your knowledge base for proprietary insights.

Human-in-the-loop (HIL): Approve the topic and sources; spot-check for accuracy and alignment.

Tip: Start with read-only connectors to SERP APIs and your knowledge base to prevent unintended edits to live systems.

Neutral product example: At the research→brief handoff, some teams use a topic-mapping agent to turn intent data into an outline; for instance, QuickCreator’s Topic Intelligence Agent can support brief creation based on real search intent (Knowledge Base Source). Keep any tool’s suggestions under HIL.

2) Brief

Purpose: Translate research into a clear plan—audience, angle, outline, sources, and success criteria.

What the agent does: Proposes title options, a target keyword, an outline with H2/H3s, internal/external links to consider, and a checklist for E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness).

HIL: Approve the brief, adjust the angle, confirm keywords and must-cite sources before drafting.

Tip: Define “done” with a short checklist (intent match, unique angle, internal link targets, at least two authoritative sources).
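A definition of done is easy to encode as a checklist the brief must pass before a human reviews it. In this Python sketch the `Brief` fields and the `definition_of_done` function are hypothetical; code can only confirm that an angle is stated, so judging whether it is genuinely unique stays with your reviewer.

```python
from dataclasses import dataclass, field

@dataclass
class Brief:
    # Hypothetical fields; adapt them to whatever your brief template captures.
    target_keyword: str
    search_intent: str   # intent found during research, e.g. "informational"
    planned_intent: str  # intent the outline is written for
    angle: str
    internal_links: list[str] = field(default_factory=list)
    authoritative_sources: list[str] = field(default_factory=list)

def definition_of_done(brief: Brief, min_sources: int = 2) -> list[str]:
    """Return the checklist items that still fail; an empty list means 'done'."""
    problems = []
    if brief.planned_intent != brief.search_intent:
        problems.append("Outline does not match search intent")
    if not brief.angle.strip():
        problems.append("No angle stated")
    if not brief.internal_links:
        problems.append("No internal link targets")
    if len(brief.authoritative_sources) < min_sources:
        problems.append(f"Needs at least {min_sources} authoritative sources")
    return problems

brief = Brief("agentic ai marketing", "informational", "informational",
              "A plain-English primer for SMB owners",
              internal_links=["/blog/seo-basics"],
              authoritative_sources=["NIST AI RMF"])
print(definition_of_done(brief))  # ['Needs at least 2 authoritative sources']
```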

3) Draft

Purpose: Produce a first draft that follows the brief and uses your brand voice.

What the agent does: Generates a draft, cites sources inline, and flags areas needing human anecdotes or data pulls.

HIL: Review voice, facts, and structure. Reject and comment where claims lack support.

Tip: Require the agent to list every external claim it made, along with its source, so you can verify links and dates.
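One way to make that requirement enforceable is to have the drafting agent return a claim ledger alongside the draft. This Python sketch assumes a hypothetical ledger format (claim text, source URL, publication date) and a cutoff date you choose; it only flags gaps, and a human still opens each link.

```python
from datetime import date

# Hypothetical claim ledger returned by the drafting agent; the URL is a placeholder.
claims = [
    {"claim": "Claim A from the draft", "source_url": "https://example.com/report",
     "published": date(2025, 3, 1)},
    {"claim": "Claim B from the draft", "source_url": "", "published": None},
]

def claims_to_verify(claims: list[dict], oldest_allowed: date) -> list[tuple[str, str]]:
    """Flag claims with no source, or with a source that is undated or older than the cutoff."""
    flagged = []
    for c in claims:
        if not c["source_url"]:
            flagged.append((c["claim"], "missing source"))
        elif c["published"] is None or c["published"] < oldest_allowed:
            flagged.append((c["claim"], "missing or stale date"))
    return flagged

print(claims_to_verify(claims, oldest_allowed=date(2024, 1, 1)))
```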

4) Edit/QA

Purpose: Strengthen clarity, accuracy, and compliance before publishing.

What the agent does: Runs a quality pass (readability, broken links, missing alt text), checks for on-brand terminology, and prepares an edit summary with suggested fixes.

HIL: Senior editor reviews changes, confirms citations, and approves or returns with comments.

Helpful resource: For E-E-A-T-style quality checks, see QuickCreator’s public help doc on review criteria, “How to review content quality with E-E-A-T analysis” (Knowledge Base Source).
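Parts of the mechanical quality pass are simple to automate. This sketch uses Python’s standard-library `html.parser` to flag images without alt text and links with an empty `href`. The `QAChecker` class is a hypothetical example, and it does not check whether links are actually broken; that would require fetching each URL.

```python
from html.parser import HTMLParser

class QAChecker(HTMLParser):
    """Collect a few mechanical QA issues from an article's HTML."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value can be None.
        attributes = dict(attrs)
        if tag == "img" and not (attributes.get("alt") or "").strip():
            self.issues.append(f"Image missing alt text: {attributes.get('src', '?')}")
        if tag == "a" and not (attributes.get("href") or "").strip():
            self.issues.append("Link with empty href")

html = ('<p>Intro</p><img src="diagram.png"><a href="">read more</a>'
        '<img src="crew.png" alt="Agent crew">')
checker = QAChecker()
checker.feed(html)
print(checker.issues)
```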

5) Publish

Purpose: Move the approved article to your CMS with proper formatting and metadata.

What the agent does: Prepares the post in draft, fills metadata (title tag, meta description), sets categories/tags, and runs an SEO plugin check.

HIL: Use a pre-publish checklist and role-based approvals in your CMS.
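A pre-publish checklist can also run as code before a post reaches the human approver. This Python sketch assumes hypothetical post fields (`title_tag`, `meta_description`, `categories`, `status`). The 60- and 160-character limits are common rules of thumb, not official limits, since search engines truncate snippets by display width.

```python
# Rules of thumb, not official limits.
TITLE_MAX = 60
DESCRIPTION_MAX = 160

def prepublish_check(post: dict) -> list[str]:
    """Return pre-publish problems for a post dict with hypothetical field names."""
    problems = []
    title = post.get("title_tag", "").strip()
    description = post.get("meta_description", "").strip()
    if not title:
        problems.append("Missing title tag")
    elif len(title) > TITLE_MAX:
        problems.append(f"Title tag is {len(title)} chars (aim for <= {TITLE_MAX})")
    if not description:
        problems.append("Missing meta description")
    elif len(description) > DESCRIPTION_MAX:
        problems.append(f"Meta description is {len(description)} chars (aim for <= {DESCRIPTION_MAX})")
    if not post.get("categories"):
        problems.append("No category set")
    # The agent only saves drafts; a human with the right CMS role publishes.
    if post.get("status") != "draft":
        problems.append("Post is not in draft status")
    return problems

post = {"title_tag": "Agentic AI Marketing for SMBs: A Plain-English Primer",
        "meta_description": "", "categories": ["AI"], "status": "draft"}
print(prepublish_check(post))  # ['Missing meta description']
```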

6) Distribute

Purpose: Extend reach and capture early signals.

What the agent does: Queues a short LinkedIn post and a newsletter blurb, adds UTM tags to the links, schedules both, and then pulls early metrics.

HIL: Confirm channel fit, timing, and any compliance language.

Tip: Fold early analytics into an evaluation loop so the next brief reflects what actually resonated.
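UTM tags are query parameters (`utm_source`, `utm_medium`, `utm_campaign`) appended to the post URL so analytics can attribute visits to each channel. This Python sketch uses only the standard library’s `urllib.parse`; the URL and campaign name are placeholders.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse

def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Append standard UTM parameters while keeping any existing query string."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query))
    query.update({"utm_source": source, "utm_medium": medium, "utm_campaign": campaign})
    return urlunparse(parts._replace(query=urlencode(query)))

post_url = "https://example.com/blog/agentic-ai-primer"
linkedin_url = add_utm(post_url, "linkedin", "social", "agentic-ai-primer")
newsletter_url = add_utm(post_url, "newsletter", "email", "agentic-ai-primer")
print(linkedin_url)
print(newsletter_url)
```

Using one consistent campaign name across channels makes the early metrics easy to group when you fold them back into the next brief.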

Governance, guardrails, and safety (beginner-friendly)

Good governance makes agentic AI safe and useful. A helpful way to think about oversight comes from the NIST AI Risk Management Framework’s emphasis on defined human roles and continuous evaluation. See NIST’s AI RMF Core overview for how organizations formalize oversight and improvement.

Starter practices you can implement now:

Human oversight at clear gates: Require approvals at Research→Brief, Draft→Edit, and Edit→Publish.

Restricted scopes and permissions: Begin with read-only connectors and a sandbox workspace before allowing write actions to CMS or email.

Guardrails and policies: Establish off-limit topics, required citation standards, and escalation rules.

Audit trails: Keep logs of agent actions, edits, and approvals for accountability.

Evaluation loops: Track draft quality, edit effort, and performance; adjust prompts, playbooks, or permissions accordingly.
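Restricted scopes and audit trails fit together naturally: every tool call passes through one checkpoint that checks permissions and writes a log entry. In this Python sketch the tool names, the `PERMISSIONS` map, and `call_tool` are hypothetical; a real connector would perform the action where the comment indicates, and a blocked call is where you would escalate to a human.

```python
from datetime import datetime, timezone

# Hypothetical pilot permissions: read-only everywhere except a sandbox CMS.
PERMISSIONS = {
    "serp_api": {"read"},
    "knowledge_base": {"read"},
    "cms_sandbox": {"read", "write"},
    "cms_production": {"read"},
    "email": set(),
}
AUDIT_LOG = []

def call_tool(agent: str, tool: str, action: str, payload: dict) -> bool:
    """Allow the call only if the tool's scope permits the action; log every attempt."""
    allowed = action in PERMISSIONS.get(tool, set())
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent, "tool": tool, "action": action,
        "allowed": allowed, "payload": payload,
    })
    # A real connector would perform the action here when allowed,
    # and notify a human reviewer when it is blocked.
    return allowed

call_tool("DraftingAgent", "cms_sandbox", "write", {"title": "Draft v1"})
call_tool("DistributionAgent", "email", "write", {"subject": "New post"})  # blocked
for entry in AUDIT_LOG:
    print(entry["agent"], entry["tool"], entry["action"],
          "allowed" if entry["allowed"] else "BLOCKED")
```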

For business impact framing (and why process-level thinking matters), see McKinsey’s perspective on agentic AI automating complex processes (2025).

An SMB starter plan (30–60–90 days)

Here’s a pragmatic way to pilot one workflow without overcommitting budget or change management.

30 days: Baseline and design

Document your current SEO blog pipeline. Time each step (research, drafting, editing, approvals, publishing, distribution).

Define HIL gates and a minimal policy: citation standards, brand voice rules, and approval roles.

Configure read-only connectors to SERP/data sources and your knowledge base.

60 days: Pilot and refine

Run 3–5 posts end-to-end with agents. Keep scope narrow (no write access to production systems yet).

Capture edit rounds per draft, time-to-first-draft, and publish velocity, and compare them against your baseline.

Tune prompts, playbooks, and checklists. Expand knowledge base coverage.

90 days: Expand and measure outcomes

Grant limited write permissions where safe (e.g., staging in CMS).

Add distribution automations with HIL approvals.

Start tracking organic clicks/impressions, assisted conversions, and GEO/AEO citations.

Suggested starter KPIs

Time-to-first-draft

Edit rounds per draft

Publish velocity (posts/week)

Organic clicks and impressions (GSC)

Assisted conversions/pipeline attribution (analytics/CRM)

Answer-engine/GEO citations for priority topics
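Once you have timed the baseline and the pilot, comparing the first three KPIs is simple arithmetic. This Python sketch uses made-up placeholder numbers and hypothetical field names purely to show the calculation; substitute your own logs. Organic clicks, assisted conversions, and answer-engine citations come from GSC, your analytics/CRM, and citation tracking rather than from a log like this.

```python
from statistics import mean

# Made-up placeholder logs, one dict per post. Replace with your own timings.
baseline_posts = [
    {"hours_to_first_draft": 6.0, "edit_rounds": 3},
    {"hours_to_first_draft": 5.0, "edit_rounds": 2},
]
pilot_posts = [
    {"hours_to_first_draft": 2.0, "edit_rounds": 2},
    {"hours_to_first_draft": 3.0, "edit_rounds": 3},
    {"hours_to_first_draft": 1.0, "edit_rounds": 1},
]

def summarize(posts: list[dict], weeks: float) -> dict[str, float]:
    """Average the per-post KPIs and compute publish velocity for the period."""
    return {
        "avg_hours_to_first_draft": mean(p["hours_to_first_draft"] for p in posts),
        "avg_edit_rounds": mean(p["edit_rounds"] for p in posts),
        "posts_per_week": len(posts) / weeks,
    }

before = summarize(baseline_posts, weeks=4)
after = summarize(pilot_posts, weeks=4)
for kpi in before:
    change_pct = (after[kpi] - before[kpi]) / before[kpi] * 100
    print(f"{kpi}: {before[kpi]:.2f} -> {after[kpi]:.2f} ({change_pct:+.0f}%)")
```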

Glossary: 12 plain-English terms you’ll see

Agent: A software role that perceives, reasons, and acts toward a goal, often by calling tools/APIs. Sources: IBM; MIT Sloan; NVIDIA.

Agentic AI: Goal-driven systems that plan, act via tools, observe results, and adapt under guardrails across workflows. Sources: NVIDIA; IBM; MIT Sloan.

Orchestrator: The coordinator that sequences agents and tool calls to achieve the overall goal. Sources: IBM orchestration; NVIDIA blueprints.

Tool connector (integration): The bridge (API/function/plugin) that lets agents act in systems like CMS, analytics, email, or social.

Memory: Stored context from past runs (brand info, prior drafts, decisions) used to improve future steps.

RAG (retrieval-augmented generation): A technique that pulls relevant documents/notes before generating, to ground outputs in facts.

Human-in-the-loop (HIL): Planned review/approval points where humans confirm or correct agent work. Source: NIST AI RMF’s emphasis on human oversight.

Guardrails: Policies and runtime checks that limit unsafe or off-policy actions (e.g., scope restrictions, content filters). Sources: NVIDIA guardrails concepts; NIST oversight themes.

Evaluation loop: Observe → assess → refine cycle that improves prompts, playbooks, or permissions over time.

Prompt schema: A structured prompt template (plus tool/function signatures) that standardizes how agents behave.

Playbook: A repeatable workflow definition with roles, inputs, outputs, and checkpoints.

GEO/AEO (Generative/Answer Engine Optimization): Practices to help your content appear as citations or answers in AI/answer engines.
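To see what RAG means mechanically, here is a deliberately tiny Python sketch: it ranks knowledge-base snippets by word overlap with a question and places the best matches in front of the question as sources. Production systems typically use embeddings and a vector index instead of word overlap; the snippets, IDs, and prompt wording here are hypothetical, and the generation step (sending the prompt to a model) is left out.

```python
import re

# Hypothetical private knowledge-base snippets.
KNOWLEDGE_BASE = {
    "brand-voice": "Our brand voice is friendly, plain-English, and avoids hype.",
    "pricing": "We offer three plans for small businesses.",
    "newsletter": "Newsletter posts go out every Tuesday.",
}

def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, k: int = 2) -> list[str]:
    """Return up to k snippet IDs ranked by word overlap (a stand-in for vector search)."""
    q = tokens(question)
    ranked = sorted(KNOWLEDGE_BASE.items(),
                    key=lambda item: len(q & tokens(item[1])), reverse=True)
    return [doc_id for doc_id, text in ranked[:k] if q & tokens(text)]

def grounded_prompt(question: str) -> str:
    """Put the retrieved snippets ahead of the question so the answer is grounded in them."""
    context = "\n".join(f"[{doc_id}] {KNOWLEDGE_BASE[doc_id]}" for doc_id in retrieve(question))
    return f"Answer using only the sources below and cite them.\n{context}\n\nQuestion: {question}"

print(grounded_prompt("What brand voice should the newsletter use?"))
```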

Where to learn more

If you want a neutral, practical example of brand voice guardrails and private knowledge bases in an agentic setup, see QuickCreator’s Brand Intelligence Agent (Knowledge Base Source).

To go deeper on fundamentals, start with NVIDIA’s overview of agentic AI and MIT Sloan’s explainer for accessible, high-level context.

Here’s the deal: agentic AI marketing isn’t about replacing your team—it’s about coordinating reliable, tool-using helpers with clear human guardrails so your small team ships better work, faster, and with confidence.