MTA in the U.S. in 2025: Privacy‑Safe Approaches, iOS/Android Realities, and the Stack We Recommend

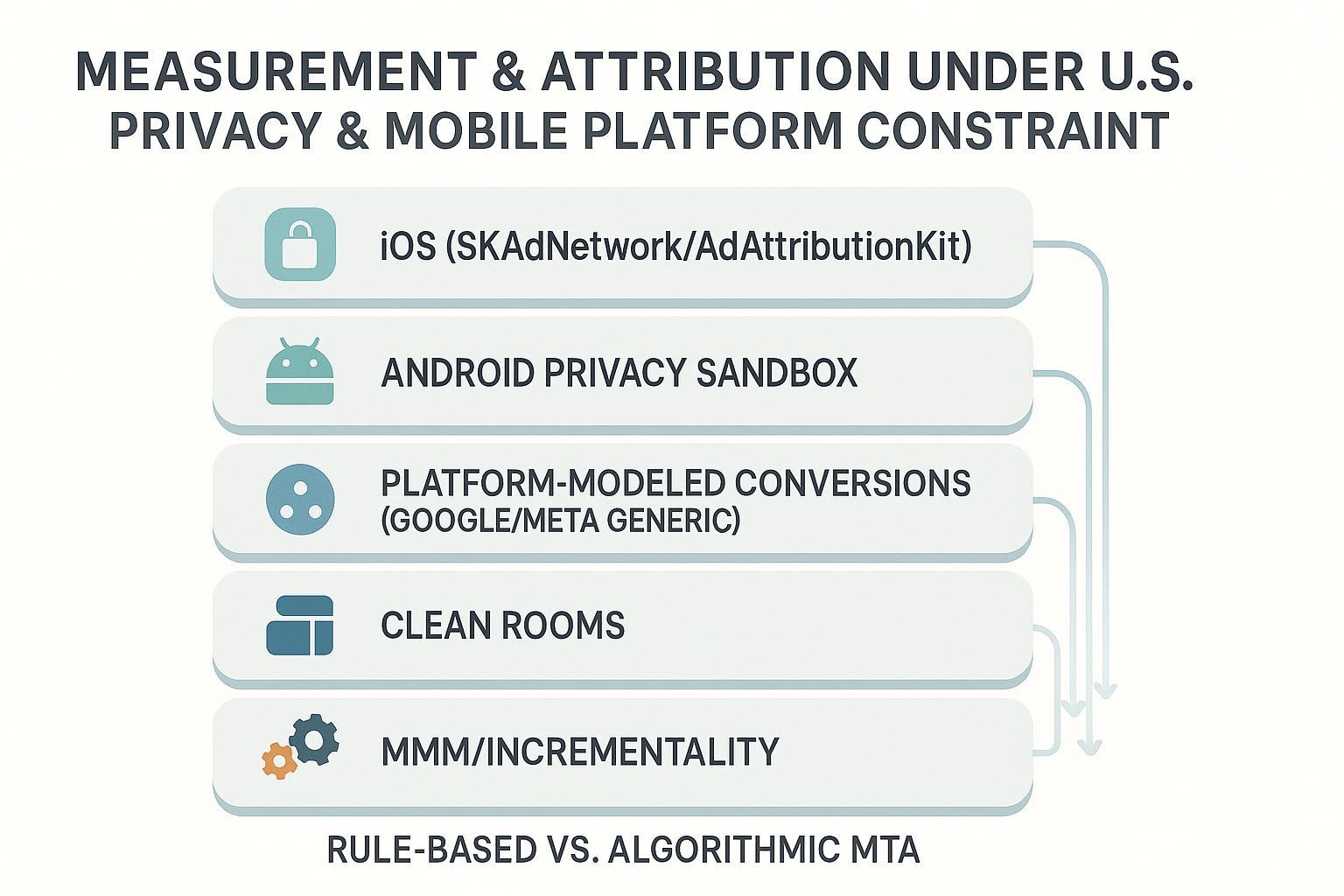

If you run performance marketing in the United States today, you’re navigating three converging currents: stronger state privacy laws (CPRA and peers), Apple’s ATT regime with SKAdNetwork/AdAttributionKit on iOS, and Android’s shift to the Privacy Sandbox. The upshot is clear: user‑level, cross‑site/device paths are scarce; aggregated and modeled signals are ascendant; and compliance expectations around consent, transparency, and sensitive data are rising.

This guide compares the major attribution approaches that remain viable in 2025, explains their trade‑offs in real use, and ends with a pragmatic, composable measurement stack you can deploy now. Legal note: this article is informational; for state‑specific interpretations, consult qualified counsel.

What materially changed (2024–2025)

- Apple continued migrating toward privacy‑safe app attribution via SKAdNetwork (SKAN) 4.x and its successor framework, AdAttributionKit (AAK). Apple confirms the two are interoperable and details postbacks and conversion handling in its developer documentation; the relationship is described on Apple’s AdAttributionKit–SKAdNetwork interoperability page (2025).

- Android’s Privacy Sandbox Attribution Reporting API is the new baseline, providing event‑level reports with limited bits plus richer, aggregated summary reports—designed without cross‑party identifiers. Google’s mobile overview summarizes the model and latency trade‑offs in the Attribution Reporting for Android documentation (2025).

- Platforms leaned harder into modeled conversions with privacy controls (e.g., Google’s Consent Mode). When consent is withheld, tags adapt and conversions can be modeled from aggregated, consent‑aware pings, as outlined in Google’s Consent Mode overview (updated 2024–2025).

- U.S. privacy enforcement matured. CPRA regulations stress opt‑out rights, honoring user‑enabled global privacy controls (like GPC), and sensitive data handling. The California Privacy Protection Agency’s CPRA regulations (PDF) are the canonical reference (2023–2024 text, enforced into 2025).

How the main approaches stack up

| Approach | Compliance posture (U.S./ATT) | Data needs | Granularity | Latency | Bias risk | Complexity/cost | Best fit |
|---|---|---|---|---|---|---|---|
| Rule‑based MTA (first/last/linear/time‑decay) | Generally compliant on consented first‑party web/app data; avoid cross‑site/device stitching without consent | Clickstream + events | Session/campaign level | Near‑real‑time | High (channel/last‑click bias) | Low | Quick directional splits; small teams |
| Data‑driven MTA (Markov, Shapley, ML) | Challenging under ATT and cookie loss; feasible with aggregated/consented data or clean rooms | Robust, well‑labeled events; identity/consent segmentation | Varies; often aggregate | Hours–days | Medium (model uncertainty); lower than rule‑based if calibrated | High | Sophisticated teams validating with experiments |
| Platform‑modeled conversions (Google/Meta) | Strong alignment with platform privacy/consent rules | Proper tagging/CAPI; Consent Mode/server‑side recommended | Channel/campaign | Hours to 1–2 days | Medium (platform bias) | Medium | Tactical optimization and bidding |
| iOS SKAdNetwork/AdAttributionKit | ATT‑compatible; no user‑level cross‑app IDs | Conversion value schema, postback handling | Aggregated | Delayed postbacks | Medium (schema‑dependent) | Medium–High | iOS app UA and re‑engagement |
| Android Privacy Sandbox Attribution Reporting | Strong; no cross‑party IDs | SDK integration, source/trigger design | Limited event bits + aggregated summaries | Event‑level sooner; summary delayed | Medium (API noise) | Medium | Android app UA and web↔app |
| Probabilistic/fingerprinting | High risk under ATT; avoid on iOS; risky vs U.S. opt‑out/GPC norms | Device/browser signals (disallowed on iOS) | Pseudo‑user level | Near‑real‑time | High and non‑compliant | Low–Medium tech; high policy risk | Not recommended |
| Clean room–assisted attribution (ADH/AMC/Meta) | Strong; aggregation thresholds; vetted environments | First‑party IDs, governance, SQL | Aggregated | Batch | Medium (walled‑garden blind spots) | Medium–High | Deep dives, pathing, incrementality |
| MMM + incrementality | Strong; fully aggregated | Spend, outcomes, promos, seasonality, macro | Strategic, channel/region | Weeks–monthly | Medium (specification risk); mitigated by tests | Medium–High | Budget planning and calibration |

Approach capsules: what to know before you deploy

Rule‑based MTA (first/last/linear/time‑decay)

- What it is: Heuristic credit assignment. Credit is either split across touches by a fixed rule (linear, time‑decay) or given entirely to one touch (first or last).

- Data & compliance: Works on consented first‑party analytics and server‑side event streams. Avoid cross‑site/device stitching without explicit consent and contracts.

- Pros: Simple, fast, cheap, embedded in most analytics; good for directional readouts and quick QA.

- Constraints: Systematic bias (last‑click, channel mix); fragile with cookie loss; not finance‑grade without calibration.

- Who it’s for: Teams needing immediate directional feedback with minimal engineering.

- When to avoid: High‑stakes budget reallocation without any calibration.
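
To make the four heuristics concrete, here is a minimal Python sketch of rule-based credit splitting for a single converting path. The function name, the `(channel, days_before_conversion)` tuple layout, and the 7-day half-life are illustrative assumptions, not a standard.

```python
from collections import defaultdict

def rule_based_credit(touches, model="linear", half_life_days=7.0):
    """Split one conversion's credit across an ordered list of touches.

    touches: list of (channel, days_before_conversion), oldest first.
    Returns {channel: credit}; credits sum to 1.0.
    """
    if not touches:
        return {}
    n = len(touches)
    if model == "first":
        weights = [1.0] + [0.0] * (n - 1)
    elif model == "last":
        weights = [0.0] * (n - 1) + [1.0]
    elif model == "linear":
        weights = [1.0] * n
    elif model == "time_decay":
        # Weight halves for every `half_life_days` between touch and conversion.
        weights = [0.5 ** (days / half_life_days) for _, days in touches]
    else:
        raise ValueError(f"unknown model: {model}")
    total = sum(weights)
    credit = defaultdict(float)
    for (channel, _), weight in zip(touches, weights):
        credit[channel] += weight / total
    return dict(credit)

# Hypothetical path: social 14 days out, email 7 days out, search on the day.
path = [("paid_social", 14), ("email", 7), ("paid_search", 0)]
for model in ("first", "last", "linear", "time_decay"):
    print(model, rule_based_credit(path, model))
```

Running the same path through all four rules shows how much the answer depends on the rule chosen, which is the bias the constraints above describe.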

Data‑driven MTA (Markov, Shapley, ML)

- What it is: Algorithmic credit assignment based on path removal effects or predictive modeling.

- Data & compliance: Needs robust event capture and identity partitioning by consent. Under ATT/cookie loss, requires aggregated inputs or clean rooms; avoid reconstructing user‑level identities without consent.

- Pros: Reduces channel bias; can approximate marginal contributions.

- Constraints: Data‑hungry; model mis‑specification risk; operational overhead.

- Who it’s for: Advanced teams with data science support, willing to validate with incrementality tests and MMM.

- When to avoid: Sparse data, short evaluation windows, or stringent governance with no clean‑room capacity.
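
As an illustration of "path removal effects," the sketch below computes a first-order Markov removal effect from aggregated path counts (no user-level rows). The state labels, the `(channels, converted, count)` row layout, and the fixed iteration count are assumptions for the example; production models typically add higher-order states and uncertainty estimates.

```python
from collections import defaultdict

START, CONV, NULL = "(start)", "(conversion)", "(null)"

def transition_probs(paths):
    """paths: aggregated rows of (channels, converted, count)."""
    counts = defaultdict(lambda: defaultdict(float))
    for channels, converted, n in paths:
        states = [START, *channels, CONV if converted else NULL]
        for a, b in zip(states, states[1:]):
            counts[a][b] += n
    return {a: {b: c / sum(row.values()) for b, c in row.items()}
            for a, row in counts.items()}

def conversion_prob(probs, removed=None, iters=500):
    """P(reach CONV from START); any visit to `removed` is treated as lost."""
    value = defaultdict(float)  # unknown states (including NULL) default to 0
    value[CONV] = 1.0
    for _ in range(iters):
        for state, row in probs.items():
            if state == removed:
                continue
            value[state] = sum(
                p * (0.0 if nxt == removed else value[nxt])
                for nxt, p in row.items()
            )
    return value[START]

def markov_attribution(paths):
    """Normalized removal effects per channel (assumes >= 1 conversion)."""
    probs = transition_probs(paths)
    base = conversion_prob(probs)
    channels = [s for s in probs if s != START]
    effects = {c: 1.0 - conversion_prob(probs, removed=c) / base
               for c in channels}
    total = sum(effects.values())
    return {c: e / total for c, e in effects.items()}

# Hypothetical aggregated paths.
paths = [
    (("search",), True, 30),
    (("social", "search"), True, 20),
    (("social",), False, 40),
    (("email",), False, 10),
]
print(markov_attribution(paths))
```

In this toy data, removing search loses every conversion and removing social loses 40% of them, so the normalized shares come out to roughly 71% and 29%, with email at zero.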

Platform‑modeled conversions (Google, Meta)

- What it is: Platforms estimate conversions lost to consent or device limits and surface them in reports and bidding.

- Data & compliance: Strong alignment with platform privacy regimes; relies on correct consent signals and server‑side or enhanced tagging. Google describes how tags adapt and models estimate gaps in its Consent Mode overview.

- Pros: Baked into ad platforms; improves optimization even with signal loss.

- Constraints: Model opacity; platform bias; reconciliation needed against finance and tests.

- Who it’s for: Most advertisers as a tactical baseline.

- When to avoid: Never entirely, but don’t treat them as a single source of truth.

iOS SKAdNetwork (SKAN) / AdAttributionKit (AAK)

- What it is: Apple’s privacy‑safe app attribution frameworks. SKAN 4.x supports multiple postbacks, hierarchical conversion values, and web‑to‑app; AAK is the next‑gen framework interoperable with SKAN.

- Data & compliance: ATT‑compatible by design; no cross‑app IDs. Apple clarifies the relationship and behaviors in AdAttributionKit–SKAdNetwork interoperability.

- Pros: Policy‑safe measurement on iOS; broader coverage as networks adopt AAK; cryptographically signed postbacks.

- Constraints: Aggregated and delayed signals; schema design matters; limited pathing; evolving partner support for AAK.

- Who it’s for: iOS app marketers needing compliant install and re‑engagement measurement.

- Practical tips: Design a conversion value schema that balances early revenue proxies with retention; consider using lockWindow strategically to accelerate feedback while accepting later signal loss.
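
One way to reason about a conversion value schema is as a bit layout. The Python sketch below packs a revenue bucket, a funnel milestone, and a day-1 retention flag into the 6-bit fine conversion value range (0 to 63) and derives a coarse low/medium/high value. The bucket bounds, milestone names, and coarse cut points are hypothetical; the on-device call that actually submits the value is not shown.

```python
from bisect import bisect_right

SCHEMA_VERSION = "v1"  # hypothetical label; version every schema change

# 8 revenue buckets -> 3 bits (lower bounds in USD, illustrative only).
REVENUE_LOWER_BOUNDS = [0.0, 0.01, 1.0, 5.0, 10.0, 20.0, 50.0, 100.0]
# 4 funnel milestones -> 2 bits.
MILESTONES = ["install", "tutorial_complete", "trial_start", "subscribed"]

def encode_fine_value(revenue_usd, milestone, returned_day1):
    """Pack revenue bucket (3 bits), milestone (2 bits), retention (1 bit)."""
    bucket = max(bisect_right(REVENUE_LOWER_BOUNDS, revenue_usd) - 1, 0)
    stage = MILESTONES.index(milestone)
    value = (bucket << 3) | (stage << 1) | int(returned_day1)
    assert 0 <= value <= 63, "fine conversion values must fit in 6 bits"
    return value

def decode_fine_value(value):
    """Inverse of encode_fine_value, for postback processing."""
    return {
        "revenue_bucket": value >> 3,
        "milestone": MILESTONES[(value >> 1) & 0b11],
        "returned_day1": bool(value & 1),
    }

def coarse_value(fine_value):
    """Map a fine value to a coarse tier (cut points are assumptions)."""
    if fine_value < 16:
        return "low"
    return "medium" if fine_value < 40 else "high"

fine = encode_fine_value(12.50, "trial_start", returned_day1=True)
print(fine, decode_fine_value(fine), coarse_value(fine))
```

Putting revenue in the high bits keeps fine values roughly monotonic in value, which makes the coarse tiers easier to define; a retention-first layout would be an equally valid choice depending on what you optimize for.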

Android Privacy Sandbox Attribution Reporting

- What it is: The Android API for measuring ads without cross‑party identifiers, offering limited event‑level data plus richer aggregated summaries.

- Data & compliance: Strong privacy posture by construction. Google’s mobile documentation outlines event versus summary reports, noise, and timing in Attribution Reporting for Android.

- Pros: Future‑proof foundation; works for app↔web; standardized across the ecosystem.

- Constraints: Limited event bits; delayed and noisy aggregate reports; evolving tools.

- Who it’s for: Android app growth teams and mixed web↔app funnels.

- Practical tips: Separate pipelines for event and summary reports; use debug channels in non‑prod; align optimization goals to the bits you can reliably capture.
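
The "align goals to the bits" tip can be planned offline before any SDK work. The Python sketch below assigns event-level trigger codes to a priority-ordered list of outcomes and reports which outcomes do not fit. It assumes 3 bits of trigger data for click sources and 1 bit for view sources; treat those capacities, and all outcome names, as assumptions to verify against the current Attribution Reporting documentation.

```python
# Assumed event-level capacity per source type; verify against current docs.
TRIGGER_DATA_BITS = {"click": 3, "view": 1}

def assign_trigger_codes(outcomes_by_priority, source_type):
    """Give the highest-priority outcomes a code; return the rest as dropped."""
    capacity = 2 ** TRIGGER_DATA_BITS[source_type]
    kept = outcomes_by_priority[:capacity]
    dropped = outcomes_by_priority[capacity:]
    return {name: code for code, name in enumerate(kept)}, dropped

def pick_trigger(observed_outcomes, codes):
    """Code of the highest-priority observed outcome (lowest code), or None."""
    matches = [codes[o] for o in observed_outcomes if o in codes]
    return min(matches) if matches else None

# Hypothetical outcomes, most important first.
outcomes = [
    "purchase", "trial_start", "add_to_cart", "tutorial_complete",
    "level_5", "share", "rate_app", "open_day_2", "open_day_7", "push_opt_in",
]
click_codes, click_dropped = assign_trigger_codes(outcomes, "click")
view_codes, view_dropped = assign_trigger_codes(outcomes, "view")
print(click_codes, click_dropped)
print(view_codes, len(view_dropped))
print(pick_trigger(["add_to_cart", "purchase"], click_codes))
```

Anything in the dropped list has to be measured through summary reports or not at all, which is a useful forcing function when agreeing on a primary optimization goal.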

Probabilistic attribution/device fingerprinting

- What it is: Inference using device/browser signals to identify users without consent.

- Data & compliance: Apple’s ATT policy defines “tracking” broadly and bars circumvention; using derived identifiers without consent conflicts with that policy. See Apple’s User Privacy & Data Use for ATT tracking definitions and restrictions.

- Pros: Short‑term apparent granularity.

- Constraints: High enforcement and compliance risk on iOS; likely conflicts with U.S. opt‑out and GPC honoring obligations.

- Who it’s for: No one on iOS; on web, avoid where opt‑outs/GPC are in play.

- Recommendation: Do not use on iOS; prefer platform‑modeled and aggregated APIs.

Clean room–assisted attribution (Google ADH, Amazon AMC, walled gardens)

- What it is: Query environments where your first‑party data is temporarily co‑analyzed with platform event‑level data, but only aggregated outputs are allowed.

- Data & compliance: Strong—privacy checks and thresholds block small‑cell leakage. Google documents ADH’s safeguards in its privacy checks documentation, and Amazon details AMC’s aggregation thresholds in its data aggregation thresholds documentation.

- Pros: Deeper pathing, reach/frequency, and incremental lift insights across walled‑garden media.

- Constraints: SQL/engineering lift; query costs; blind spots outside each walled garden; export constraints.

- Who it’s for: Mid‑to‑large spenders needing high‑confidence insights for planning and calibration.

- Practical tips: Version your queries and maintain a privacy log; triangulate clean‑room results with geo or audience holdouts.
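
To show what an aggregation threshold does to an output table, here is a small local Python illustration: rows are grouped by campaign, and any group with fewer distinct users than `min_users` is suppressed. This is not how ADH or AMC implement their checks, and the default of 50 is a placeholder; each platform documents its own thresholds.

```python
from collections import defaultdict

def aggregate_with_threshold(rows, min_users=50):
    """Aggregate (campaign, user_id, converted) rows; drop small cells.

    min_users is a placeholder threshold, not any platform's actual value.
    """
    users = defaultdict(set)
    conversions = defaultdict(int)
    for campaign, user_id, converted in rows:
        users[campaign].add(user_id)
        conversions[campaign] += int(converted)
    return {
        campaign: {"users": len(ids), "conversions": conversions[campaign]}
        for campaign, ids in users.items()
        if len(ids) >= min_users  # suppress groups that could leak individuals
    }

# Hypothetical rows: campaign "a" has 3 distinct users, "b" has only 1.
rows = [
    ("a", "u1", True), ("a", "u2", False), ("a", "u3", True),
    ("a", "u1", False), ("b", "u9", True),
]
print(aggregate_with_threshold(rows, min_users=2))
```

The practical consequence is that finely sliced queries (many dimensions, short windows) can return far fewer rows than expected, so query design should start from the coarsest cut that still answers the question.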

MMM (Marketing Mix Modeling) + incrementality testing

- What it is: Aggregated statistical modeling (MMM) of spend vs. outcomes, calibrated with experiments such as geo lift or audience holdouts.

- Data & compliance: Strong—no user‑level identifiers required. Works well alongside platform‑modeled conversions and API aggregates.

- Pros: Finance‑grade budget guidance; resilient to signal loss; explicit uncertainty estimates.

- Constraints: Requires disciplined data ops; refresh cadence; careful specification to avoid confounding.

- Who it’s for: Any advertiser allocating material budgets across channels, especially with iOS and Privacy Sandbox constraints.

- Practical tips: Include promotions, seasonality, and macro controls; run periodic holdouts to tether MMM elasticities to reality.
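
Two transforms show up in most MMM specifications: carry-over (adstock) and diminishing returns (saturation). The sketch below uses geometric adstock and a Hill curve, which are common choices rather than the only ones; the decay, half-saturation, and shape values are illustrative assumptions that a real model would estimate.

```python
def geometric_adstock(spend, decay=0.5):
    """Carry a fraction `decay` of each period's effect into the next."""
    carried, out = 0.0, []
    for x in spend:
        carried = x + decay * carried
        out.append(carried)
    return out

def hill_saturation(x, half_saturation, shape=1.0):
    """Diminishing returns: response is 0.5 when x equals half_saturation."""
    if x <= 0:
        return 0.0
    return x ** shape / (x ** shape + half_saturation ** shape)

# Hypothetical weekly spend with a single burst.
weekly_spend = [100.0, 0.0, 0.0, 200.0]
adstocked = geometric_adstock(weekly_spend, decay=0.5)
response = [hill_saturation(x, half_saturation=100.0) for x in adstocked]
print(adstocked)
print([round(r, 3) for r in response])
```

The transformed series, not raw spend, is what enters the regression, and the fitted curve is what lets you compare marginal return across channels at their current spend levels.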

Compliance guardrails for U.S. marketers

- Apple ATT and fingerprinting: Apple defines prohibited “tracking” broadly, including linking data across companies’ apps or websites for advertising or measurement without consent. Deriving or combining device signals to bypass ATT is out of bounds; see Apple’s User Privacy & Data Use page for the 2025 policy language.

- CPRA obligations: Maintain clear notices, honor opt‑out of “sale/sharing,” process user‑enabled global privacy controls, and apply heightened safeguards for sensitive personal information. The CPRA regulations (PDF) outline these duties. Operationalize with a CMP, consent logging, and service providers bound by written data contracts.

- Consent propagation: Ensure consent signals travel with events (client and server). Google documents how Consent Mode adapts tag behavior and supports modeled conversions in its Consent Mode overview. Keep similar rigor across non‑Google stacks.

- Documentation: Keep a measurement governance log: SDK versions (SKAN/AAK/Android), schema revisions, consent configurations by state, and experiment calendars. This speeds audits and helps finance reconcile modeled vs. observed outcomes.
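
As a sketch of consent propagation on the server side, the Python below reads the `Sec-GPC` request header (the header defined by the Global Privacy Control proposal, sent as `1` when enabled), merges it with CMP choices, and attaches the result to an event. The consent field names and the decision to map GPC onto a `sale_share_opt_out` flag are assumptions for illustration, not legal guidance.

```python
def resolve_consent(headers, cmp_choices):
    """Combine the GPC signal with CMP choices into one consent record.

    headers: mapping of HTTP request headers (names are case-insensitive).
    cmp_choices: hypothetical dict from your CMP, e.g. {"analytics": True}.
    """
    normalized = {k.lower(): str(v).strip() for k, v in headers.items()}
    gpc = normalized.get("sec-gpc") == "1"
    return {
        "gpc": gpc,
        # Assumption: a GPC signal is treated as an opt-out of sale/sharing.
        "sale_share_opt_out": gpc or bool(cmp_choices.get("sale_share_opt_out", False)),
        "analytics": bool(cmp_choices.get("analytics", False)),
    }

def attach_consent(event, consent):
    """Return a copy of the event carrying its consent state downstream."""
    return {**event, "consent": dict(consent)}

consent = resolve_consent({"Sec-GPC": "1"}, {"analytics": True})
event = attach_consent({"name": "purchase", "value": 42.0}, consent)
print(event)
```

Storing the consent record on the event itself, rather than looking it up later, is what makes consent-segmented reporting and audits tractable.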

The recommended, composable stack (2025)

Think in layers. You won’t get a single source of truth; you’ll reconcile multiple privacy‑safe signals into a coherent narrative that finance can endorse.

- Foundation: consent, tagging, server‑side events

  - Deploy a robust CMP that honors state‑level rights and GPC; propagate consent to all tags and server‑side pipelines.

  - Use server‑side tagging where possible to standardize events, attach consent state, and reduce client‑side noise.

  - Normalize event names and parameters across platforms; partition data by consent status for modeling and reporting.

- Tactical optimization (channel‑level)

  - Enable platform‑modeled conversions in Google and Meta with correct consent signaling and CAPI/server‑side tags. Treat them as optimization inputs, not final truth.

  - On iOS, implement SKAN 4.x or AAK via your MMP or direct integrations. Design conversion value schemas reflecting early revenue proxies (e.g., tutorial complete, subscription trial start) and retention signals; use lockWindow judiciously to speed feedback.

  - On Android, integrate the Privacy Sandbox Attribution Reporting API. Map optimization goals to the bits available in event‑level reports; build pipelines for both event and summary reports.

- Calibration and truth‑reconciliation

  - Run incrementality tests for high‑spend channels: geo lift, market holdouts, audience exclusions, or PSA/ghost ads variants where supported.

  - Stand up a lightweight MMM refreshed monthly or quarterly. Ingest spend, outcomes, promotions, and seasonality; apply adstock/lag and saturation curves; compare elasticity‑implied ROI to platform‑reported ROAS.

  - Reconcile: Build a finance‑facing “measurement ledger” that records, per channel/region, platform‑reported results, modeled conversions share, test‑measured lift, and MMM elasticities. Use deltas to adjust budgets.

- Deep dives via clean rooms when justified

  - Use Google ADH to analyze YouTube/Google Ads pathing, frequency, and incremental reach; export only aggregated results consistent with ADH privacy checks.

  - Use Amazon Marketing Cloud to understand retail media exposure and conversion paths under its aggregation thresholds.

  - For Meta and other walled gardens, leverage their clean‑room equivalents or partner MMP templates. Always carry consent segmentation into joins and outputs.
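
The measurement ledger from the calibration layer can start as a very small data structure. The Python sketch below records one row per channel/region and flags rows where platform-reported ROAS diverges from the MMM-implied ROI by more than a tolerance. All field names, the 25% tolerance, and the numbers are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class LedgerRow:
    channel: str
    region: str
    spend: float
    platform_revenue: float     # platform-reported attributed revenue
    modeled_share: float        # share of platform conversions that are modeled
    incremental_revenue: float  # measured by a lift test
    mmm_roi: float              # ROI implied by the MMM

    @property
    def platform_roas(self):
        return self.platform_revenue / self.spend

    @property
    def incremental_roas(self):
        return self.incremental_revenue / self.spend

    @property
    def calibration_factor(self):
        """Multiplier that scales platform numbers down (or up) to test lift."""
        return self.incremental_revenue / self.platform_revenue

def flag_deltas(rows, tolerance=0.25):
    """Rows where platform ROAS differs from MMM ROI by more than tolerance."""
    return [r for r in rows
            if abs(r.platform_roas - r.mmm_roi) / r.mmm_roi > tolerance]

rows = [
    LedgerRow("paid_social", "US-West", 1000.0, 4000.0, 0.30, 2500.0, 2.2),
    LedgerRow("paid_search", "US-West", 1000.0, 3000.0, 0.10, 2800.0, 2.9),
]
for row in flag_deltas(rows):
    print(row.channel, row.platform_roas, round(row.calibration_factor, 3))
```

Here only the paid social row is flagged: its platform ROAS of 4.0 sits well above both the test-measured 2.5 and the MMM-implied 2.2, which is the kind of delta that should prompt a budget conversation.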

Scenario playbooks

- Web‑first ecommerce (U.S.)

  - Must‑haves: CMP honoring GPC; server‑side tagging; platform‑modeled conversions (Google/Meta) with proper consent signals.

  - Optimization: Data‑driven attribution inside platforms plus controlled audience/geo experiments on big channels.

  - Calibration: Quarterly MMM; plan test windows around promo periods to avoid contamination.

  - What to skip: Probabilistic fingerprinting; opaque cross‑site stitching without consent.

- iOS app acquisition (subscriptions/IAP)

  - Must‑haves: SKAN 4.x or AAK with carefully balanced conversion value schema; postback QA; partner parity.

  - Optimization: Blend SKAN/AAK aggregates with platform‑modeled signals (e.g., on‑platform conversion objectives). Expect delayed learning and design guardrails for bid volatility.

  - Calibration: Run geo market tests; triangulate with MMM; push finance to accept aggregated evidence with documented uncertainty.

  - What to skip: Any probabilistic identification on iOS; it’s not ATT‑compliant.

- Android app acquisition

  - Must‑haves: Attribution Reporting API integration; source/trigger configurations; dual pipelines (event + summary reports).

  - Optimization: Use event‑level bits for faster feedback; fold in summary reports for richer performance reads as they arrive.

  - Calibration: Large‑network holdouts each quarter to pin down noise/model error; reconcile with MMM.

- Omnichannel with retail media/CTV

  - Must‑haves: Clean‑room access (ADH, AMC, and equivalents); cross‑garden taxonomy; server‑side event normalization.

  - Optimization: Channel‑level goals respecting each garden’s measurement primitives.

  - Calibration: Continuous MMM plus garden‑specific incrementality tests; a shared reconciliation layer for finance.
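
Every playbook above leans on holdout or geo tests for calibration. As a rough illustration of the arithmetic, the Python sketch below estimates lift with a simple ratio-scaled difference-in-differences: the control region's pre-to-post change sets the expectation for the test region. It ignores noise and confidence intervals, which a real test design needs, and all inputs are hypothetical.

```python
def geo_lift(test_pre, test_post, control_pre, control_post, incremental_spend):
    """Estimate incremental outcome in test geos versus a control trend.

    Assumes test and control would have moved proportionally without the
    campaign; this is a simplification, not a full geo-experiment method.
    """
    expected = test_pre * control_post / control_pre
    incremental = test_post - expected
    return {
        "expected": expected,
        "incremental": incremental,
        "lift": incremental / expected,
        "iroas": incremental / incremental_spend,
    }

# Hypothetical revenue: control grew 10%, test grew 32% with $100 extra spend.
result = geo_lift(
    test_pre=1000.0, test_post=1320.0,
    control_pre=2000.0, control_post=2200.0,
    incremental_spend=100.0,
)
print(result)
```

With these inputs the expected test revenue is 1100, so 220 of the 320 increase is attributed to the campaign (20% lift, incremental ROAS of 2.2); the remaining 100 is background growth that a platform report might otherwise claim.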

Implementation patterns and pitfalls to avoid

- Conversion value schema drift (iOS): Small schema changes can break year‑over‑year comparability. Version schemas, document field meanings, and rehearse postback QA before flipping in production.

- lockWindow misuse: Accelerating postbacks is tempting; ensure the early signals you retain are truly predictive of value or you’ll amplify noise.

- Android event‑bit starvation: Don’t spread limited bits across too many outcomes. Prioritize one primary trigger and a small set of secondary indicators.

- Platform bias and over‑reliance: Platform‑modeled conversions are invaluable for bidding, but always triangulate with tests and MMM. Treat each as a lens, not the truth.

- Consent gaps: If a material share of traffic withholds consent, report performance by consent segment. Use modeled conversions transparently and keep an audit log.

- Data contracts and governance: Create a single schema/taxonomy shared by engineering, analytics, and media teams. Add automated checks for missing consent flags or mislabeled events.
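
The automated checks mentioned in the last item can be a few lines run in CI or at ingestion. This Python sketch validates an event against a shared taxonomy and a required set of consent flags; the event names and consent keys are hypothetical placeholders for whatever your data contract defines.

```python
# Hypothetical shared taxonomy and required consent flags.
ALLOWED_EVENTS = {"page_view", "add_to_cart", "purchase", "trial_start"}
REQUIRED_CONSENT_KEYS = {"analytics", "sale_share_opt_out"}

def validate_event(event):
    """Return a list of problems; an empty list means the event passes."""
    problems = []
    name = event.get("name")
    if name not in ALLOWED_EVENTS:
        problems.append(f"unknown event name: {name!r}")
    consent = event.get("consent")
    if not isinstance(consent, dict):
        problems.append("missing consent block")
    else:
        missing = REQUIRED_CONSENT_KEYS - consent.keys()
        if missing:
            problems.append(f"missing consent flags: {sorted(missing)}")
    return problems

events = [
    {"name": "purchase", "consent": {"analytics": True, "sale_share_opt_out": False}},
    {"name": "Purchase", "consent": {"analytics": True}},
    {"name": "page_view"},
]
for event in events:
    print(event["name"], validate_event(event))
```

Counting failures per source and per day turns this into a simple data-quality metric that engineering, analytics, and media teams can all watch.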

How we synthesized this guide

- Sources and versions: We prioritized canonical developer and regulatory documentation as of 2024–2025, including Apple’s AdAttributionKit–SKAdNetwork interoperability, Google’s Attribution Reporting for Android, Google’s Consent Mode overview, the CPPA’s CPRA regulations (PDF), Google ADH privacy checks, and Amazon’s AMC aggregation thresholds. For ATT policy context, we referenced Apple’s User Privacy & Data Use.

- Method: We compared approaches on compliance posture, data needs, granularity/latency, bias risk, complexity/cost, and use‑case fitness. We mapped these to practical decision scenarios and layered a stack that balances optimization needs with compliance and finance reconciliation.

- Uncertainties: AAK partner adoption is evolving; Android Privacy Sandbox reporting and debugging are still maturing; Meta’s Aggregated Event Measurement (AEM) limits can change, so verify the current setup in platform UIs. Pricing for MMM/MTA vendors varies widely and is often unpublished.

Bottom line

- Treat attribution as a system, not a single model. Combine platform‑modeled conversions with SKAN/AAK on iOS and Attribution Reporting on Android, and calibrate it all with incrementality tests and MMM.

- Avoid probabilistic or fingerprint‑based methods on iOS; they’re not compatible with ATT and raise compliance risk under U.S. state privacy laws.

- Invest early in consent, server‑side tagging, and governance. The quality of your measurement stack depends on the fidelity of these foundations.

With this layered approach, you can keep optimizing tactically while maintaining a defensible, privacy‑first measurement program that finance can trust in 2025.