How to Set Up an AI-Powered SEO System

What “AI-powered SEO” really means—and what success looks like

An AI-powered SEO system isn’t just “using a tool to write posts.” It’s a reproducible operating model that turns research into plans, plans into people-first content, and content into measurable outcomes, all while protecting quality and brand trust. Your north star: create original pages that help real visitors, meet technical health thresholds, and earn visibility across classic results and answer engines. Google’s current guidance stresses helpful, unique content and technical readiness for crawling, indexing, and AI experiences; it also notes that you can manage preview behavior via snippet controls. For the policy baseline and the shift toward people-first outputs, see Google Search Central on succeeding in AI search (2025).

Get your foundations in place (prerequisites)

Before building workflows, assemble the data and environment your system will depend on. Aim to complete this setup in 4–8 hours the first time; after that, upkeep is light.

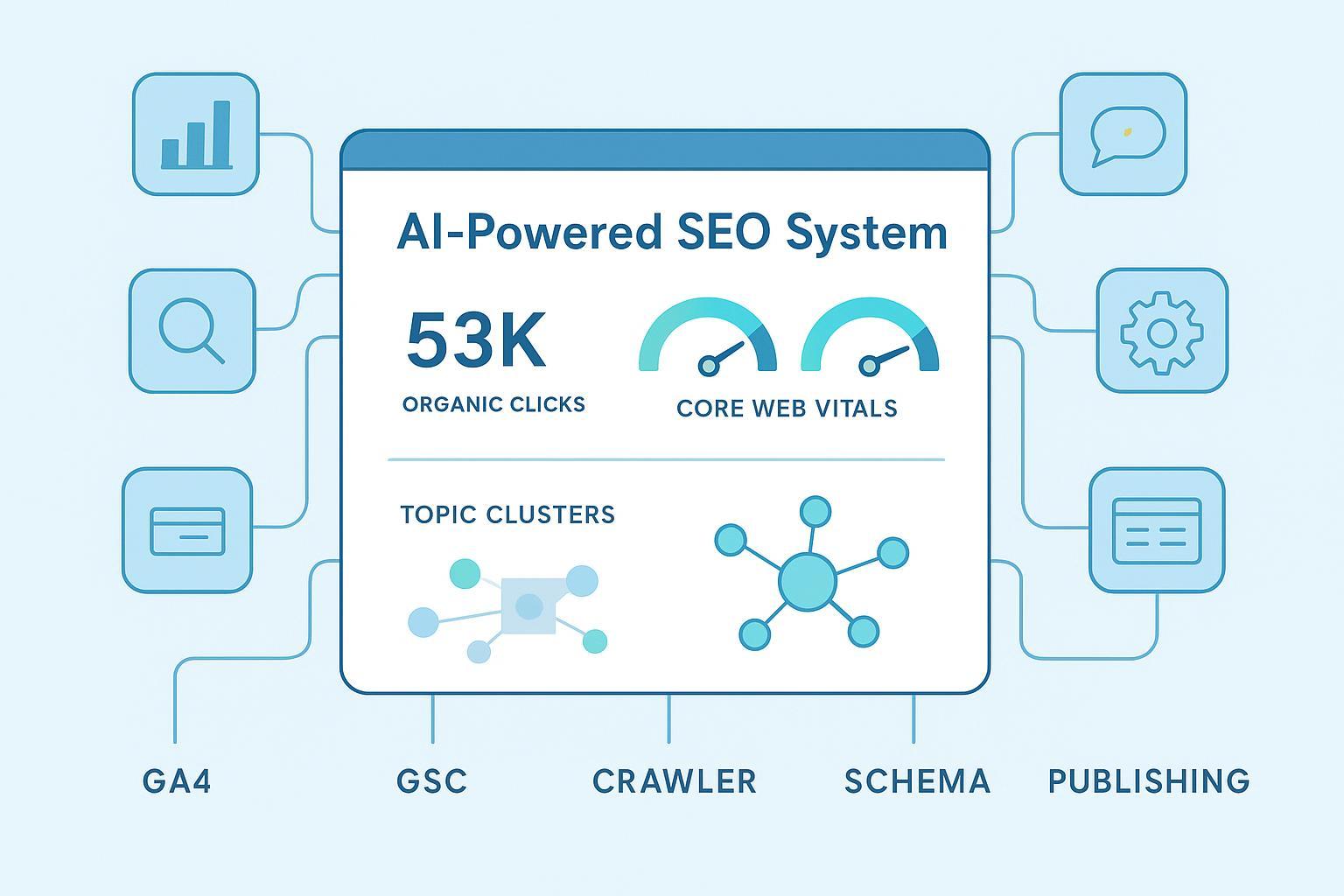

- Data sources: Connect Google Search Console, GA4, and your crawler. Add Core Web Vitals (CWV) lab/field sources and maintain a content inventory with metadata (author, publish/update dates, schema types, cluster IDs, internal links, E-E-A-T status).

- Environment: Ensure your CMS or hosted platform supports clean URLs, schema injection (JSON-LD), and a publishing API. Prepare a Looker Studio dashboard wired to GA4 and GSC with filters for clusters and templates.

- Governance: Define your editorial gate: required source citations for claims, an originality scan, an E-E-A-T checklist, and a change log for refreshes. Keep intent and entity notes inside each brief to avoid commodity content. Google evaluates content quality and intent, not just production method, so avoid scaled, low-value pages that violate its spam guidance: Google Search spam policies (incl. scaled content abuse).
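
The content inventory described above can be sketched as a small record type plus a hygiene check. This is a minimal illustration, not a prescribed schema: the field names, the `needs_attention` helper, and the 365-day staleness cutoff are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class InventoryRecord:
    """One row of the content inventory (field names are illustrative)."""
    url: str
    author: str
    published: date
    updated: date
    cluster_id: str
    schema_types: list[str] = field(default_factory=list)
    internal_links_in: int = 0
    eeat_reviewed: bool = False


def needs_attention(record: InventoryRecord, today: date,
                    max_age_days: int = 365) -> list[str]:
    """Return flags for a record that fails basic hygiene checks.

    max_age_days is an assumed refresh cutoff, not a published rule.
    """
    flags = []
    if (today - record.updated).days > max_age_days:
        flags.append("stale")
    if not record.schema_types:
        flags.append("no schema")
    if record.internal_links_in == 0:
        flags.append("orphan")
    if not record.eeat_reviewed:
        flags.append("E-E-A-T review missing")
    return flags
```

Running a check like this over the whole inventory gives you a first refresh queue before any dashboard exists.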

Build your stack step by step

Think in modular layers that work together. Each layer has minimum viable steps and acceptance criteria before anything ships.

1) Research and strategy

Cluster topics by intent and entities, then snapshot the SERP for your target queries to understand patterns: content types, answer formats, and gaps. Your acceptance test: every cluster defines a pillar page, 3–8 supporting articles, and an FAQ set mapped to People Also Ask queries; each page has a distinct primary intent with target entities and a unique angle stated in the brief.
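
The acceptance test above can be expressed as a simple check that runs before a cluster enters the calendar. The sketch below is one possible reading: the `Page` and `Cluster` types are hypothetical, and it assumes "distinct primary intent" means no two pages in the same cluster share an intent label.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Page:
    url: str
    primary_intent: str
    target_entities: list[str]
    unique_angle: str


@dataclass
class Cluster:
    cluster_id: str
    pillar: Optional[Page]
    supporting: list[Page] = field(default_factory=list)
    faq_questions: list[str] = field(default_factory=list)


def cluster_problems(cluster: Cluster) -> list[str]:
    """List every acceptance criterion the cluster fails (empty list = pass)."""
    problems = []
    if cluster.pillar is None:
        problems.append("missing pillar page")
    if not 3 <= len(cluster.supporting) <= 8:
        problems.append("needs 3 to 8 supporting articles")
    if not cluster.faq_questions:
        problems.append("missing FAQ set")

    pages = ([cluster.pillar] if cluster.pillar else []) + cluster.supporting
    # Assumption: intents are compared as normalized free-text labels.
    intents = [p.primary_intent.strip().lower() for p in pages]
    if len(set(intents)) != len(intents):
        problems.append("duplicate primary intent")
    for p in pages:
        if not p.target_entities or not p.unique_angle.strip():
            problems.append(f"incomplete brief: {p.url}")
    return problems
```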

For beginners needing a refresher on terminology, a concise primer on the relationship between keywords and topics helps set expectations.

2) Content planning

Translate clusters into an editorial calendar tied to funnel stages. Assign authors, list the sources each piece must cite, and specify which proprietary data, examples, or quotes will make the piece truly non-commodity. Include internal link targets so interlinking work is automatic at publish time.

3) Content creation and on-page optimization (AI-assisted, human-led)

Use AI to speed up ideation and drafting, but make editors accountable for accuracy, originality, and voice. Your brief should include audience, intent, target entities, outline with question-based headers, sources to cite, schema plan, and internal link targets. Require direct answers near the start of key sections (40–60 words) to support extraction by answer engines.

- Drafting guardrails: instruct the writer (human or AI) to cite sources for factual claims and avoid unsupported superlatives. Confirm title/H1 alignment, descriptive headings, alt text for images, and a validated JSON-LD schema block.

- Acceptance criteria: originality scan passes; facts verified with two sources; E-E-A-T checklist meets the threshold (e.g., ≥80/100); schema validates; internal links added; author bio and updated date present. For technical health, set CWV targets at the 75th percentile of page loads: LCP ≤ 2.5 s, INP ≤ 200 ms, CLS ≤ 0.1. See the current thresholds in Google’s documentation: Core Web Vitals thresholds and measurement.
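
If you collect raw field measurements yourself, the 75th-percentile check can be sketched as below. This is an illustration under assumptions: the metric keys and the `cwv_report` helper are made up for the example, a value exactly at the limit is treated as passing, and field tools that already report p75 values make the percentile step unnecessary.

```python
from statistics import quantiles

# Limits taken from the targets above; the key names are illustrative.
THRESHOLDS = {"lcp_ms": 2500, "inp_ms": 200, "cls": 0.1}


def p75(values: list[float]) -> float:
    """75th percentile of at least two samples.

    quantiles(n=4) returns the three quartile cut points; index 2 is Q3.
    """
    return quantiles(values, n=4, method="inclusive")[2]


def cwv_report(samples: dict[str, list[float]]) -> dict[str, bool]:
    """Map each metric to True when its p75 value is within the limit."""
    return {
        metric: p75(samples[metric]) <= limit
        for metric, limit in THRESHOLDS.items()
    }
```

Run it per template rather than per site so a slow template cannot hide behind a fast one.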

For teams adding AI to drafting workflows, an “AI blog writer” can help accelerate outlines and SERP-informed drafts while preserving editorial control. Explore a capability overview here: AI blog writer (feature overview).

4) Technical SEO automation

Automate the boring, catch the risky. Schedule weekly delta crawls and monthly full crawls to find broken links, canonical issues, orphan pages, and indexability problems. Validate structured data using the Rich Results Test and type-specific documentation from Google’s Search Gallery: Structured data Search Gallery (supported types). Keep sitemaps fresh and verified in Search Console. Remember: the official Indexing API is limited to pages with JobPosting or BroadcastEvent (embedded in a VideoObject) structured data. For everything else, rely on sitemaps and internal links for discovery, and on the URL Inspection tool/API for diagnostics.
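
Keeping sitemaps fresh is easy to automate from the content inventory. The sketch below builds a sitemap with the standard library only; `build_sitemap` and its input shape are assumptions for illustration, and a real job would also split files that exceed the sitemap protocol's 50,000-URL limit.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> str:
    """Return sitemap XML for (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod uses the W3C date format, e.g. 2025-01-02
        ET.SubElement(entry, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

Regenerating the file on every publish or refresh keeps `lastmod` honest, which matters more than regenerating on a fixed schedule.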

5) Internal linking system

Adopt a pillar/cluster structure. On each new publish, add at least two inward links from relevant existing pages, one link from the new page back to its pillar, and lateral links to related cluster peers where natural. Use a crawler to spot orphan pages and pages with missing or excessive links. Descriptive anchors beat generic “learn more” text every time. Think of internal links as the veins of your topical authority—if they’re clogged or missing, nothing flows.
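
The publish-time rules above are easy to verify against a link graph exported from your crawler. In this sketch the graph is assumed to be a plain mapping of source URL to the set of URLs it links to; the function names are hypothetical.

```python
def inbound_counts(links: dict[str, set[str]]) -> dict[str, int]:
    """Count inward links per URL, ignoring self-links."""
    counts: dict[str, int] = {}
    for source, targets in links.items():
        for target in targets:
            if target != source:
                counts[target] = counts.get(target, 0) + 1
    return counts


def find_orphans(all_urls: set[str], links: dict[str, set[str]]) -> set[str]:
    """URLs that no other page links to."""
    counts = inbound_counts(links)
    return {url for url in all_urls if counts.get(url, 0) == 0}


def new_page_link_problems(new_url: str, pillar_url: str,
                           links: dict[str, set[str]]) -> list[str]:
    """Check the two-inward-links and link-back-to-pillar rules."""
    problems = []
    if inbound_counts(links).get(new_url, 0) < 2:
        problems.append("fewer than two inward links")
    if pillar_url not in links.get(new_url, set()):
        problems.append("no link back to pillar")
    return problems
```

Lateral links to cluster peers are left out on purpose, since the text asks for them only "where natural" and that call belongs to an editor.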

Be ready for answer engines and AI Overviews

Answer engines and AI Overviews favor content with clear definitions, stepwise instructions, concise direct answers, and citations. Structure sections so a reader can get a 40–60 word answer up top, followed by details and examples. Where appropriate, use supported structured data (Article, HowTo, FAQPage—mind eligibility changes) and include Organization/Person schema for publisher and author.
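
As a rough sketch of the structured data mentioned above, the helper below assembles an Article block with Person and Organization entities using standard schema.org properties. The function names and the chosen set of properties are assumptions; check the type-specific documentation for what your page type actually requires, and validate the output before shipping.

```python
import json


def article_jsonld(headline: str, url: str, author_name: str,
                   publisher_name: str, date_published: str,
                   date_modified: str) -> str:
    """Build a JSON-LD Article block; dates are ISO 8601 strings."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": date_published,
        "dateModified": date_modified,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD for injection into the page head.

    Escaping "</" keeps a stray "</script>" inside a string value
    from closing the tag early; "\\/" is a valid JSON escape for "/".
    """
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">{safe}</script>'
```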

For a practical, current playbook on structuring sections and FAQs for answer visibility, see this industry resource: Search Engine Journal’s step-by-step AEO guide (2024). It complements Google’s emphasis on people-first, technically sound pages.

Measurement reality: There’s no official Search Console filter for AI Overviews. Build a proxy tracker: maintain a list of queries known to trigger AI summaries, perform spot checks on a cadence, and analyze GSC performance for those cohorts. Treat observed inclusions as directional, not definitive KPIs.
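
One way to build the proxy tracker is to split a Search Console query export into the AI-summary cohort and everything else, then compare the two. The sketch assumes each exported row is a dict with `query`, `clicks`, and `impressions` keys; that shape and the `cohort_summary` name are illustrative, not an official export format.

```python
def cohort_summary(rows: list[dict], cohort_queries: set[str]) -> dict:
    """Aggregate clicks, impressions, and CTR for cohort vs. other queries."""
    cohort = {q.strip().lower() for q in cohort_queries}
    totals = {
        "cohort": {"clicks": 0, "impressions": 0},
        "other": {"clicks": 0, "impressions": 0},
    }
    for row in rows:
        key = "cohort" if row["query"].strip().lower() in cohort else "other"
        totals[key]["clicks"] += row["clicks"]
        totals[key]["impressions"] += row["impressions"]
    for stats in totals.values():
        impressions = stats["impressions"]
        stats["ctr"] = stats["clicks"] / impressions if impressions else 0.0
    return totals
```

A widening CTR gap between the two groups is a hint worth a manual spot check, not proof of inclusion or exclusion.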

Governance and E-E-A-T in practice

Make “human-in-the-loop” non-negotiable. Editors should verify facts, check for original insight, confirm citations, and approve the E-E-A-T checklist before publication. Keep a brief note on pages where substantial AI assistance was used if that aligns with your transparency policy. Maintain change logs for content refreshes and schema updates.

To operationalize the editorial gate, many teams adopt a standardized checklist and quality scoring before publish. A dedicated review against Experience, Expertise, Authoritativeness, and Trustworthiness reduces errors and prevents thin or duplicative outputs. For teams that prefer a structured review aid, see: AI E-E-A-T Checker (overview).

Practical workflow example (neutral)

Disclosure: QuickCreator is our product.

Here’s a reproducible micro-workflow you can mirror with your preferred stack:

- Create a brief from SERP patterns and entity targets, including question-based headers and a schema plan. Draft an outline and request citations for any statistics or definitions.

- Generate a first draft with cited claims; require direct answers near section starts and ensure the piece promises a unique angle not found in current top results.

- Run your editorial gate: originality scan, fact check against two sources, and an E-E-A-T review. Validate the JSON-LD block and add internal links to and from relevant cluster pages.

- Publish via your CMS or API and schedule a follow-up internal link pass from high-traffic evergreen pages.
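
The editorial gate in the third step can be encoded so that nothing reaches the publish step with an open failure. This is a minimal sketch: the `DraftReview` fields are hypothetical stand-ins for whatever your originality scanner, fact-check log, and E-E-A-T checklist produce, and the defaults mirror the two-source and 80/100 examples used earlier.

```python
from dataclasses import dataclass


@dataclass
class DraftReview:
    originality_passed: bool
    sources_per_claim: list[int]  # number of sources found for each claim
    eeat_score: int               # 0-100 checklist score
    schema_valid: bool
    internal_links_added: bool


def gate_failures(review: DraftReview, min_sources: int = 2,
                  min_eeat: int = 80) -> list[str]:
    """Return the reasons a draft cannot ship (empty list = cleared)."""
    failures = []
    if not review.originality_passed:
        failures.append("originality scan failed")
    if any(n < min_sources for n in review.sources_per_claim):
        failures.append(f"claim with fewer than {min_sources} sources")
    if review.eeat_score < min_eeat:
        failures.append(f"E-E-A-T score {review.eeat_score} below {min_eeat}")
    if not review.schema_valid:
        failures.append("JSON-LD did not validate")
    if not review.internal_links_added:
        failures.append("internal links missing")
    return failures
```

Returning reasons instead of a single pass/fail flag gives the editor a to-do list and gives you data on which gate fails most often.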

Teams using an AI blog platform can streamline parts of this flow—drafting with citations and a systematic E-E-A-T pass—while keeping human review in control.

KPIs, dashboards, and operating cadence

You don’t need a massive team to run this. A realistic rhythm:

- Weekly (90–120 minutes): SERP/intent drift check for top clusters; produce/optimize/publish 1–3 pieces; add internal links; pick 1–3 URLs for refresh.

- Monthly (2–4 hours): Full crawl, fix technical issues, validate schema; review CWV trends; run a topic coverage gap analysis.

- Quarterly (4–8 hours): Re-cluster entities and content; prune/merge underperformers; refresh templates and schema; reset the roadmap to match results.

Below is a compact KPI starter set. Use cluster IDs and schema types as dimensions in Looker Studio for segmented views.

| KPI category | Metric | Target/Notes |
|---|---|---|
| Outcome | Non-brand organic clicks | Up-and-to-the-right vs. baseline; tie to conversion trends |
| Outcome | Conversions from organic | Form fills, trials, revenue; use GA4 conversion definitions |
| Visibility | Share of voice on target entities/topics | Cohort-based tracking across clusters |
| Visibility | Top 3/10 rankings and impressions | Watch for intent drift and cannibalization |
| Quality | E-E-A-T checklist pass rate | ≥80% of pages pass before publish |
| Quality | Factual error rate per 1,000 words | Trending toward zero; investigate misses |
| Technical | CWV pass rate (LCP/INP/CLS) | 75th-percentile values meet thresholds, by template |
| Operational | Cycle time (brief → publish) | Trend down; keep quality gate intact |
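
Three of the KPIs in the table reduce to simple arithmetic, sketched below with hypothetical helper names. The 80-point pass threshold is carried over from the checklist example earlier in this guide, and using the median for cycle time is an assumption, chosen so one slow piece does not skew the trend.

```python
from datetime import date
from statistics import median


def eeat_pass_rate(scores: list[int], threshold: int = 80) -> float:
    """Share of pages whose checklist score meets the threshold."""
    if not scores:
        return 0.0
    return sum(score >= threshold for score in scores) / len(scores)


def errors_per_1000_words(error_count: int, word_count: int) -> float:
    """Factual error rate normalized to 1,000 words."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return error_count * 1000 / word_count


def median_cycle_days(pairs: list[tuple[date, date]]) -> float:
    """Median days from brief to publish for (briefed, published) pairs."""
    return median((published - briefed).days for briefed, published in pairs)
```

For example, 3 confirmed errors across 6,000 published words works out to 0.5 errors per 1,000 words.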

For step-by-step structured data implementation and validation, rely on Google’s canonical documentation in the Search Gallery; it’s the most reliable source when templates evolve.

Troubleshooting: fix issues before they compound

- Thin or duplicative drafts: Strengthen the brief. Enforce a unique angle, add proprietary data or SME quotes, and require citations for claims. If originality and value aren’t clear, don’t publish.

- Indexing delays: Check robots and meta tags, internal links, sitemap freshness, and the URL Inspection tool. Avoid Indexing API hacks outside official scope.

- CWV regressions: Investigate template-level issues. Optimize render paths (critical CSS, preconnect/preload), reduce main-thread work, and prevent layout shifts (explicit dimensions, font strategies).

- Internal link gaps: Run crawler reports for orphans; add contextual links from high-traffic evergreen pages; review pillars monthly to incorporate new cluster links.

- AEO visibility stalls: Put concise answers at the start of sections, tighten definitions, clarify entities (Organization/Person schema), validate structured data, and expand related questions coverage.
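
For the last item, a quick audit can flag sections that do not open with a direct answer in the 40–60 word range recommended earlier. The sketch assumes each section arrives as plain text with paragraphs separated by blank lines and treats the first paragraph as the answer; `direct_answer_ok` is a hypothetical helper, and it checks length only, not whether the answer is any good.

```python
import re


def direct_answer_ok(section_text: str, low: int = 40, high: int = 60) -> bool:
    """True when the section's first paragraph is low..high words long."""
    # Split once on the first blank line; everything before it is the answer.
    first_paragraph = re.split(r"\n\s*\n", section_text.strip(), maxsplit=1)[0]
    return low <= len(first_paragraph.split()) <= high
```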

Next steps

If you’re ready to formalize this system, start with the foundations and one cluster. Build the research → brief → draft → editorial gate → publish → interlink loop, then add technical automation and AEO tracking. If you want an all-in-one place to run the workflow with AI assistance and human oversight, you can explore our platform to see whether it fits your stack.