How to Use AI Data to Improve GEO (Generative Engine Optimization) — with notes on geo experiments

GEO gets thrown around in two very different conversations. Some marketers mean Generative Engine Optimization—getting your content cited inside AI answers from Google, Perplexity, Copilot, or ChatGPT. Others use GEO for geographic experiments that measure incremental lift by region. This guide prioritizes Generative Engine Optimization and, at the end, includes a short primer on geo lift tests so you aren’t talking past a stakeholder.

According to Search Engine Land’s primer, GEO is about being included and cited in AI-generated answers rather than only ranking in blue links, which makes it an expansion of classic SEO into answer engines. For framing and examples, see Search Engine Land’s GEO explainer.

The AI data that actually moves GEO

To raise your odds of being cited, you need specific data sets and a predictable cadence. Think of it like building an evidence-driven newsroom.

- Question universe and intents: a prioritized list of real questions you want to be cited for (from People Also Ask, forums, on-site search, support/chat logs) clustered by topic and intent.

- Answer-engine visibility logs: weekly or biweekly checks across Google’s AI features, Perplexity, Copilot/Bing, and ChatGPT (with browsing), capturing whether and where your site is cited, with screenshots.

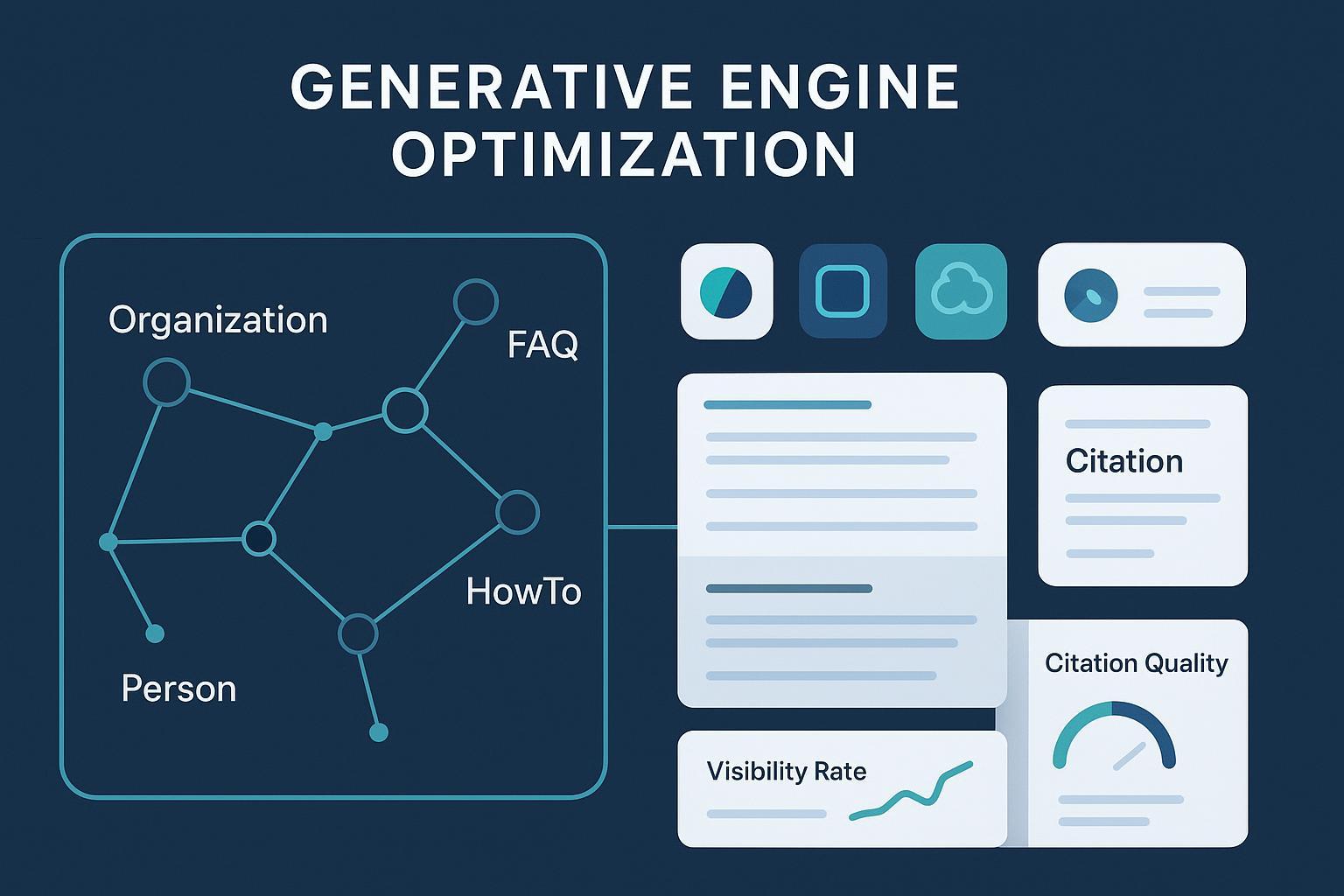

- Entity and source graph signals: clear Organization/Person identity, author pages, expert profiles, and structured data (Organization, Person, Article, FAQPage, HowTo, Product) plus authoritative sameAs links.

- Evidence inventory and provenance: first-party studies with methodology, third-party primary sources, last-updated timestamps, version IDs, and public changelogs.

- Freshness and crawl eligibility: accurate sitemaps with lastmod, visible “last updated,” and bot controls that match your policies.

| Data set | Where it comes from | How you’ll use it |
|---|---|---|
| Question universe & intents | PAA/related searches, forums, on-site search, support/sales logs, embedding expansion | Map questions to canonical pages, uncover gaps, draft FAQs/HowTos |
| Answer-engine visibility logs | Manual checks or trackers across Google AI features, Perplexity, Copilot/Bing, ChatGPT browsing | Measure citations, capture competitors, trigger content refreshes |
| Entity & source graph signals | Author/org pages, JSON-LD (Organization, Person, Article, FAQPage, HowTo, Product), sameAs | Reduce ambiguity, strengthen credibility, enable “copy-and-cite” sections |
| Evidence & provenance | First-party studies, primary sources, change logs, versioning | Bind claims to auditable proof; keep content trustworthy and current |
| Freshness & eligibility | XML sitemaps with precise lastmod, visible “last updated,” robots/allowlists | Speed recrawl, control access, ensure engines can reach what you want cited |
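To make the "entity and source graph signals" row concrete, here is a minimal sketch of generating Article JSON-LD with a nested Person and Organization. The function name, the input dictionaries, and every example.com URL are hypothetical placeholders; the property names (headline, dateModified, author, publisher, sameAs) come from schema.org, but check which ones your page type actually needs.

```python
import json


def build_article_jsonld(headline, url, date_modified, author, organization):
    """Return a schema.org Article as a JSON-LD string.

    `author` and `organization` are plain dicts with hypothetical keys
    (name, url, same_as) chosen for this sketch.
    """
    doc = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "dateModified": date_modified,
        "author": {
            "@type": "Person",
            "name": author["name"],
            "url": author["url"],
            "sameAs": author["same_as"],
        },
        "publisher": {
            "@type": "Organization",
            "name": organization["name"],
            "url": organization["url"],
            "sameAs": organization["same_as"],
        },
    }
    return json.dumps(doc, indent=2)


# Placeholder values for illustration only.
example_jsonld = build_article_jsonld(
    headline="What is Generative Engine Optimization?",
    url="https://example.com/guides/geo",
    date_modified="2025-01-10",
    author={
        "name": "Jane Example",
        "url": "https://example.com/authors/jane-example",
        "same_as": ["https://www.wikidata.org/wiki/Q0"],
    },
    organization={
        "name": "Example Co",
        "url": "https://example.com",
        "same_as": ["https://www.linkedin.com/company/example-co"],
    },
)
print(example_jsonld)
```

The output would go inside a `<script type="application/ld+json">` tag on the page. Generating it from one source of truth for author and organization data is one way to keep names and sameAs links consistent across pages.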

A repeatable GEO workflow (run weekly or biweekly)

Here’s a pragmatic SOP you can operate with a small team. Time boxes assume 50–150 priority questions.

1. Build and cluster your question set (2–4 hours)

   - Combine PAA/related searches with first-party logs. Use embeddings to cluster by intent and entity. Assign each cluster an owning page and “supporting” subtopics.

2. Check answer engines on a fixed day (2–3 hours)

   - Google: where available, check AI Overviews/AI Mode and record whether an AI answer appears and which sources are cited. Google’s site-owner documentation for AI features explains general behavior and eligibility: Google’s AI features for site owners.

   - Perplexity: run each prompt, record numbered citations and domains. Their docs outline bots/crawlers and search modes: Perplexity search quickstart.

   - Copilot/Bing: capture the answer card and citations. While Microsoft hasn’t published a single “citation eligibility” guide, Bing has shared guidance on features like generative captions; accurate sitemaps matter: Bing on lastmod importance (2023).

   - ChatGPT with browsing/Search: run the prompt, note any surfaced URLs. OpenAI explains how publishers can control crawling: OpenAI’s publisher FAQ on GPTBot and OAI-SearchBot.

3. Log rigorously (1–2 hours)

   - For each query × engine: store prompt text, timestamp, whether your domain is cited, cited URLs, citation position, competitors cited, and a screenshot path. Keep filenames standardized: YYYY-MM-DD_engine_queryID.png.

4. Diff and prioritize (1 hour)

   - Compare to last week: where did visibility rise or fall? Flag clusters with losses for refresh.

5. Refresh for “copy-and-cite” (2–4 hours)

   - Add a concise definition box, a numbered HowTo, and a sourced FAQ. Bind claims to first-party data or primary sources. Ensure on-page “last updated” is visible and factually true.

6. Strengthen identity and structure (1–2 hours)

   - Add/validate JSON-LD for Organization, Person, Article, FAQPage/HowTo/Product as appropriate. Validate with Google’s Rich Results Test and confirm URL status in Search Console. Start with the schema intro and gallery: Google’s intro to structured data and the Search Gallery.

7. Ship and surface freshness (30–45 minutes)

   - Update XML sitemaps with precise lastmod. Consider a public changelog feed and an on-page “Updated on” stamp. Google’s guide is useful: Build sitemaps the Google way.

8. Re-measure next cycle (30–45 minutes)

   - Repeat step 2. Track progress and annotate major content changes.
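The "Log rigorously" and "Diff and prioritize" steps above can be sketched in a few lines. This is one possible shape, not a prescribed schema: the `VisibilityRecord` class, its field names, and the sample query IDs are all hypothetical, and the sketch stores a date where the text asks for a timestamp. The filename follows the YYYY-MM-DD_engine_queryID.png convention from the logging step.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class VisibilityRecord:
    """One row per query x engine check (field names are illustrative)."""
    query_id: str
    engine: str
    prompt: str
    checked_on: date
    cited: bool
    cited_urls: List[str] = field(default_factory=list)
    citation_position: Optional[int] = None
    competitors: List[str] = field(default_factory=list)

    def screenshot_name(self) -> str:
        # YYYY-MM-DD_engine_queryID.png
        return f"{self.checked_on.isoformat()}_{self.engine}_{self.query_id}.png"


def diff_visibility(previous, current):
    """Return (gains, losses) as lists of (query_id, engine) keys.

    A pair missing from the previous cycle is treated as "not cited",
    so a newly tracked query that is cited counts as a gain.
    """
    was_cited = {(r.query_id, r.engine): r.cited for r in previous}
    gains, losses = [], []
    for record in current:
        key = (record.query_id, record.engine)
        before = was_cited.get(key, False)
        if record.cited and not before:
            gains.append(key)
        elif before and not record.cited:
            losses.append(key)
    return gains, losses


# Made-up records for two consecutive weekly checks.
last_week = [
    VisibilityRecord("q001", "perplexity", "what is geo", date(2025, 1, 6), True),
    VisibilityRecord("q002", "google", "how to add json-ld", date(2025, 1, 6), False),
]
this_week = [
    VisibilityRecord("q001", "perplexity", "what is geo", date(2025, 1, 13), False),
    VisibilityRecord("q002", "google", "how to add json-ld", date(2025, 1, 13), True),
]

gains, losses = diff_visibility(last_week, this_week)
print(this_week[0].screenshot_name())
print("gains:", gains, "losses:", losses)
```

Keying on (query_id, engine) means a loss on one engine is flagged even if the same question still earns a citation elsewhere, which keeps refresh decisions engine-specific.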

Guardrails to keep quality high

- Require citations to primary or highly authoritative sources and show dates. Avoid weasel words. Keep screenshots for auditability.

- Respect robots.txt and platform terms. If a crawler controversy affects you (for example, Cloudflare alleged undeclared crawling related to Perplexity in 2025), verify bots and IPs server-side and adjust policies accordingly: Cloudflare’s 2025 post on stealth crawling claims.

Technical foundation: schema, sitemaps, and crawl controls

- Structured data: Use JSON-LD for Organization, Person, and Article on editorial content; FAQPage/HowTo where the content truly fits; Product on product pages. Validation helps catch mistakes but doesn’t guarantee features. Begin here: Google’s intro to structured data.

- Sitemaps and freshness: Keep XML sitemaps accurate and lastmod precise; it helps both Google and Bing discover updates. Guidance: Build sitemaps the Google way.

- Identity consistency: Align author names, bios, and sameAs links (e.g., Wikipedia/Wikidata, social, Crunchbase). Use consistent brand naming across site and profiles.

- Bot controls and eligibility:

  - OpenAI (GPTBot/OAI-SearchBot): controllable via robots.txt and supported headers; see OpenAI’s publisher FAQ.

  - Microsoft/Bing: ensure Bingbot access; consult Bing Webmaster resources and quality guidelines; their post on lastmod underscores freshness signals: Bing on lastmod importance (2023).

  - Perplexity: review their bot/search docs and decide your allow/deny stance: Perplexity search quickstart.
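Before shipping a robots.txt change, it can help to check what the file actually permits for each crawler. The sketch below uses Python's standard `urllib.robotparser` against an inline sample policy. The policy itself is invented for illustration, and `PerplexityBot` is assumed to be the user-agent token Perplexity documents; confirm each vendor's current agent names before relying on this.

```python
from urllib.robotparser import RobotFileParser

# Sample policy for illustration only; not a recommendation.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /private/
"""


def crawl_policy(robots_txt, url, agents):
    """Map each user-agent token to whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


policy = crawl_policy(
    ROBOTS_TXT,
    "https://example.com/guides/geo",
    ["GPTBot", "OAI-SearchBot", "PerplexityBot", "Bingbot"],
)
for agent, allowed in policy.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

This only tells you what the file declares. Whether a given crawler honors it is a separate question, which is why the guardrails above suggest verifying bots and IPs server-side.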

AI/ML workflows that raise your citation odds

- Query expansion and clustering: build embeddings for your question set; cluster by intent/entity to uncover gaps and route queries to the right canonical pages.

- LLM-assisted drafting with guardrails: prompt templates should enforce citations to diverse, recent, primary sources. Require a references block with URLs for editorial review.

- Entity disambiguation and schema enrichment: apply NER and link entities to canonical IDs (e.g., Wikidata). Reflect them via sameAs/identifier in JSON-LD.

- Evaluation loops (LLM-as-judge + human QA): score drafts for accuracy, coverage, helpfulness, and citation quality. Use models to pre-screen, humans to finalize. Track scores over time.

- Internal linking via entity graphs: use vector similarity to connect related pages and consolidate topical authority. Think of it like laying clear roads so answer engines can follow context.
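As a rough illustration of the clustering idea above, the sketch below groups questions by cosine similarity using a greedy single-pass rule. Two things are assumptions: the three-dimensional vectors are hand-made stand-ins for real embeddings (which you would get from whatever embedding model you use), and greedy threshold clustering is a deliberately simple substitute for a proper algorithm such as k-means or hierarchical clustering.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def cluster_questions(embeddings, threshold=0.8):
    """Greedy clustering: each question joins the first cluster whose seed
    vector is at least `threshold` similar, otherwise it starts a new one."""
    clusters = []  # list of (seed_vector, member_questions)
    for question, vector in embeddings.items():
        for seed, members in clusters:
            if cosine(seed, vector) >= threshold:
                members.append(question)
                break
        else:
            clusters.append((vector, [question]))
    return [members for _, members in clusters]


# Toy vectors standing in for real embeddings.
toy_embeddings = {
    "what is geo": [0.9, 0.1, 0.0],
    "generative engine optimization definition": [0.8, 0.2, 0.1],
    "how to add json-ld": [0.1, 0.9, 0.1],
    "validate structured data": [0.0, 0.8, 0.3],
}

clusters = cluster_questions(toy_embeddings)
for members in clusters:
    print(members)
```

The same `cosine` function can rank candidate internal links: for each page, link to the few other pages with the highest similarity. The threshold of 0.8 is arbitrary and should be tuned on your own question set.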

Measurement that proves GEO is working

Define a small set of metrics you can maintain every week and report monthly.

- AI-Generated Visibility Rate (AIGVR): (AI responses with your citation or mention) / (responses checked) × 100. Directionally, brands new to GEO often start below 5%, while established authorities can reach double digits; validate locally before setting targets. For context on answer engines and tracking, see Search Engine Land on answer engines and models.

- Citation Quality Score (0–100): weight for source type (primary/government/peer-reviewed), recency, and link presence. Require screenshots and URLs as evidence.

- Prominence/Placement Index: where your citation appears in the panel, number of citations, and whether your brand is quoted verbatim.

- Conversational Engagement Rate (CER): (clicks on AI-cited links to your site) / (AI answer displays with your citation) × 100, measured where link-outs are visible (e.g., Perplexity). Expect lower CTR than classic SERPs; monitor multi-touch outcomes.

- Downstream proxies: branded search lift (Search Console), identifiable referral sessions (e.g., from Perplexity), and assisted conversions in GA4.
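The two rate metrics above are simple ratios, and a small helper keeps the arithmetic consistent across reports. The AIGVR and CER functions follow the formulas as written. The Citation Quality Score function is only one possible scoring scheme: the point split (50 for source type, 30 for recency, 20 for link presence), the one-year recency decay, and the `secondary` category are assumptions made for this sketch, since the text names the factors but not their weights.

```python
def aigvr(responses_with_citation, responses_checked):
    """AI-Generated Visibility Rate, as a percentage."""
    if responses_checked == 0:
        return 0.0
    return responses_with_citation / responses_checked * 100


def cer(clicks, displays_with_citation):
    """Conversational Engagement Rate, as a percentage."""
    if displays_with_citation == 0:
        return 0.0
    return clicks / displays_with_citation * 100


# Assumed point values; adjust to your own rubric.
SOURCE_POINTS = {"primary": 50, "government": 50, "peer_reviewed": 50, "secondary": 25}


def citation_quality(source_type, age_days, has_link):
    """Score 0-100 from source type, recency, and link presence (assumed weights)."""
    source = SOURCE_POINTS.get(source_type, 0)
    recency = 30 * max(0.0, 1 - age_days / 365)  # linear decay over one year
    link = 20 if has_link else 0
    return source + recency + link


# Made-up counts for illustration.
print(f"AIGVR: {aigvr(6, 120):.1f}%")
print(f"CER: {cer(9, 300):.1f}%")
print(f"Quality (fresh primary source with link): {citation_quality('primary', 0, True):.0f}")
```

With 6 cited responses out of 120 checked, AIGVR is 5%; with 9 clicks on 300 cited displays, CER is 3%. Guarding against a zero denominator matters in early cycles, when some engines may show no AI answers at all for your question set.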

Dashboards and cadence

- Weekly: AIGVR, citation counts by engine, prominence, top gains/losses with screenshots.

- Monthly: trend lines by cluster, refresh impact notes, experiments run.

- For context on CTR shifts as Google’s AI features roll out, see Dataslayer’s commentary on AI Overviews and CTR (2025).

Troubleshooting: quick diagnoses and fixes

- Low citations despite strong rankings

  - Likely issues: entity ambiguity, thin/derivative content, stale pages, poor semantic coverage.

  - Fixes: add Organization/Person JSON-LD with authoritative sameAs links; add unique evidence and examples; refresh with truthful “last updated”; expand FAQ/HowTo sections and consolidate duplicates.

- Perplexity prefers a competitor

  - Likely issues: their content is fresher, clearer, or cites stronger primary sources.

  - Fixes: audit the exact cited URL; add definition boxes and step lists; cite primary sources explicitly; use schema that matches the content type.

- Misattributed brand or author

  - Likely issues: inconsistent bylines or brand naming; missing Person/Organization markup.

  - Fixes: tighten identity, align bylines and bios, add sameAs links, verify canonical URLs.

- Sudden drop in Google AI citations

  - Likely issues: recent update interactions, schema errors, sitemap lastmod inaccuracies.

  - Fixes: validate with Rich Results Test, check sitemaps, run a focused refresh, and re-measure in a week.
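For the "check sitemaps" fix, a quick structural audit can catch the most obvious lastmod problems. The sketch below parses a sitemap with Python's standard XML library and flags entries whose lastmod is missing, malformed, or dated in the future. The sample sitemap and URLs are invented, and the check cannot tell whether a well-formed lastmod is actually true; that still requires comparing against your CMS or changelog.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

SAMPLE_SITEMAP = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/a</loc><lastmod>2025-01-10</lastmod></url>
  <url><loc>https://example.com/b</loc></url>
  <url><loc>https://example.com/c</loc><lastmod>2031-01-01T00:00:00+00:00</lastmod></url>
</urlset>
"""


def audit_lastmod(sitemap_xml, today):
    """Return (url, problem) pairs for missing, malformed, or future lastmod."""
    problems = []
    root = ET.fromstring(sitemap_xml)
    for entry in root.findall("sm:url", NS):
        loc = entry.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = entry.findtext("sm:lastmod", default="", namespaces=NS).strip()
        if not lastmod:
            problems.append((loc, "missing lastmod"))
            continue
        try:
            # Only the date part is compared; any time component is ignored.
            modified = date.fromisoformat(lastmod[:10])
        except ValueError:
            problems.append((loc, "malformed lastmod"))
            continue
        if modified > today:
            problems.append((loc, "lastmod in the future"))
    return problems


problems = audit_lastmod(SAMPLE_SITEMAP, today=date(2025, 2, 1))
for url, problem in problems:
    print(url, "->", problem)
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, makes the audit reproducible when you rerun it against an archived sitemap.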

Appendix: GEO can also mean geo experiments (geo lift)

If your stakeholder actually meant geographic experiments, here’s the 60-second version.

- What they are: region-level tests assigning treatment/control to estimate incremental lift when user-level experiments aren’t feasible. A concise industry primer makes the case for randomized geo tests as a gold standard: AdExchanger’s 2025 perspective.

- Modern methods: synthetic control and Bayesian synthetic control. For a practitioner walk-through and method docs, see Eppo’s Geolift documentation (2024).

- Setup and pitfalls: choose comparable regions, collect a stable pre-period, and watch for confounders like seasonality and local events. For a pragmatic explainer with examples, Wayfair’s tech blog is helpful: How Wayfair uses geo experiments to measure incrementality (2023).
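To show the basic arithmetic behind a geo test, here is a difference-in-differences sketch. Note that this is a simpler technique than the synthetic control methods named above: it assumes the treatment and control regions would have moved in parallel without the intervention, which is exactly the assumption that confounders like seasonality and local events can break. All numbers are made up.

```python
def mean(values):
    return sum(values) / len(values)


def diff_in_diff(treat_pre, treat_post, control_pre, control_post):
    """Incremental lift = (change in treatment regions) - (change in control regions)."""
    treatment_change = mean(treat_post) - mean(treat_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treatment_change - control_change


# Hypothetical weekly conversions per region group, before and after the change.
lift = diff_in_diff(
    treat_pre=[100, 102, 98],
    treat_post=[115, 117, 113],
    control_pre=[90, 92, 88],
    control_post=[95, 97, 93],
)
print(f"Estimated incremental lift: {lift:.1f} conversions per week")
```

Here the treatment regions rose by 15 and the control regions by 5, so the estimated lift is 10 per week. A production analysis would add uncertainty estimates and a longer pre-period, which is where the tools referenced above come in.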

Your 30–60–90 day action plan

- Days 1–30: Build the question universe; stand up the manual logging sheet; add Organization/Person/Article schema to the top 10 pages; expose last updated; fix the sitemap lastmod.

- Days 31–60: Run two measurement cycles; refresh the top five pages using “copy-and-cite” patterns; add FAQ/HowTo schema where it fits; begin entity linking to Wikidata and authoritative profiles.

- Days 61–90: Automate parts of visibility checks; formalize the evaluation rubric; expand to 150–300 questions; pilot one geo experiment if you need causal validation for a big content refresh.

Keep it tight, measured, and auditable. If you do the boring things right—identity, evidence, structure, and cadence—your odds of being quoted by answer engines go up steadily.