How to Build a GEO Content Calendar

When AI answers are the front door to discovery, a generic editorial calendar won’t cut it. You need a GEO-ready system that plans for answer engines, not just blue links. This guide gives you a replicable framework—fields, workflows, governance, and metrics—to build a GEO (Generative Engine Optimization) content calendar that scales across regions.

What GEO means (and what it doesn’t)

GEO is the practice of structuring and evidencing your content so AI-powered answers can accurately cite it. Think of it like stocking a well-labeled pantry for a chef: if your ingredients (claims, data, entities) are clean and easy to grab, the engine can compose better dishes and give you credit.

To avoid confusion: GEO here means Generative Engine Optimization, not location-based geo-targeting. It sits alongside SEO and AEO (Answer Engine Optimization). SEO focuses on ranking in classic SERPs; AEO targets concise answers for featured snippets and voice assistants; GEO emphasizes clarity, evidence, and attribution in AI-generated summaries. For context, see Search Engine Land’s overview of what GEO is and why it matters (2024) and Google’s explanation of AI features and your site, which notes that AI Overviews draw on the same core ranking systems and cite multiple sources.

Inputs and prerequisites

Before building the calendar, confirm these foundations:

- Regional personas and priority questions by market

- Locale-level question/keyword research and competitor citations in AI answers

- Existing content audit by region; note freshness and technical health

- Evidence inventory (original data, SMEs, third-party sources) and authorship/E‑E‑A‑T plan

Tip: Capture gaps by locale—not just topics, but missing formats (definitions, checklists, glossaries) that answer engines love to quote.

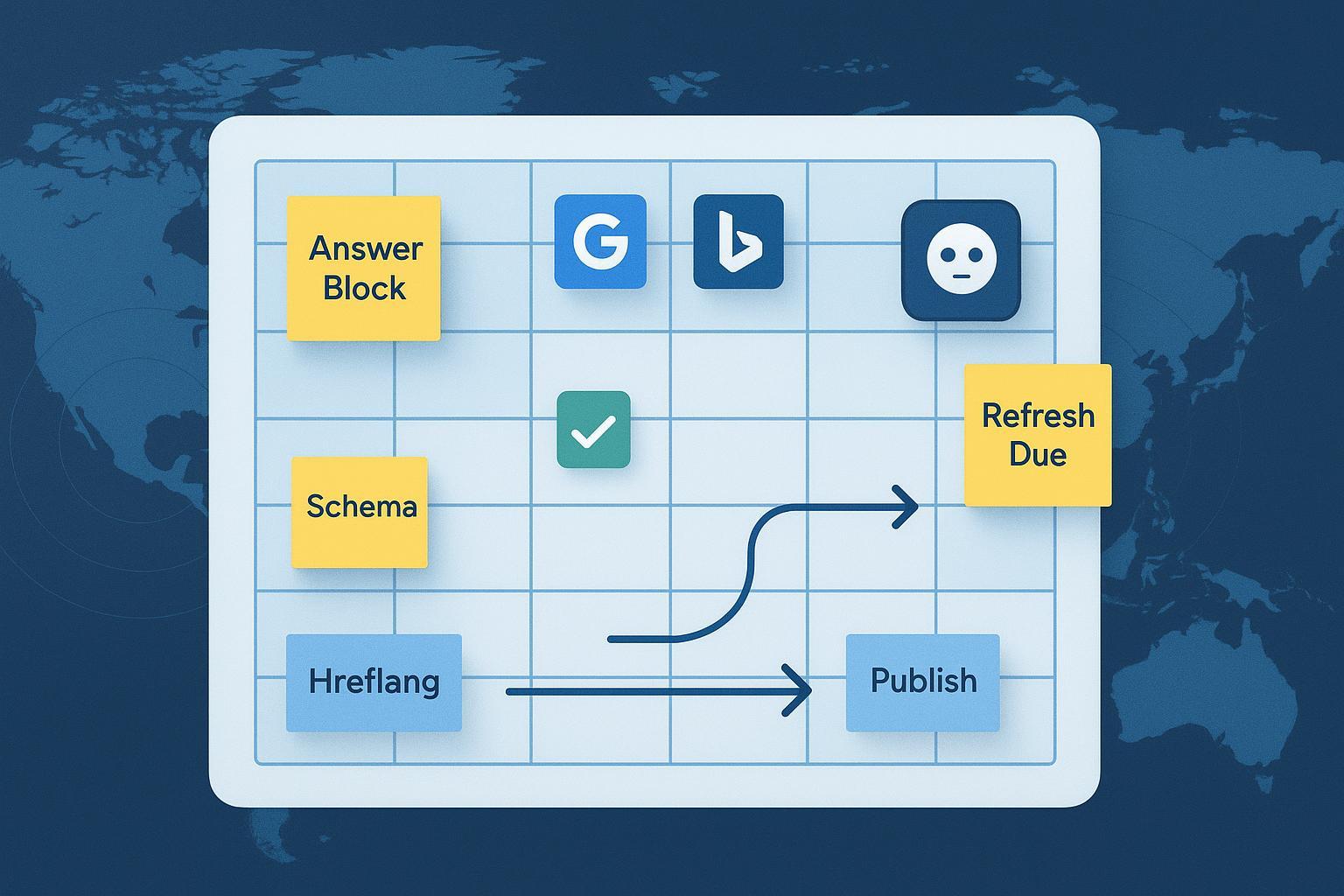

The calendar schema (fields you actually need)

A practical GEO calendar combines standard editorial logistics with GEO/AIO elements and international metadata. Start with these field groups.

| Group | Representative fields | Why it matters |
|---|---|---|
| Logistics & governance | Title; Content type; Owner; Contributors; Approvers; Status (idea → briefed → drafting → editing → localized → legal → scheduled → published); Publish date/time (local TZ); Version; Asset links; Dependencies; RACI | Creates accountability, clear gates, and realistic timelines. See Search Engine Land’s content calendar fundamentals. |
| GEO/AIO planning | Primary user question(s) per locale; 100–200-word answer block; FAQ set; Target entities/definitions; Evidence/citations; Author and reviewer notes; Planned structured data (Article, Organization, Product, QAPage); Last refreshed; Next refresh due | Makes your content quotable, attributable, and current for AI systems. Google documents its AI features but offers no “opt‑in” markup; strong, clear content wins. |
| International/locale | Market (country/region); Language/locale (e.g., en-US, fr-CA); Currency; Units; Local holidays/cultural hooks; Legal/compliance notes; Hreflang/canonical plan; Intended URL/slug pattern | Aligns with user intent and avoids duplication. Plan hreflang/canonical targets per Google’s canonicalization guidance. |
| Distribution & adaptation | Channels per region (site, search, social, email, paid); Localized variants for copy/assets; Partner/PR hooks; Repurposing plan | Ensures each market gets the right format and angle at the right time. |
| Metrics & targets | Baseline vs. target conversions; Search KPIs by locale; AI citations observed by engine/query; Share of voice in AI answers; Notes | Because official AI-citation reporting is limited, you’ll rely on proxies and manual logs. See Measurement and dashboards below. |
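To make the schema concrete, here is a minimal Python sketch of one calendar row covering a subset of the fields above, with a check for the 100–200-word answer block and the status pipeline. The class name `CalendarRow`, the chosen field subset, and the whitespace-based word count are hypothetical illustrations, not a prescribed data model.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# Status pipeline taken from the "Logistics & governance" row of the schema table.
STATUSES = ("idea", "briefed", "drafting", "editing",
            "localized", "legal", "scheduled", "published")


@dataclass
class CalendarRow:
    """Hypothetical subset of the calendar schema; add the remaining fields as needed."""
    title: str
    owner: str
    locale: str                      # BCP 47 tag, e.g. "en-US"
    primary_question: str
    answer_block: str
    status: str = "idea"
    planned_schema_types: list[str] = field(default_factory=list)
    last_refreshed: Optional[date] = None
    next_refresh_due: Optional[date] = None

    def answer_block_word_count(self) -> int:
        # Naive whitespace split; languages written without spaces need another counter.
        return len(self.answer_block.split())

    def problems(self) -> list[str]:
        """Return human-readable issues; an empty list means the row passes these checks."""
        issues = []
        if self.status not in STATUSES:
            issues.append(f"unknown status: {self.status!r}")
        words = self.answer_block_word_count()
        if not 100 <= words <= 200:
            issues.append(f"answer block is {words} words; target is 100-200")
        if not self.primary_question.strip():
            issues.append("missing primary question")
        return issues


row = CalendarRow(title="What is GEO?", owner="A. Editor", locale="en-US",
                  primary_question="What is GEO?", answer_block="word " * 120)
print(row.problems())  # → []
```

Keeping the checks on the row itself means any tool that loads the calendar (a script, a sync job, a form backend) applies the same rules.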

Step-by-step workflow (with approval gates)

Here’s a lean, repeatable path from idea to refresh. Who owns what? Assign it in a RACI matrix (Responsible, Accountable, Consulted, Informed) spanning SEO, content, localization, design, compliance, and analytics.

- Ideation and market validation
  - Source questions from SERPs/AI answers, on-site search, support tickets, and sales. Log them by locale.
  - Check which competitors are cited in AI results; note gaps you can fill.

- Brief and entity review
  - Draft the primary question, answer block, entities/definitions, and evidence plan.
  - Gate: SME/editor sign-off for accuracy and scope.

- Drafting and design
  - Write with extractable statements and inline citations. Keep one concise, quotable answer block per key question.
  - Prepare required assets (diagrams, charts) and alt text.

- Expert, legal, and localization review
  - For YMYL (Your Money or Your Life) topics, route drafts to SMEs and legal.
  - Localize by market (translation vs. transcreation). Add local examples, units, and holidays.

- Pre-publication GEO QA
  - Validate the planned structured data types against Google’s rich results documentation and the Rich Results Test.
  - Technical checks: indexability, canonicals, hreflang pairings, and page speed.

- Publish and distribute
  - Schedule per local time zone; adapt snippets for social/email per market.

- Measure and learn
  - Monitor Search Console and Bing Webmaster Tools data by locale, and keep a manual “AI citations observed” log. Search Engine Land covers Microsoft’s evolving Copilot support for site owners.

- Refresh and iterate
  - Tier your content (e.g., hubs quarterly; supporting pages semiannually). Update the calendar’s last/next refresh fields.
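If the calendar lives in a tool that supports automation, the approval gates can be enforced as status transitions. The sketch below assumes statuses advance one step at a time (moving back for rework is always allowed) and that certain transitions require a recorded sign-off. Only the SME/editor sign-off is named as a gate in the workflow; the `GATES` mapping, the legal-approval gate, and the one-step rule are hypothetical choices for illustration.

```python
STATUSES = ["idea", "briefed", "drafting", "editing",
            "localized", "legal", "scheduled", "published"]

# Hypothetical gate mapping: (from, to) -> sign-off that must be recorded first.
GATES = {
    ("briefed", "drafting"): "sme_editor_signoff",
    ("legal", "scheduled"): "legal_approval",
}


def can_transition(current: str, target: str, signoffs: set[str]) -> tuple[bool, str]:
    """Allow a one-step advance (if its gate is satisfied) or any move back for rework."""
    if current not in STATUSES or target not in STATUSES:
        return False, "unknown status"
    i, j = STATUSES.index(current), STATUSES.index(target)
    if j < i:
        return True, "moved back for rework"
    if j != i + 1:
        return False, "statuses advance one step at a time"
    required = GATES.get((current, target))
    if required and required not in signoffs:
        return False, f"missing sign-off: {required}"
    return True, "ok"


print(can_transition("briefed", "drafting", set()))
print(can_transition("briefed", "drafting", {"sme_editor_signoff"}))
```

The first call is blocked until the SME/editor sign-off is recorded; the second succeeds. Storing sign-offs as named strings keeps the audit trail readable in the calendar itself.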

GEO-specific optimization inside the calendar

- Answer blocks and FAQs: Treat them as products inside your article. Short, accurate, cited, and distinct per locale.

- Evidence discipline: Log your sources with author and year; prefer primary research and SME input. Clear, well-sourced claims are easier for engines to extract and attribute.

- Entity control: Define the terms you want to be known for. Use consistent names and synonyms; avoid ambiguity.

- Structured data planning: Note intended types early (Article, Product, Organization, QAPage) and validate pre-pub. Google’s AI features don’t require special markup, but structured data still helps machines interpret your page.
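Planned structured data is easiest to keep accurate when it is generated from the calendar row rather than hand-written. A minimal sketch, assuming an Article page and a row held as a plain dict with hypothetical keys (`title`, `author`, `locale`, `published`, `last_refreshed`); the output uses standard schema.org Article properties and should still be checked with Google’s Rich Results Test before publishing.

```python
import json
from datetime import date


def article_jsonld(row: dict) -> str:
    """Build Article JSON-LD from a calendar row (the row keys are hypothetical)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": row["title"],
        "inLanguage": row["locale"],                      # BCP 47, e.g. "fr-CA"
        "author": {"@type": "Person", "name": row["author"]},
        "datePublished": row["published"].isoformat(),
        # Mirror the calendar's "Last Refreshed" field so freshness is machine-readable.
        "dateModified": row["last_refreshed"].isoformat(),
    }
    return json.dumps(data, ensure_ascii=False, indent=2)


# Hypothetical example row.
row = {
    "title": "Qu’est-ce que le GEO ?",
    "author": "Jane Doe",
    "locale": "fr-CA",
    "published": date(2024, 5, 2),
    "last_refreshed": date(2024, 8, 1),
}
print(f'<script type="application/ld+json">\n{article_jsonld(row)}\n</script>')
```

`ensure_ascii=False` keeps localized headlines readable in the page source instead of escaping accented characters.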

International planning (the planning-level essentials)

- Locale codes and markets: Track BCP 47 codes (en-US, pt-BR) and target countries. Keep a locale matrix.

- Holidays and hooks: Add region-specific events to your calendar to time launches and examples.

- Hreflang and canonicals: Plan reciprocal hreflang pairs with self-referencing canonicals. Don’t canonicalize one locale’s page to another while also listing both as hreflang alternates; the signals conflict. Use Google’s guidance on consolidating duplicate URLs when deciding canonical targets.

- Distribution by region: Stagger posts by local work hours; adapt creative; adjust CTAs to currency/units.
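Reciprocal hreflang pairs are easy to break when locales launch on different dates. A small sketch, assuming the calendar’s “Intended URL” and “Hreflang Pair(s)” columns have been collected into a dict that maps each URL to its declared alternates (locale → URL). It flags missing self-references and missing return links, both of which Google’s hreflang guidance expects. The URLs are placeholders.

```python
def hreflang_problems(pages: dict[str, dict[str, str]]) -> list[str]:
    """pages maps a URL to its declared alternates {locale: url}, including itself."""
    problems = []
    for url, alternates in pages.items():
        if url not in alternates.values():
            problems.append(f"{url}: missing self-referencing hreflang")
        for locale, alt_url in alternates.items():
            if alt_url == url:
                continue
            back_links = pages.get(alt_url)
            if back_links is None:
                problems.append(f"{url}: alternate {alt_url} ({locale}) is not in the plan")
            elif url not in back_links.values():
                problems.append(f"{url}: {alt_url} ({locale}) does not link back")
    return problems


# Placeholder plan: the fr-CA page forgets to point back to en-US.
plan = {
    "https://example.com/en-us/geo/": {
        "en-US": "https://example.com/en-us/geo/",
        "fr-CA": "https://example.com/fr-ca/geo/",
    },
    "https://example.com/fr-ca/geo/": {
        "fr-CA": "https://example.com/fr-ca/geo/",
    },
}
for issue in hreflang_problems(plan):
    print(issue)
```

Running the check at the pre-publication QA step catches one-sided pairs before they ship.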

Measurement and dashboards

Here’s the deal: there’s no official, granular reporting for AI Overview citations in Search Console today. Work with proxies and logs.

- Baselines: Use Google Search Console and Bing Webmaster Tools data by query, page, and market to establish trend lines for each locale.

- AI citations log: Add fields for “engine,” “query,” “snippet text,” and “cited URL” with the date observed. Review monthly.

- Third-party monitors: Treat them as directional, not canonical. Cross-check with your own tests.

- Outcomes: Tie AI visibility to engagement and conversions. Compare markets and content tiers.
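The manual AI citations log can be summarized in a few lines for the monthly review. A sketch, assuming each entry records the engine, query, date observed, snippet text, and a hypothetical `cited_urls` list of every URL the answer cited (yours or not). The “citation rate” computed here (share of observed answers that cite your domain) is a proxy of our own choosing, not a standard metric.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from urllib.parse import urlparse


@dataclass
class Observation:
    engine: str            # e.g. "Google AI Overviews", "Copilot"
    query: str
    observed: date
    cited_urls: list[str]  # every URL the answer cited, ours or not
    snippet_text: str = ""


def monthly_citation_rate(log: list[Observation], our_domain: str,
                          year: int, month: int) -> dict[str, float]:
    """Per engine: share of answers observed in the month that cite our_domain."""
    seen = defaultdict(int)
    cited = defaultdict(int)
    for obs in log:
        if (obs.observed.year, obs.observed.month) != (year, month):
            continue
        seen[obs.engine] += 1
        hosts = {urlparse(u).hostname or "" for u in obs.cited_urls}
        # Count subdomains (www.example.com) as our domain too.
        if any(h == our_domain or h.endswith("." + our_domain) for h in hosts):
            cited[obs.engine] += 1
    return {engine: cited[engine] / seen[engine] for engine in seen}


# Hypothetical log entries.
log = [
    Observation("Copilot", "what is geo", date(2024, 6, 3), ["https://www.example.com/geo/"]),
    Observation("Copilot", "geo vs seo", date(2024, 6, 9), ["https://other.org/a"]),
    Observation("Google AI Overviews", "what is geo", date(2024, 6, 4),
                ["https://example.com/geo/", "https://other.org/b"]),
]
print(monthly_citation_rate(log, "example.com", 2024, 6))
# → {'Copilot': 0.5, 'Google AI Overviews': 1.0}
```

Because the log is sampled by hand, treat month-to-month changes as directional, in the same way as third-party monitors.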

For grounding on what Google discloses about AI features, refer to the Search Central page on AI features and your site.

Refresh cadence and governance

Don’t leave freshness to memory. Bake it into the calendar.

- Tier 1 (hubs, definitional pages): refresh quarterly or when data changes.

- Tier 2 (supporting guides): refresh semiannually.

- News/volatile topics: ad-hoc updates with reviewer notes.

- Governance: Owners must update “last refreshed/next due” fields and close the loop with analytics in the monthly review.
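The tiered cadence maps directly onto the “Next Refresh Due” field. A minimal sketch, assuming Tier 1 means every 3 months, Tier 2 every 6 months, and that news/volatile items get no automatic date because they are updated ad hoc. The tier keys and row dict keys are hypothetical; month arithmetic clamps to the last day of shorter months.

```python
import calendar
from datetime import date
from typing import Optional

# Months between refreshes per tier (hypothetical keys); None = ad hoc.
CADENCE_MONTHS = {"tier1": 3, "tier2": 6, "news": None}


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day (e.g. Nov 30 + 3 months -> end of February)."""
    total = d.month - 1 + months
    year, month = d.year + total // 12, total % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def next_refresh_due(tier: str, last_refreshed: date) -> Optional[date]:
    months = CADENCE_MONTHS[tier]
    return None if months is None else add_months(last_refreshed, months)


def overdue(rows: list[dict], today: date) -> list[str]:
    """Titles whose next refresh date has already passed."""
    late = []
    for row in rows:
        due = next_refresh_due(row["tier"], row["last_refreshed"])
        if due is not None and due < today:
            late.append(row["title"])
    return late


rows = [
    {"title": "GEO hub", "tier": "tier1", "last_refreshed": date(2024, 1, 1)},
    {"title": "Hreflang guide", "tier": "tier2", "last_refreshed": date(2024, 1, 1)},
]
print(overdue(rows, date(2024, 5, 1)))  # → ['GEO hub']
```

Running `overdue` before each monthly review gives owners a concrete list instead of relying on memory.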

Troubleshooting playbook

If your pages aren’t cited in AI answers, ask:

- Is the core question explicit, and is there a crisp answer block with citations?

- Are you indexable, fast, and correctly canonicalized, with clean hreflang for alternates?

- Do you demonstrate E‑E‑A‑T (clear authorship, expert review where needed, date transparency)?

- Are competitors providing clearer statements, unique data, or better evidence?

- Is the content localized beyond translation (examples, units, cultural relevance)?

Google’s documentation reiterates that success in AI features starts with quality content and technical hygiene; see the Search Central AI features overview for scope and limits.

Starter template: copy/paste headers

Use this CSV header set to spin up your first calendar (Sheets/Notion). Add validation on locale codes and statuses.

```csv
Title,Content Type,Owner,Contributors,Approvers,Status,Publish Date,Publish Time Zone,Market,Language-Locale,Primary Question (Locale),Answer Block (100-200w),FAQ Set (Locale),Target Entities,Planned Schema Types,Evidence/Citations,Author E-E-A-T Notes,Reviewer,E-E-A-T Review Date,Intended URL,Canonical Target,Hreflang Pair(s),Asset Links,Dependencies,Distribution (by Region),Local Holidays/Notes,Legal/Compliance Notes,Baseline KPI,Target KPI,AI Citations Observed (Engine/Query/Date),Last Refreshed,Next Refresh Due,Version,Notes
```
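If the calendar is exported from Sheets or Notion as CSV, the locale and status validation can run as a script. A sketch, assuming the column names `Status` and `Language-Locale` from the header above and the status pipeline from the schema table. The locale pattern is a deliberate simplification: it accepts language-REGION tags like en-US or pt-BR, not the full BCP 47 grammar (scripts, variants, or numeric regions such as es-419). The sample is trimmed to three columns for brevity.

```python
import csv
import io
import re

STATUSES = {"idea", "briefed", "drafting", "editing",
            "localized", "legal", "scheduled", "published"}
# Simplified BCP 47 check: 2-3 letter language, 2-letter region (e.g. en-US, pt-BR).
LOCALE_RE = re.compile(r"^[a-z]{2,3}-[A-Z]{2}$")


def validate_calendar(csv_text: str) -> list[str]:
    """Return one message per invalid cell; the header counts as row 1."""
    errors = []
    reader = csv.DictReader(io.StringIO(csv_text))
    for row_num, row in enumerate(reader, start=2):
        status = (row.get("Status") or "").strip().lower()
        if status not in STATUSES:
            errors.append(f"row {row_num}: invalid Status {row.get('Status')!r}")
        locale = (row.get("Language-Locale") or "").strip()
        if not LOCALE_RE.match(locale):
            errors.append(f"row {row_num}: invalid Language-Locale {locale!r}")
    return errors


sample = """Title,Status,Language-Locale
What is GEO?,drafting,en-US
Qu'est-ce que le GEO ?,translated,fr_CA
"""
for message in validate_calendar(sample):
    print(message)
```

For a real export, read the file with `open(path, newline="", encoding="utf-8")` and pass its contents to `validate_calendar`.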

What to do next

- Create your calendar with the schema above

- Pilot with one market and one Tier‑1 topic cluster; document outcomes

- Expand to additional locales; tighten governance and refresh cadences

- Build a simple monthly “AI citations” review and share highlights with stakeholders

Want a sanity check on your first draft? Share your field list and a sample row, and I’ll suggest improvements within 24 hours.