How E-E-A-T Impacts AI Search (GEO): What Actually Matters

If you’ve been testing Google’s AI Overviews, Bing Copilot, or Perplexity, you’ve likely asked: why do certain sites keep getting cited while others—often with solid SEO—don’t? The short answer: signals aligned with E-E-A-T help engines trust and select sources, even though E-E-A-T itself isn’t a ranking factor. In the GEO (Generative Engine Optimization) era, those signals shape whether your content is retrieved, summarized, and credited.

This article clarifies what E-E-A-T is (and isn’t), defines GEO, and shows how to build entity- and content-level trust with technical enablers and measurement. You’ll leave with a pragmatic action plan you can run weekly.

Definitions, without the confusion

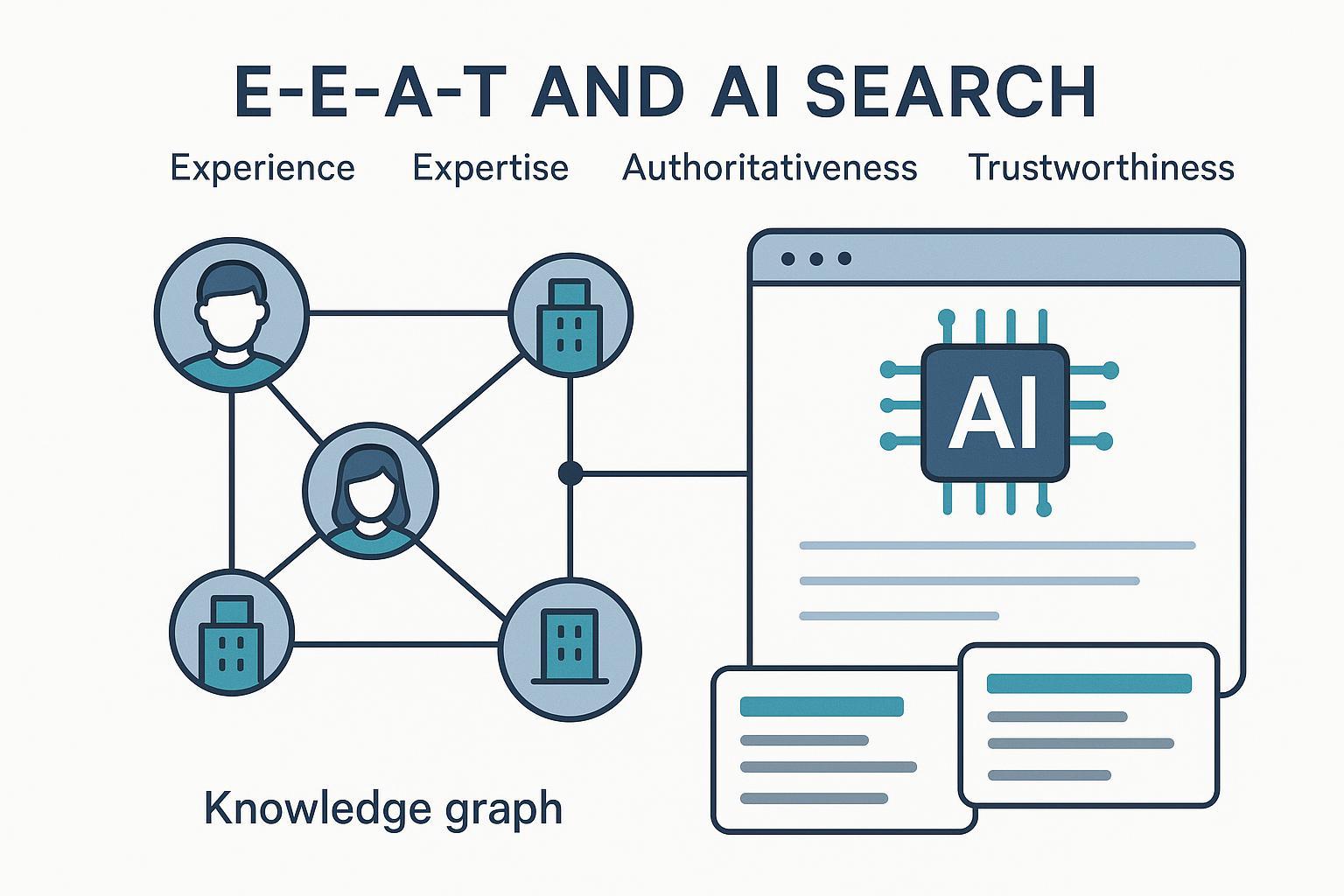

- E-E-A-T: Experience, Expertise, Authoritativeness, Trustworthiness. It’s a quality framework used by Google’s human Search Quality Raters to evaluate the helpfulness and reliability of pages; it’s not a direct ranking signal. Google explains the role of E-E-A-T in the publicly available Search Quality Rater Guidelines (latest public version) and reiterates people-first quality principles in the Search Central SEO Starter Guide.

- GEO (Generative Engine Optimization): The practice of optimizing content, entities, and web signals so LLM-driven engines (e.g., AI Overviews, Copilot, Perplexity) retrieve, cite, and summarize your work. For a compact industry overview, see Search Engine Land’s explainer on GEO (2024) and Ahrefs’ GEO guide (2025).

Relationship: E-E-A-T informs what “high-quality, trustworthy” looks like. GEO applies that to how AI systems choose sources—via entity recognition, evidence, and technical clarity.

How AI engines pick sources (and where E-E-A-T shows up)

Most generative search experiences follow a version of retrieval-augmented generation (RAG): a query triggers retrieval over indexed content or web results, relevant passages are fed to a model, and an answer is generated with citations. Selection biases often favor:

- Recognizable entities (authors/organizations) with consistent identity and third‑party signals

- Pages that demonstrate first-hand experience and cite primary sources

- Clean technical markup that makes passages easy to extract and attribute
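To make the retrieve-then-generate flow concrete, here is a toy sketch in Python. It is not how any particular engine works: the `Passage` type, the word-overlap scoring, and the prompt wording are all assumptions chosen for illustration, and real systems use far richer retrieval and ranking signals.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Passage:
    url: str   # where the passage came from (used for the citation)
    text: str  # the extractable passage itself


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: list[Passage], k: int = 2) -> list[Passage]:
    """Return up to k passages ranked by naive word overlap with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(p.text)), p) for p in passages]
    scored = [(score, p) for score, p in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def build_prompt(query: str, retrieved: list[Passage]) -> str:
    """Assemble a prompt that asks a model to answer with numbered citations."""
    sources = "\n".join(
        f"[{i}] {p.url}: {p.text}" for i, p in enumerate(retrieved, start=1)
    )
    return (
        "Answer using only these sources and cite them by number.\n"
        f"{sources}\n\nQuestion: {query}"
    )


# Hypothetical passages on placeholder URLs.
passages = [
    Passage("https://example.com/schema-guide",
            "Schema markup helps engines attribute passages to authors."),
    Passage("https://example.com/pricing",
            "Our pricing is listed on this page."),
]
question = "How does schema markup help?"
print(build_prompt(question, retrieve(question, passages)))
```

The point of the sketch is the shape of the pipeline: only passages that are retrieved can be cited, which is why extractable, clearly attributed text matters.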

Industry tests have observed that Google AI Overviews frequently reference authoritative domains and pages with strong entity clarity and evidence. For an accessible summary of observed patterns, see WhitePeak’s analysis of AI Overviews source selection (2025). For broader optimization ideas across engines, Backlinko’s AI optimization overview (2025) covers Perplexity’s citation transparency and practical page setups.

Think of your entity identity—author and organization—as a “reputation passport” that lets AI systems quickly verify who you are and what you reliably cover.

E-E-A-T → GEO: a practical mapping

Below is a compact mapping from the four E-E-A-T components to GEO-ready actions that raise your odds of being retrieved and cited.

| E-E-A-T component | GEO-ready actions and examples |
|---|---|
| Experience | Publish first-hand walkthroughs, original screenshots, and replicable methods; include notes on what you tested and why; document constraints and outcomes. |
| Expertise | Add clear bylines; comprehensive author bios with credentials and topic specialization; link to external profiles (e.g., ORCID, LinkedIn, conference talks); use reviewer markup for expert-verified content. |
| Authoritativeness | Strengthen organization identity with Organization/WebSite schema and sameAs links to authoritative profiles; maintain consistent naming across the web; earn third-party mentions and references. |
| Trustworthiness | Cite primary sources with descriptive anchors; display editorial standards, corrections policy, and contact details; use HTTPS; show publish/update dates and transparent authorship. |

Build entity-level trust (authors and organizations)

Start with clarity:

- Author identity: Use explicit bylines, Person schema, and detailed bios that show credentials, first-hand experience, and topical focus. Link to external authoritative profiles and publications.

- Reviewer workflows: For sensitive topics (akin to YMYL), add expert reviewers and mark them up, for example with the schema.org reviewedBy and lastReviewed properties on the page. Multi-party review strengthens credibility and helps machines connect your content to recognized experts.

- Organization identity: Implement Organization and WebSite schema; add sameAs links to official profiles (industry directories, professional associations). Keep naming consistent everywhere, because ambiguity dilutes authority.

Entity clarity helps engines disambiguate who said what, across which domain, and why it should be trusted.
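The author and organization markup described above can be sketched as a small Python helper that emits JSON-LD. The property names (`name`, `jobTitle`, `sameAs`, `worksFor`, `url`) are schema.org vocabulary; the helper functions, the person, the company, and every URL are placeholders invented for this example.

```python
import json


def organization_jsonld(org_id: str, name: str, url: str, profiles: list[str]) -> dict:
    """Build an Organization node; org_id lets other nodes point back to it."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "@id": org_id,
        "name": name,
        "url": url,
        "sameAs": profiles,  # official profiles on other sites
    }


def person_jsonld(name: str, job_title: str, profiles: list[str], org_id: str) -> dict:
    """Build a Person node linked to the organization by its @id."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        "sameAs": profiles,
        "worksFor": {"@id": org_id},
    }


def to_script_tag(data: dict) -> str:
    """Serialize a node into a JSON-LD script tag for the page head."""
    # Escape "</" so a stray "</script>" inside a string cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


# Placeholder entity data, not a real author or company.
org = organization_jsonld(
    "https://example.com/#org", "Example Co", "https://example.com/",
    ["https://www.linkedin.com/company/example"],
)
author = person_jsonld(
    "Jane Doe", "Senior Editor",
    ["https://orcid.org/0000-0000-0000-0000"], org["@id"],
)
print(to_script_tag(org))
print(to_script_tag(author))
```

Reusing one `@id` for the organization is a design choice that keeps the author and publisher tied to the same entity wherever the markup appears. Validate the output with a structured data testing tool before shipping it.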

Build content-level trust (evidence and transparency)

Content selection tends to privilege pieces that show their work:

- First-hand evidence: Screenshots, data tables, and step-by-step methods that another practitioner can reproduce.

- Source hygiene: Link to primary standards, official docs, datasets, and precise definitions using descriptive anchor text. Include publish and update dates.

- Editorial standards: Display a corrections policy and fact-checking notes. Make ownership and contact info easy to find.

Pages that read like a field report—clear, accountable, and replicable—are easier for LLMs to quote.
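One piece of source hygiene that is easy to automate is spotting vague anchor text. The sketch below uses Python's standard `html.parser`; the `VAGUE_ANCHORS` list is an assumption you would tune to your own editorial style, and the sample HTML is made up.

```python
from html.parser import HTMLParser

# Assumed list of anchor texts that say nothing about the target; adjust to taste.
VAGUE_ANCHORS = {"here", "click here", "this", "link", "read more", "source"}


class AnchorAudit(HTMLParser):
    """Collect (href, text) pairs for links whose anchor text is vague."""

    def __init__(self) -> None:
        super().__init__()
        self._href = None   # href of the <a> currently open, if any
        self._text = []     # text fragments seen inside that <a>
        self.vague = []     # results: list of (href, anchor text)

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace before comparing against the vague list.
            text = " ".join("".join(self._text).split())
            if text.lower() in VAGUE_ANCHORS:
                self.vague.append((self._href, text))
            self._href = None


def find_vague_anchors(html: str) -> list:
    """Return links in the HTML whose anchor text is on the vague list."""
    audit = AnchorAudit()
    audit.feed(html)
    audit.close()
    return audit.vague


sample = (
    '<p>See <a href="https://example.com/spec">the Person type reference</a> '
    'or <a href="https://example.com/x">click here</a>.</p>'
)
print(find_vague_anchors(sample))
```

Anything the audit flags is a candidate for rewriting into an anchor that names the source and what it supports.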

Technical enablers for GEO

Technical clarity accelerates retrieval and clean citation:

- Schema: Use Article/NewsArticle, Person, Organization, Review, HowTo, and FAQPage where appropriate. Validate markup and keep it crawlable.

- Structured summaries: Clear headings, concise definitions, and scannable sections help engines extract key passages and attribute them.

- Canonicals and crawlability: Resolve duplicates, ensure the canonical carries your strongest evidence, and keep robots directives consistent.

- Performance and UX: Core Web Vitals feed into Google’s page experience signals and shape how users perceive a site; fast, readable pages also tend to yield cleaner extracted passages.

- Site architecture: Stable URLs, logical taxonomy, and sitemaps help engines find and contextualize entities and content.

For baseline quality expectations, Google’s SEO Starter Guide is still the best official primer.
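A quick way to spot-check the canonical and schema items above is to parse a page's HTML and list what is actually there. This is a minimal sketch built on Python's standard library; the `PageSignals` class, the `audit` function, and the issue labels are assumptions for illustration, and the check does not replace a full structured data validator.

```python
import json
from html.parser import HTMLParser


class PageSignals(HTMLParser):
    """Collect canonical URLs and JSON-LD @type values from one HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals = []
        self.schema_types = []
        self.invalid_blocks = 0
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "link" and (a.get("rel") or "").lower() == "canonical":
            self.canonicals.append(a.get("href"))
        elif tag == "script" and a.get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != "script" or not self._in_jsonld:
            return
        self._in_jsonld = False
        try:
            doc = json.loads("".join(self._buffer))
        except json.JSONDecodeError:
            self.invalid_blocks += 1
            return
        for item in doc if isinstance(doc, list) else [doc]:
            if isinstance(item, dict) and "@type" in item:
                types = item["@type"]
                # @type may be a single string or a list of strings.
                self.schema_types.extend(types if isinstance(types, list) else [types])


def audit(html: str) -> dict:
    """Summarize canonical and JSON-LD findings, plus a list of simple issues."""
    parser = PageSignals()
    parser.feed(html)
    parser.close()
    issues = []
    if not parser.canonicals:
        issues.append("missing canonical")
    elif len(set(parser.canonicals)) > 1:
        issues.append("conflicting canonicals")
    if parser.invalid_blocks:
        issues.append("invalid JSON-LD")
    if not parser.schema_types:
        issues.append("no JSON-LD found")
    return {
        "canonicals": parser.canonicals,
        "schema_types": parser.schema_types,
        "issues": issues,
    }
```

Run `audit()` over the HTML of your key pages (fetched however you prefer) and fix anything that lands in `issues` first.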

Measurement: know if your GEO signals work

You can’t improve what you don’t measure. Build a simple, repeatable framework:

- Assemble a query panel: 25–50 representative queries per topic cluster. Test weekly across Google AI Overviews, Bing Copilot, and Perplexity.

- Log inclusion and citations: Track whether your pages or brand are cited, how often, and which passages are pulled. Note competing sources.

- Attribute traffic: Tag links where possible and review referral logs for AI engines. Segment by topic and page type.

- Record changes: Maintain a changelog of E-E-A-T improvements (e.g., new bios, added reviewer markup, structured evidence) and correlate with inclusion shifts over time.

Over a quarter, patterns emerge: clusters with stronger entity clarity and first-hand evidence tend to see more stable citations.
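The weekly log and changelog comparison can be as simple as the sketch below. The `Observation` fields, the ISO-week labels, and the sample rows are assumptions for illustration; the numbers are invented, and a shift in citation rate after a change is a correlation to investigate, not proof of cause.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    week: str      # zero-padded ISO week label, e.g. "2025-W09", so strings sort by time
    engine: str    # e.g. "perplexity"
    cluster: str   # topic cluster the query belongs to
    query: str
    cited: bool    # was one of your pages cited in the answer?


def citation_rate(observations) -> dict:
    """Share of checked queries that cited you, keyed by (week, cluster)."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for o in observations:
        key = (o.week, o.cluster)
        totals[key] += 1
        hits[key] += int(o.cited)
    return {key: hits[key] / totals[key] for key in totals}


def rate_shift(rates: dict, cluster: str, change_week: str):
    """Mean rate from change_week onward minus the mean rate before it.

    Returns None when either side has no data.
    """
    before = [r for (w, c), r in rates.items() if c == cluster and w < change_week]
    after = [r for (w, c), r in rates.items() if c == cluster and w >= change_week]
    if not before or not after:
        return None
    return sum(after) / len(after) - sum(before) / len(before)


# Invented sample rows: reviewer markup hypothetically shipped in 2025-W10.
log = [
    Observation("2025-W09", "perplexity", "schema", "what is sameAs", False),
    Observation("2025-W09", "copilot", "schema", "person schema example", False),
    Observation("2025-W10", "perplexity", "schema", "what is sameAs", True),
    Observation("2025-W10", "copilot", "schema", "person schema example", False),
]
rates = citation_rate(log)
print(rates)
print(rate_shift(rates, "schema", "2025-W10"))
```

Keeping the log as flat rows makes it easy to export from a spreadsheet and to re-slice by engine or page type later.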

YMYL and risk management

If your niche touches health, finance, legal, or high-stakes advice, tighten review and disclosures. Use expert reviewers, explicit disclaimers, and conservative language. When you see hallucinations or misattributions in AI answers, publish clarifications and reach out to platforms with precise evidence. The goal isn’t to “win” every answer; it’s to be consistently credible when an assistant needs a trustworthy source.

Competitive benchmarking and freshness signaling

- Benchmark sources that appear in AI answers for your queries. What do they do differently—entity clarity, evidence density, or schema depth?

- Highlight recency: Add clear update stamps, changelogs, and periodic refreshes to the pages that already surface in AI answers. Keep updates regular and easy to crawl (for example, with accurate lastmod values in your sitemap) so engines re-evaluate passages.

- Track topic clusters: Identify where rivals dominate citations and prioritize strengthening those clusters with first-hand work and expert review.

Freshness isn’t just a date; it’s visible progress backed by new data, examples, or policies.
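For the benchmarking step, a small tally of which domains are cited for your query panel shows where rivals dominate. This sketch assumes you have already collected the citation URLs by hand or from your log; the `cited_domains` function and the sample URLs are illustrative placeholders.

```python
from collections import Counter
from urllib.parse import urlsplit


def cited_domains(citation_urls, own_domain: str) -> list:
    """Count cited hostnames, skipping your own domain, most frequent first."""
    counts = Counter()
    for url in citation_urls:
        host = (urlsplit(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]  # treat www and bare hostnames as one source
        if host and host != own_domain:
            counts[host] += 1
    return counts.most_common()


# Placeholder citation URLs gathered for one topic cluster.
urls = [
    "https://www.example.org/guide",
    "https://example.org/faq",
    "https://example.net/report",
    "https://mysite.example/our-page",
]
print(cited_domains(urls, "mysite.example"))
```

Review the top few domains per cluster by hand: the count tells you who is winning, not why.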

Pitfalls and myths to avoid

- “E-E-A-T is a ranking factor.” It’s not; it’s a rater framework. Algorithms approximate similar signals.

- Vanity bios: Credentials without first-hand evidence won’t carry you in GEO contexts.

- Schema without substance: Markup helps machines, but you still need replicable methods, primary sources, and transparent policies.

- Over-optimization: Don’t chase every micro-signal. Focus on clear identity, evidence, and technical hygiene.

Your 10-step action plan

- Add or update author bios with credentials, first-hand experience, and links to authoritative external profiles; implement Person schema.

- Implement Organization/WebSite schema with sameAs links; standardize naming across profiles and directories.

- Create reviewer workflows for sensitive topics; add reviewer markup where relevant.

- Rework key pages to show first-hand evidence: screenshots, data tables, and step-by-step methods.

- Audit sourcing: replace vague references with descriptive anchors to primary docs and datasets; add publish/update dates.

- Validate schema and keep it crawlable (Article, HowTo, FAQPage, Review as appropriate).

- Clean up canonicals, robots directives, and site architecture; prioritize the canonical page with the strongest evidence.

- Improve performance and readability; make summaries scannable so engines can cleanly extract passages.

- Build a query panel and weekly inclusion log for AI Overviews, Copilot, and Perplexity; track citations by cluster.

- Maintain a changelog of E-E-A-T improvements and correlate with citation movement; iterate quarterly.

Further reading and touchpoints

- E-E-A-T framing: Google’s Search Quality Rater Guidelines (current public release) and the Search Central SEO Starter Guide for people-first quality.

- GEO and AI visibility: Search Engine Land’s GEO explainer, Ahrefs’ GEO guide, and Backlinko’s AI optimization overview for practical experiments and cross-engine patterns.

Ready to see where you stand? Run the query panel this week, then pick two clusters to upgrade with entity clarity and first-hand evidence. Why not start today?