The E‑E‑A‑T Framework for AI Content Writers: A 2025 Best‑Practice Playbook

AI can speed up production, but quality still wins. Google evaluates helpfulness and trust signals regardless of how a page is created. Treat E‑E‑A‑T as your operational quality model, not a single “ranking factor.” Google’s own guidance makes this clear: its generative AI content guidance accepts AI assistance when the result is helpful, original, and transparent, while the March 2024 core update and spam policies target scaled, low‑value output through the new scaled content abuse and site reputation abuse policies.

The Human‑in‑the‑Loop Editorial Workflow

A simple, role‑based flow keeps AI drafts anchored in experience and accuracy:

- Prompter: crafts prompts aligned to the brief and E‑E‑A‑T criteria; documents target sources and constraints.

- SME: injects firsthand experience, verifies topical nuance, and adds unique commentary or examples.

- Editor: enforces style, structure, transparency, and completeness; confirms trust signals are present and structured data is valid.

- Fact‑checker: validates claims against primary sources; records citations; flags unresolved issues.

Think of this as your content “quality circuit.” Each role closes an integrity gap AI alone can’t see.
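One way to make the quality circuit enforceable is to record a sign‑off per role and hold publication while any gap remains. The sketch below assumes a simple in‑house record; the `Draft` class, the role keys, and the `missing_steps` helper are hypothetical names, not part of any CMS.

```python
from dataclasses import dataclass, field

# Roles from the workflow above, in the order a draft passes through them.
ROLES = ("prompter", "sme", "editor", "fact_checker")


@dataclass
class Draft:
    """A hypothetical draft record; field names are illustrative only."""
    title: str
    signoffs: dict[str, str] = field(default_factory=dict)  # role -> reviewer name
    open_issues: list[str] = field(default_factory=list)    # unresolved fact-check flags

    def sign_off(self, role: str, reviewer: str) -> None:
        if role not in ROLES:
            raise ValueError(f"unknown role: {role}")
        self.signoffs[role] = reviewer


def missing_steps(draft: Draft) -> list[str]:
    """Return every role that has not signed off, plus any unresolved issues."""
    gaps = [f"no sign-off from {role}" for role in ROLES if role not in draft.signoffs]
    gaps += [f"open issue: {issue}" for issue in draft.open_issues]
    return gaps


draft = Draft("E-E-A-T playbook")
for role, name in [("prompter", "Ana"), ("sme", "Raj"), ("editor", "Lee")]:
    draft.sign_off(role, name)
draft.open_issues.append("pricing claim lacks a primary source")

print(missing_steps(draft))
```

An empty list means every role has signed off and the fact‑checker has cleared its flags; anything else blocks publishing.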

Build On‑Page Trust Architecture

Trust is partly how your page “feels” to a reader—and to machines. Make credibility visible and machine‑readable.

- Author bylines and expert bios: link authors, add credentials, affiliations, publications, and authoritative profiles. Mark up with Person schema.

- Publisher credibility: clear About page, editorial standards, corrections policy, and contact details; Organization and WebSite schema with publishingPrinciples.

- Transparent sourcing: inline citations to primary sources; visible last updated dates; clear corrections/errata.

- Technical trust: HTTPS, strong Core Web Vitals, and structured data that mirrors visible content.

For structured data, follow Google’s structured data policies and its Organization structured data documentation. Implement Article (or BlogPosting), Person, Organization, and WebSite, with publishingPrinciples on Organization and WebSite. Ensure that what you mark up matches what users can see on the page.
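Here is a minimal sketch that generates JSON‑LD for these four types, assuming a single author and a single publisher. Every name and example.com URL is a placeholder, and `build_jsonld` is a hypothetical helper; validate the output in Google’s Rich Results Test before relying on it.

```python
import json

# All names and example.com URLs are placeholders; swap in your real values.
SITE = "https://www.example.com"
PRINCIPLES = f"{SITE}/editorial-standards"  # hypothetical editorial-standards page


def build_jsonld(headline: str, author: str, author_url: str, same_as: list[str],
                 published: str, modified: str) -> dict:
    """Build an Article (with nested Person and Organization) plus a WebSite node."""
    publisher = {
        "@type": "Organization",
        "name": "Example Media",
        "url": SITE,
        "publishingPrinciples": PRINCIPLES,
    }
    article = {
        "@type": "Article",
        "headline": headline,
        "datePublished": published,   # ISO 8601 date
        "dateModified": modified,     # should match the visible "last updated" date
        "author": {
            "@type": "Person",
            "name": author,
            "url": author_url,        # the author's bio page on your site
            "sameAs": same_as,        # authoritative external profiles
        },
        "publisher": publisher,
    }
    website = {
        "@type": "WebSite",
        "name": "Example Media",
        "url": SITE,
        "publishingPrinciples": PRINCIPLES,
    }
    return {"@context": "https://schema.org", "@graph": [article, website]}


data = build_jsonld(
    headline="The E-E-A-T Framework for AI Content Writers",
    author="Jane Doe",
    author_url=f"{SITE}/authors/jane-doe",
    same_as=["https://www.linkedin.com/in/janedoe-example"],
    published="2025-01-15",
    modified="2025-03-02",
)
# Emit the tag you would paste into the page.
print('<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>")
```

Nesting the Person and Organization inside the Article keeps the example self‑contained; larger sites often define them once and reference them by `@id` instead.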

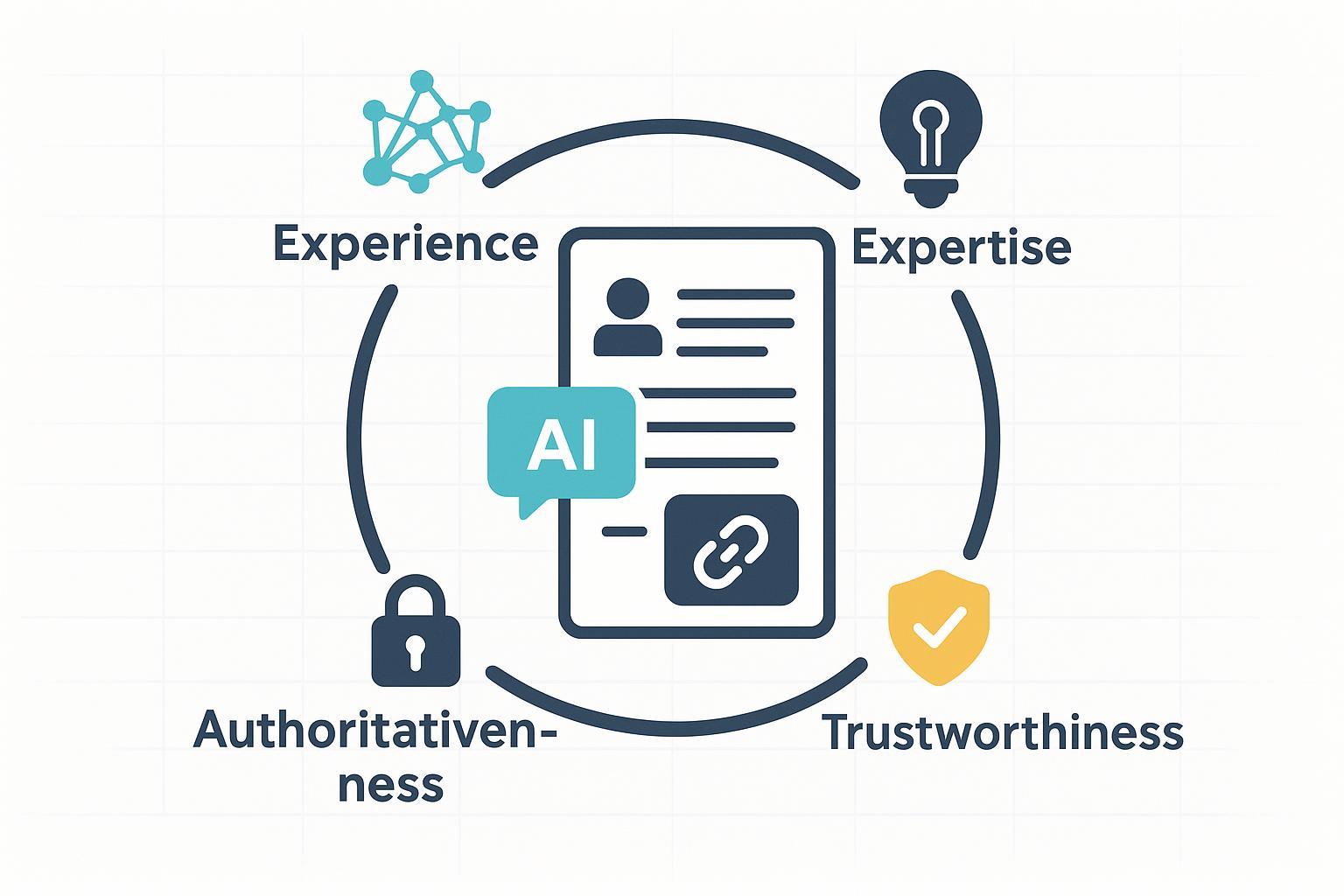

Operationalize the Four Pillars

You don’t “check a box” for E‑E‑A‑T; you design workflows that make each pillar tangible.

Experience: Show the human touch

Readers trust content that shows lived context. Add:

- Firsthand accounts: what you tried, measured, or learned on real projects.

- Case notes: mini case studies with constraints and outcomes.

- Experiments: simple tests and results; even null results build credibility.

In practice: incorporate SME callouts and “What we found” sidebars. Google’s Search Quality Evaluator Guidelines (the 2025 QRG PDF) explain how raters weigh experience within E‑E‑A‑T.

Expertise: Credentials and review

Demonstrate qualification and appropriate review:

- Credentials in bios: degrees, certifications, publications, notable roles.

- Reviewer credit for sensitive topics: add a qualified reviewer and display the review date; reflect both in schema where applicable (schema.org’s reviewedBy and lastReviewed properties on WebPage).

- Editorial standards: link to your publishing principles and keep terminology accurate; AP Style guidance on generative AI helps avoid confusion. The Associated Press updated its generative AI standards in 2025; see AP’s coverage of the update.
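If you display a reviewer, one option is to generate both the visible review line and the markup from the same inputs so they cannot drift apart. This sketch assumes schema.org’s `reviewedBy` and `lastReviewed` properties on `WebPage`; the reviewer, URL, and helper names are hypothetical, and these properties are not promised to produce any search feature.

```python
import json
from datetime import date


def review_markup(url: str, reviewer: str, job_title: str, reviewed_on: date) -> dict:
    """WebPage JSON-LD recording who reviewed the page and when."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "url": url,
        "lastReviewed": reviewed_on.isoformat(),
        "reviewedBy": {"@type": "Person", "name": reviewer, "jobTitle": job_title},
    }


def review_label(reviewer: str, job_title: str, reviewed_on: date) -> str:
    """The human-readable line shown near the byline, built from the same inputs."""
    return (f"Reviewed by {reviewer}, {job_title}, on "
            f"{reviewed_on:%B} {reviewed_on.day}, {reviewed_on.year}")


reviewed = date(2025, 2, 10)
markup = review_markup("https://www.example.com/guides/drug-interactions",
                       "Sam Lee", "Clinical pharmacist", reviewed)
print(review_label("Sam Lee", "Clinical pharmacist", reviewed))
print(json.dumps(markup, indent=2))
```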

Authoritativeness: Stand on solid sources

Authority is earned by connection to reputable knowledge.

- Cite primary sources with descriptive, in‑sentence links.

- Maintain consistent identity: sameAs links to authoritative profiles (LinkedIn, journal author pages, conference speaker pages).

- Encourage reputable mentions and backlinks through original research and useful frameworks.

A practical cue: cite canonical (original) pages rather than summaries, and keep link density low enough that the narrative stays readable.
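A rough metric such as links per 100 words can flag over‑linked drafts before an editor reads them. This sketch uses Python’s standard `html.parser`; the ceiling of 2 links per 100 words is an assumed house rule, not a Google number, and the word count is approximate because a word wrapped in inline tags may be counted twice.

```python
from html.parser import HTMLParser

MAX_LINKS_PER_100_WORDS = 2.0  # hypothetical house rule; tune to your style


class LinkCounter(HTMLParser):
    """Counts <a> tags that carry an href and collects the text between tags."""

    def __init__(self) -> None:
        super().__init__()
        self.links = 0
        self.text_parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a" and any(name == "href" for name, _ in attrs):
            self.links += 1

    def handle_data(self, data):
        self.text_parts.append(data)


def links_per_100_words(html: str) -> float:
    parser = LinkCounter()
    parser.feed(html)
    parser.close()
    # Joining with spaces can split a word wrapped in inline tags; fine for a rough check.
    words = len(" ".join(parser.text_parts).split())
    return 0.0 if words == 0 else 100 * parser.links / words


def too_dense(html: str) -> bool:
    return links_per_100_words(html) > MAX_LINKS_PER_100_WORDS


snippet = ('<p>Google <a href="https://developers.google.com/search">documents</a> '
           'this in its guidance for site owners.</p>')
print(round(links_per_100_words(snippet), 1), too_dense(snippet))
```

A single short paragraph will always look dense; run the check on the full article body.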

Trustworthiness: Accuracy, transparency, and safe tech

Trust comes from accuracy plus a transparent process.

- Accuracy: verify key facts against primary sources; avoid false precision, such as exact figures you can’t source.

- Transparency: disclose AI assistance when material; document updates and corrections.

- Policies: publish editorial standards, corrections, and contact info.

- Technical: stable site, HTTPS, good performance, and honest structured data.
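“Honest structured data” can be spot‑checked automatically by confirming that key marked‑up values actually appear in the visible text. A minimal sketch, assuming you already have the Article JSON‑LD as a dict and the page’s rendered text as a string; `markup_mismatches` is a hypothetical helper that checks only the headline, author names, and `dateModified` (in ISO or “March 2, 2025” form).

```python
from datetime import date


def markup_mismatches(article: dict, visible_text: str) -> list[str]:
    """List marked-up values that a reader cannot actually see on the page."""
    problems = []
    headline = article.get("headline", "")
    if headline and headline not in visible_text:
        problems.append(f"headline not visible: {headline!r}")

    # schema.org allows one author object or a list of them.
    authors = article.get("author") or []
    if isinstance(authors, dict):
        authors = [authors]
    for person in authors:
        name = person.get("name", "")
        if name and name not in visible_text:
            problems.append(f"author not visible: {name!r}")

    modified = article.get("dateModified")
    if modified:
        d = date.fromisoformat(modified[:10])
        shown = {d.isoformat(), f"{d:%B} {d.day}, {d.year}"}
        if not any(form in visible_text for form in shown):
            problems.append(f"dateModified {modified} not shown on page")
    return problems


article = {
    "headline": "E-E-A-T for AI Writers",
    "author": {"@type": "Person", "name": "Jane Doe"},
    "dateModified": "2025-03-02",
}
page_text = "E-E-A-T for AI Writers\nBy Jane Doe | Last updated March 2, 2025"
stale_text = "E-E-A-T for AI Writers\nBy Jane Doe | Last updated January 9, 2025"
print(markup_mismatches(article, page_text))
print(markup_mismatches(article, stale_text))
```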

Google’s March 2024 core update and spam policy changes tightened enforcement against scaled, unhelpful content; see Google’s own summary of the update. Keep outputs human‑reviewed and genuinely useful.

Tooling Snapshot: Editors and QA/Detectors

Below is a pragmatic snapshot. Pricing changes often; verify on vendor sites before you buy.

| Tool | Primary Use | Typical Starting Price | Notes |
|---|---|---|---|
| SurferSEO | SEO content editor and audits | ~$99/mo (verify) | Strong optimization; no native AI detector. |
| Clearscope | Premium SEO editor | ~$189/mo (verify) | Clean SERP‑aligned guidance; higher cost. |
| Frase | Briefs, SERP analysis, AI drafting | < $100/mo (verify) | Beginner‑friendly; watch for generic AI outputs. |
| Jasper | AI drafting with brand voice | Varies (verify) | Fast generation; needs human editing; has plagiarism/AI checks via partners. |
| Originality.ai | AI detection & plagiarism | Varies (verify) | Use as triage; detectors have false positives/negatives. |
| Copyleaks | AI/plagiarism detection | Varies (verify) | Helpful signals; treat results cautiously. |

Detectors aren’t judges. Mixed‑authorship content, paraphrasing, and model drift can fool them. Use them as part of QA, not as gatekeepers without human review.
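To keep detectors in a triage role, route scores to a review path rather than a reject path. This sketch assumes a detector that reports a 0 to 1 “likely AI” score (many tools report percentages, so divide by 100); the 0.8 threshold and the route names are hypothetical.

```python
from enum import Enum


class Route(Enum):
    STANDARD_REVIEW = "standard human review"
    DEEP_REVIEW = "extra SME and fact-check pass"


def triage(ai_score: float, has_sme_signoff: bool, threshold: float = 0.8) -> Route:
    """Map a detector score to a review path; there is deliberately no reject route."""
    if not 0.0 <= ai_score <= 1.0:
        raise ValueError("expected a detector score between 0 and 1")
    # A high score or a missing SME sign-off earns closer human scrutiny, never auto-rejection.
    if ai_score >= threshold or not has_sme_signoff:
        return Route.DEEP_REVIEW
    return Route.STANDARD_REVIEW


print(triage(0.92, has_sme_signoff=True).value)
print(triage(0.35, has_sme_signoff=True).value)
```

Leaving out a reject route is the point: the score decides how much human attention a page gets, and a person makes the publishing call.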

SMB/Solo‑Creator Quick Start

Short on time? Here’s a compact routine you can run in about 15 minutes before publishing.

- Verify three key facts against primary sources; add 2–3 authoritative citations.

- Add an author bio with headshot, credentials, and 2–3 sameAs links; mark up with Person schema.

- Insert a clear disclosure if AI assisted: “This article was assisted by generative AI tools. All facts were verified and the content reviewed by [Name/Role].” Place near the byline.

- Add Article schema with author and publisher; ensure WebSite includes publishingPrinciples pointing to your editorial guidelines.

- Run a quick language and style pass, and confirm Core Web Vitals aren’t failing (for example, in PageSpeed Insights or Search Console’s Core Web Vitals report).
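The quick start can be captured as a pre‑publish check. This sketch assumes a hand‑filled `page` dict whose keys are all hypothetical, and it uses Google’s published “good” Core Web Vitals thresholds: LCP at or under 2.5 s, INP at or under 200 ms, and CLS at or under 0.1, assessed at the 75th percentile of page loads.

```python
# Google's "good" Core Web Vitals thresholds (75th percentile of page loads).
CWV_GOOD = {"lcp_s": 2.5, "inp_ms": 200, "cls": 0.1}


def prepublish_issues(page: dict) -> list[str]:
    """Return the quick-start items this page still fails; empty means ready."""
    issues = []
    if page.get("facts_verified", 0) < 3:
        issues.append("verify at least three key facts against primary sources")
    if len(page.get("citations", [])) < 2:
        issues.append("add 2-3 authoritative citations")
    if len(page.get("author_same_as", [])) < 2:
        issues.append("add 2-3 sameAs links to the author bio")
    if page.get("ai_assisted") and not page.get("disclosure_near_byline"):
        issues.append("add an AI-assistance disclosure near the byline")
    if not page.get("publishing_principles_url"):
        issues.append("point WebSite.publishingPrinciples at your editorial guidelines")

    vitals = page.get("cwv", {})
    for metric, limit in CWV_GOOD.items():
        value = vitals.get(metric)
        if value is None:
            issues.append(f"no field data for {metric}")
        elif value > limit:
            issues.append(f"{metric} = {value} exceeds the 'good' threshold of {limit}")
    return issues


page = {
    "facts_verified": 3,
    "citations": ["https://example.org/primary-study", "https://example.gov/dataset"],
    "author_same_as": ["https://www.linkedin.com/in/janedoe-example"],
    "ai_assisted": True,
    "disclosure_near_byline": True,
    "publishing_principles_url": "https://www.example.com/editorial-standards",
    "cwv": {"lcp_s": 3.1, "inp_ms": 150, "cls": 0.05},
}
for issue in prepublish_issues(page):
    print("-", issue)
```

A brand‑new URL usually has no field data yet; lab numbers from PageSpeed Insights are a reasonable stand‑in until real‑user data accumulates.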

Detector Reliability and Publishing Ramp

Should you block content that tests “AI‑written”? Not necessarily. Most detectors are best used as triage signals. False positives are real, and sophisticated human text can be mislabeled. Start with a gradual ramp:

- Pilot: publish a small set of AI‑assisted pages with full human review and E‑E‑A‑T signals.

- Monitor: track engagement and rankings; record corrections and updates.

- Iterate: refine prompts, deepen SME involvement, and expand only when quality holds.

Why rush when a measured rollout tells you what’s actually working?
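The ramp can be reduced to an explicit expand‑or‑hold rule per pilot batch. This is a sketch under assumed numbers: the 10% correction‑rate ceiling, the 90%‑of‑baseline engagement floor, doubling the batch, and the 50‑page cap are all hypothetical, and rankings are left out because they are harder to attribute to a single batch.

```python
from dataclasses import dataclass


@dataclass
class PilotBatch:
    pages: int
    corrections: int            # factual corrections logged after publishing
    avg_engagement: float       # e.g. average engaged time in seconds
    baseline_engagement: float  # same metric for comparable human-only pages


def next_batch_size(batch: PilotBatch, max_correction_rate: float = 0.1,
                    min_engagement_ratio: float = 0.9, cap: int = 50) -> int:
    """Double the batch when quality holds; otherwise hold size and iterate."""
    if batch.pages <= 0 or batch.baseline_engagement <= 0:
        raise ValueError("need at least one page and a positive baseline")
    correction_rate = batch.corrections / batch.pages
    engagement_ratio = batch.avg_engagement / batch.baseline_engagement
    if correction_rate <= max_correction_rate and engagement_ratio >= min_engagement_ratio:
        return min(batch.pages * 2, cap)  # quality held: expand
    return batch.pages                    # quality slipped: refine prompts and SME input first


print(next_batch_size(PilotBatch(pages=10, corrections=1, avg_engagement=95.0, baseline_engagement=100.0)))
print(next_batch_size(PilotBatch(pages=10, corrections=3, avg_engagement=95.0, baseline_engagement=100.0)))
```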

Next Steps

Audit your most important pages: do they show experience, demonstrate expertise, connect to authority, and earn trust? Align your workflow to make those pillars unavoidable. Then, implement structured data so machines can see what readers already feel. For policy clarity and ongoing alignment, bookmark Google’s generative AI content guidance and the March 2024 core update and spam policies, and keep your editorial standards page up to date.