Auto-Localization at Scale: 2025 Best Practices That Actually Ship

If you’re expanding into 10, 20, or 50+ markets, the bottleneck is rarely translation itself—it’s the orchestration: getting the right content into the right workflow, with guardrails, and delivering continuously without breaking quality or compliance. After leading and auditing several large-scale programs, here’s the playbook I’ve seen work reliably in 2025.

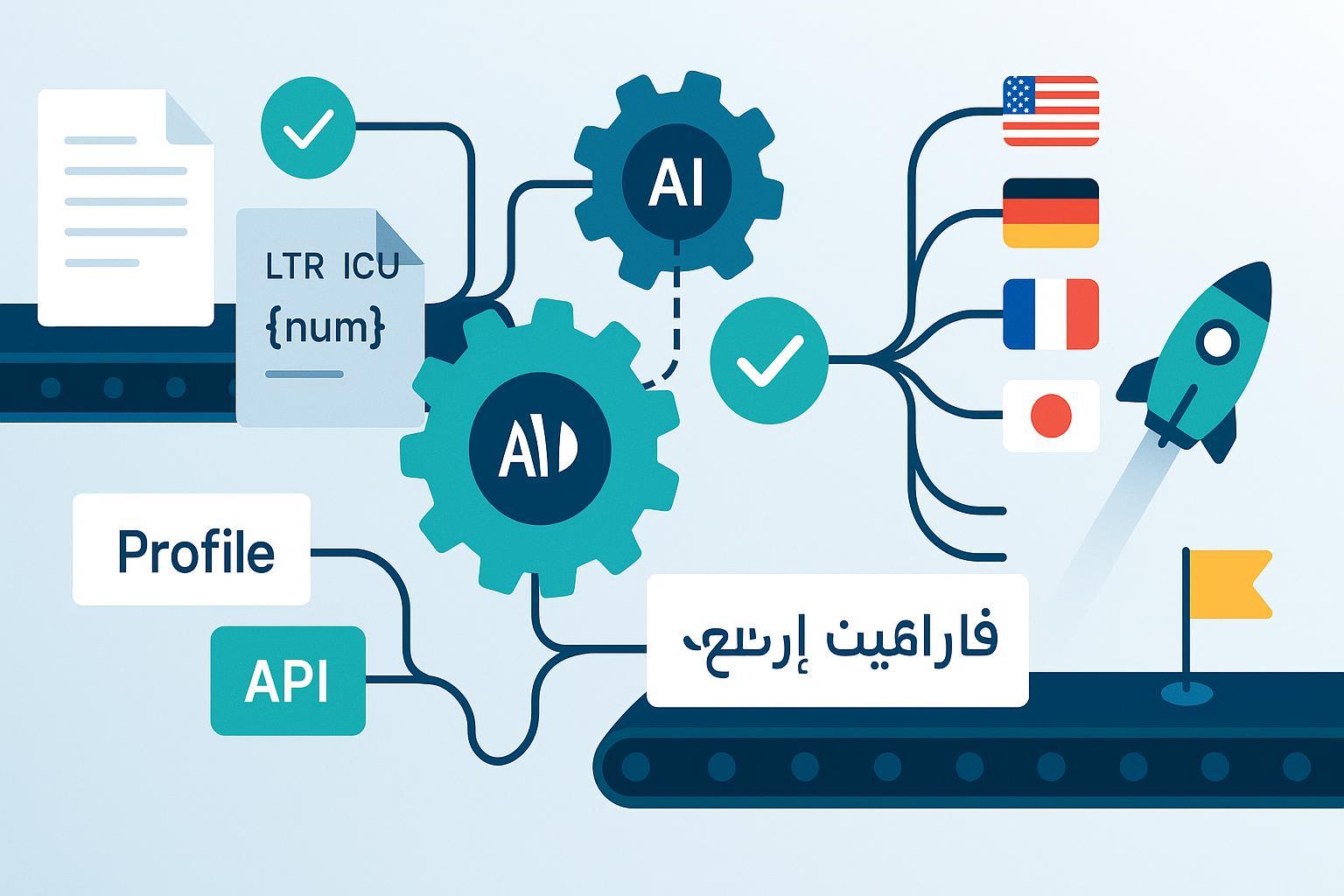

The end-to-end blueprint (tool-agnostic, proven in practice)

Follow these ten steps as your backbone. Adapt details based on your stack and risk profile.

- Source preparation and i18n foundations

- Externalize every translatable string and adopt canonical locale identifiers using IETF BCP 47 language tags per RFC 5646 and RFC 4647.

- Use ICU MessageFormat so plural/gender/selection variants render grammatically at runtime (see the ICU MessageFormat guide (Unicode)).

- Format dates, numbers, and currencies with Unicode CLDR locale data to avoid custom per-locale logic.

- Run pseudo-localization in CI to catch truncation, encoding, ICU placeholder, and bidi issues before you translate.

- Content classification and routing

- Classify content into risk tiers (e.g., UI core, help/support, marketing, legal). Route each tier:

- Low-risk: MT + automated QA.

- Medium-risk: MT + light post-editing.

- High-impact or legal: Human translation and review end-to-end.

- Define “go/no-go” thresholds with measurable metrics (see Quality section below) so routing is auditable and repeatable.

- Terminology and style management

- Maintain a single source of truth for glossaries and style guides; enforce terminology adherence at QA gates before release.

- Keep termbases versioned; changes should trigger rechecks for affected locales.

- MT enablement and adaptation

- Connect multiple MT engines and select per language/domain. Where possible, apply domain adaptation or custom glossaries.

- Collect feedback via MTQE or post-editor inputs to guide engine selection and adaptation.

- Human-in-the-loop post-editing

- For MT output that requires human correction, align your process with ISO 18587:2017 requirements for MT post-editing. Define “light” vs “full” post-editing criteria per content class.

- Provide context (screenshots, product states, user roles) to editors. In my experience, context reduces error rates more than any single MT tweak.

- Automated LQA and validation gates

- Validate ICU patterns, placeholders, and length constraints automatically.

- Enforce terminology and spelling checks before content reaches human review; reserve human time for meaning and tone.

- Use an operational framework like TAUS DQF to standardize quality categories and track error rates over time.

- Pseudo-localization and RTL readiness

- Keep pseudo-localization as a blocking check in PR pipelines. Expand text ~30–50% and bracket strings to reveal truncation/concatenation.

- For RTL, adopt CSS logical properties (for example, margin-inline-start rather than margin-left) and appropriate dir attributes, validating with real RTL content (see W3C CSS Text guidance summarized in WCAG 2.2 techniques).

- CI/CD, feature flags, and OTA delivery

- Decouple release from deploy. Use feature flags for locale rollouts and instant rollback to reduce recovery time (see LaunchDarkly’s RTO vs RPO explainer (2024)).

- Automate string synchronization with your TMS via CLI/API and CI workflows; vendors document GitHub Actions patterns (e.g., Lokalise developers—GitHub Actions).

- For apps, prefer over-the-air (OTA) string updates or edge delivery for faster fixes; see the delivery trade-offs discussed in Vercel’s edge delivery primer.

- Keep a fallback locale strategy that fails gracefully when a string is missing.

- Accessibility in every locale

- Ensure localized content and UI meet WCAG 2.2 success criteria. Localize alt text, labels, and error messages and test with assistive tech.

- If you serve EU public sector or sell into it, be mindful of ETSI EN 301 549 alignment.

- Monitoring, analytics, and feedback loops

- Instrument logs and analytics with locale context. Track translation defects, runtime ICU/placeholder errors, and user metrics by locale.

- Feed production findings into terminology updates, MT engine selection, and content routing rules.
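The pseudo-localization check from the steps above can be sketched in a few lines. This is a minimal illustration, not any particular tool's algorithm: the accent map, the `~` padding, the 40% default expansion, and the name `pseudo_localize` are all assumptions chosen for the example, and the regex only protects simple `{name}` arguments rather than full nested ICU plural/select syntax.

```python
import re

# Hypothetical accent map; extend it to cover whichever characters you want to exercise.
ACCENTS = str.maketrans({
    "a": "á", "e": "é", "i": "í", "o": "ó", "u": "ú",
    "A": "Á", "E": "É", "I": "Í", "O": "Ó", "U": "Ú",
    "c": "ç", "n": "ñ",
})

# Matches simple arguments such as {name} or {count}.
# Nested plural/select bodies are out of scope for this sketch.
PLACEHOLDER = re.compile(r"\{[^{}]*\}")


def pseudo_localize(source: str, expansion: float = 0.4) -> str:
    """Accent the letters, pad to simulate text expansion, and bracket the result."""
    parts = []
    last = 0
    for match in PLACEHOLDER.finditer(source):
        # Accent the literal text before the placeholder.
        parts.append(source[last:match.start()].translate(ACCENTS))
        # Placeholders must survive untouched so runtime formatting still works.
        parts.append(match.group(0))
        last = match.end()
    parts.append(source[last:].translate(ACCENTS))
    # Padding approximates the 30-50% growth many target languages need.
    padding = "~" * round(len(source) * expansion)
    # Brackets make truncated or concatenated strings easy to spot in the UI.
    return "[" + "".join(parts) + padding + "]"
```

A string that shows up in the UI without its closing bracket has been truncated; a string with no brackets at all was probably never externalized.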

Summary: Treat localization as a productized pipeline. Strong i18n, risk-based routing, and automated QA are what make scale sustainable.

What to automate vs. what to keep human (how to decide in 2025)

The right split isn’t ideological; it’s risk-based and measurable.

Automate by default for:

- Repetitive, low-risk, high-volume content (e.g., knowledge base, changelogs) with strong terminology coverage.

- In-product microcopy variants when intent is unambiguous and ICU constraints are validated.

Keep humans in the loop for:

- High-impact flows (checkout, legal terms, medical/financial content) where tone and liability matter.

- Brand voice and persuasive marketing where cultural nuance drives conversion.

Guardrails that work:

- Define MQM/DQF-style error categories and acceptable thresholds per content class; gate promotions based on those thresholds (leveraging TAUS DQF operational metrics).

- Require ISO-aligned post-editing for classes routed to MT+PE (see ISO 18587:2017).

- Mandate context artifacts (screenshots, design links) for any human-edited work.
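To make the routing and gating rules above auditable, it can help to keep them as data rather than tribal knowledge. The sketch below is one possible shape, with loud caveats: the class-to-route mapping and the numeric thresholds are hypothetical placeholders, not recommended values, and the names `ROUTING`, `MAX_ERRORS_PER_1K`, `route_for`, and `passes_gate` are invented for illustration.

```python
from enum import Enum


class Route(Enum):
    MT_ONLY = "mt_plus_automated_qa"
    MT_LIGHT_PE = "mt_plus_light_post_editing"
    HUMAN = "human_translation_and_review"


# Hypothetical routing matrix; replace with your own documented decisions.
ROUTING = {
    "support": Route.MT_ONLY,
    "ui": Route.MT_LIGHT_PE,
    "marketing": Route.HUMAN,
    "legal": Route.HUMAN,
}

# Hypothetical go/no-go thresholds: maximum errors per 1,000 words.
MAX_ERRORS_PER_1K = {"support": 10.0, "ui": 5.0, "marketing": 2.0, "legal": 0.5}


def route_for(content_class: str) -> Route:
    """Unknown content classes take the most conservative route."""
    return ROUTING.get(content_class, Route.HUMAN)


def passes_gate(content_class: str, errors: int, words: int) -> bool:
    """Return True when the measured error rate is within the class threshold."""
    if words <= 0:
        raise ValueError("words must be positive")
    rate = errors / words * 1000
    # Unknown classes get a zero tolerance so they cannot slip through unnoticed.
    return rate <= MAX_ERRORS_PER_1K.get(content_class, 0.0)
```

Keeping the matrix in version control means every change to a route or threshold leaves a reviewable diff.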

Technical foundations you should not skip

- Locale identifiers: Use BCP 47 language tags as your canonical identifiers (RFC 5646, RFC 4647).

- Runtime formatting: Lean on CLDR-driven libraries for dates/numbers/currencies (Unicode CLDR).

- Message rendering: Implement ICU MessageFormat for plural/gender/select (ICU User Guide).

- Accessibility: Apply WCAG 2.2 throughout the localized experience.

- Compliance: Use ISO 17100 when vetting translation service providers for process maturity (ISO 17100:2015).
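As a small illustration of the locale-identifier point, the sketch below applies the casing conventions recommended in RFC 5646 (lowercase language, title-case script, uppercase region, lowercase everything after a singleton). It is deliberately limited: it does not validate subtags against the IANA registry, and the underscore-to-hyphen conversion and the name `normalize_tag` are assumptions added for convenience.

```python
def normalize_tag(tag: str) -> str:
    """Apply RFC 5646 recommended casing to a language tag (no registry validation)."""
    # Accept POSIX/Java-style underscores as a convenience (an assumption of this sketch).
    subtags = tag.replace("_", "-").split("-")
    result = [subtags[0].lower()]
    # After a singleton such as "u" or "x", every subtag stays lowercase.
    seen_singleton = len(subtags[0]) == 1
    for sub in subtags[1:]:
        if len(sub) == 1:
            seen_singleton = True
        if seen_singleton:
            result.append(sub.lower())
        elif len(sub) == 4 and sub.isalpha():
            result.append(sub.title())   # script, e.g. Hant
        elif len(sub) == 2 and sub.isalpha():
            result.append(sub.upper())   # region, e.g. BR
        else:
            result.append(sub.lower())   # numeric regions, variants
    return "-".join(result)
```

Running every tag through one function like this at system boundaries (code, CMS, analytics) is a cheap way to keep `pt_br`, `pt-br`, and `pt-BR` from becoming three different cache keys.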

CI/CD operationalization and release safety

This is where many teams stumble. The goal is safe, frequent, and observable releases.

- Feature-flag locales: Tie locale enablement to flags so you can ramp or roll back without redeploy. The concept is standard in modern engineering practices (see GitLab’s engineering roadmap notes on feature flags).

- OTA and edge delivery: For mobile and hybrid apps, OTA strings reduce app store dependency; for web, edge delivery can minimize latency for global audiences (see Vercel’s edge delivery overview).

- Automated LQA gates: Break builds when ICU placeholders mismatch, when glossary terms are violated, or when strings overflow UI constraints.

- Fallbacks and resilience: Don’t block UI when a translation is missing; degrade gracefully to a base language and log the event with locale context.

- Observability by locale: Segment error tracking and UX metrics by locale so issues are visible and testable.
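The fallback behavior described above can be sketched as a lookup that walks from the most specific tag to a base language and logs every miss with locale context. This is a simplified take on truncation-style lookup (it does not handle extension singletons), and the catalog shape, the logger name `l10n`, and the function names are assumptions for illustration.

```python
import logging

logger = logging.getLogger("l10n")


def fallback_chain(locale: str, base: str = "en") -> list[str]:
    """'pt-BR' -> ['pt-BR', 'pt', 'en'] by dropping subtags from the right."""
    parts = locale.split("-")
    chain = ["-".join(parts[:i]) for i in range(len(parts), 0, -1)]
    if base not in chain:
        chain.append(base)
    return chain


def translate(key: str, locale: str, catalogs: dict[str, dict[str, str]],
              base: str = "en") -> str:
    """Return the best available string; never raise because a translation is missing."""
    for candidate in fallback_chain(locale, base):
        text = catalogs.get(candidate, {}).get(key)
        if text is not None:
            if candidate != locale:
                # Log the degradation so it shows up in locale-segmented dashboards.
                logger.warning("missing translation key=%s locale=%s served=%s",
                               key, locale, candidate)
            return text
    logger.error("untranslated key=%s locale=%s", key, locale)
    return key  # last resort: show the key rather than break the UI
```

With `catalogs = {"en": {"hi": "Hello"}, "pt": {"hi": "Olá"}}`, a request for `pt-BR` is served from `pt` and produces one warning that names both the requested and the served locale.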

Quality management at scale (without drowning in reviews)

- Define a taxonomy and thresholds: Adopt a quality framework that your translators, PMs, and engineers can all understand. DQF categories and dashboards help standardize review and reporting (TAUS DQF).

- Separate form from meaning: Let automated checks handle form (placeholders, capitalization, terminology). Reserve humans for meaning, tone, and cultural appropriateness.

- Terminology governance: Changes to termbases should trigger re-validation of impacted strings and, if needed, retranslation workflows.

- Context is king: Provide screenshots and environment links in TMS tickets. Doing so prevents most edits that are linguistically correct but wrong in context.
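The "form" half of that split lends itself to automation. Below is a minimal sketch of a gate that compares placeholders between source and target and checks glossary adherence. It assumes simple `{name}` arguments only (nested ICU plural/select bodies would need a real parser), uses naive case-insensitive substring matching for terms, and the function name and message formats are hypothetical.

```python
import re
from collections import Counter

# Matches simple arguments like {name}; does not parse nested ICU syntax.
PLACEHOLDER = re.compile(r"\{\s*(\w+)\s*\}")


def check_translation(source: str, target: str, glossary: dict[str, str]) -> list[str]:
    """Return a list of form problems; an empty list means the gate passes."""
    problems = []
    src = Counter(PLACEHOLDER.findall(source))
    tgt = Counter(PLACEHOLDER.findall(target))
    missing = src - tgt   # placeholders dropped by the translation
    extra = tgt - src     # placeholders the source never defined
    if missing:
        problems.append("missing placeholders: " + ", ".join(sorted(missing)))
    if extra:
        problems.append("unexpected placeholders: " + ", ".join(sorted(extra)))
    # Glossary maps a source term to its required target rendering.
    for term, required in glossary.items():
        if term.lower() in source.lower() and required.lower() not in target.lower():
            problems.append(f"glossary: '{term}' should be rendered as '{required}'")
    return problems
```

In CI, a non-empty result would fail the build; human reviewers then only see strings that are already structurally sound.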

Governance, privacy, and regulatory guardrails (don’t skip this)

If you process user-generated content, support tickets, or any text containing PII, you need explicit controls.

- Data classification and routing: Classify strings by sensitivity and default to redaction/anonymization before sending to external MT/LLM services.

- Cross-border transfers and data residency: Map data flows and align with GDPR transfer mechanisms (adequacy decisions, SCCs, BCRs). The European Data Protection Board’s Opinion 28/2024 on AI models and its 2025 guidance updates underscore lawful basis and transparency expectations; for cross-border considerations, see the EDPB’s 2025 final guidelines on Article 48 transfers.

- Vendor agreements: Ensure retention controls, access logs, and DPAs are in place; prefer enterprise MT/LLM offerings with explicit data handling switches.
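Redaction-by-default can be sketched as a reversible masking step that runs before text leaves your boundary. The example below is intentionally naive: the two regular expressions are illustrative and will miss many kinds of PII, the `__LABEL_n__` token format is an assumption, and you would need to confirm that your MT or LLM service passes such tokens through unchanged.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> tuple[str, dict[str, str]]:
    """Replace matches with opaque tokens and return the token-to-value mapping."""
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        def replace(match: re.Match, label: str = label) -> str:
            token = f"__{label}_{len(mapping)}__"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(replace, text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back after translation returns."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text
```

The mapping never leaves your systems, so the external service only ever sees the tokens.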

Measuring ROI you can defend

Public, apples-to-apples ROI metrics for localization automation are still scarce. Research venues like the WMT 2024 shared task series and the MT Summit 2025 proceedings focus primarily on quality metrics (human judgments, BLEU) rather than operations. That’s your cue to measure internally with discipline.

Instrument these KPIs pre- and post-automation:

- Speed: Translation lead time per locale; cycle time from string commit to production.

- Throughput: Words/person-hour; automated vs human-translated volume share.

- Quality: Error rates per 1k words using a standardized taxonomy; defect leakage to production; rework rate.

- Cost: Cost per word by content class; cost per release per locale.

- Business: Organic traffic and conversion uplift by localized page; support ticket deflection in local languages.

Implementation tips:

- Add timestamps to each localization step in CI/CD to compute cycle times automatically.

- Export LQA reports via TMS APIs into your data warehouse to trend error categories.

- Use feature flags to A/B rollout locales and isolate impact.
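As a rough sketch of the first two tips, the helpers below compute cycle time from ISO 8601 timestamps and an error rate per 1,000 words. The event fields (`locale`, `committed_at`, `released_at`) and function names are hypothetical; in practice the inputs would come from your CI logs and TMS exports.

```python
from datetime import datetime
from statistics import median


def cycle_time_hours(committed_at: str, released_at: str) -> float:
    """Hours between string commit and production release (ISO 8601 inputs)."""
    start = datetime.fromisoformat(committed_at)
    end = datetime.fromisoformat(released_at)
    return (end - start).total_seconds() / 3600


def errors_per_1k_words(error_count: int, word_count: int) -> float:
    """Normalize LQA findings so locales of different sizes are comparable."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return error_count / word_count * 1000


def median_cycle_time_by_locale(events: list[dict[str, str]]) -> dict[str, float]:
    """Group release events by locale and report the median cycle time in hours."""
    by_locale: dict[str, list[float]] = {}
    for event in events:
        hours = cycle_time_hours(event["committed_at"], event["released_at"])
        by_locale.setdefault(event["locale"], []).append(hours)
    return {locale: median(values) for locale, values in by_locale.items()}
```

Medians are used here instead of means so that one stalled release does not dominate a locale's number; that is a design choice, not a requirement.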

Toolbox: Where each tool actually fits

Use a mix that matches your content types and workflows. Objective placements below are based on publicly documented capabilities.

- QuickCreator: AI blogging and content localization support for marketing teams

- Disclosure: We may use or partner with this product in some projects; evaluate independently for your needs.

- Fit: Multilingual content generation, SEO localization, collaboration, and publishing orchestration. It complements (not replaces) a TMS for string-level app localization.

- Lokalise: Continuous localization platform for apps

- Fit: CI/CD integrations, mobile SDKs, automation via GitHub Actions, and support for OTA-style workflows (see Lokalise developers—GitHub Actions).

- Trade-offs: Strong developer workflows; you’ll still need marketing CMS workflows elsewhere.

- Smartling: Enterprise localization platform

- Fit: Vendor/linguist management, QA checks, MT routing, and enterprise connectors (per Smartling help/docs). Good when you centralize multiple content types.

- Trade-offs: Enterprise power with corresponding complexity; plan onboarding carefully.

Selection criteria you should apply regardless of vendor:

- Integrations: Git, design tools, CMS, ticketing, analytics.

- QA automation depth: ICU/placeholder checks, terminology enforcement, length/constraints, spellcheck.

- MT routing and adaptation: Multi-engine connectors; custom glossaries.

- Governance: Roles/permissions, audit logs, data residency options, DPAs.

- Analytics: Exportable quality metrics, API access, webhooks.

A simple workflow example: marketing localization without drama

Scenario: You’re rolling out a product blog and landing page to 15 languages.

- Authoring and SEO localization: Generate source and multilingual drafts with an AI content platform geared to marketing workflows like QuickCreator. It provides multilingual generation and SEO optimization, then hands off locale drafts to your editors.

- Quality and consistency: Enforce terminology, style, and placeholders within your TMS, using automated QA gates before human review.

- Publishing: After review, publish to your CMS or WordPress. For app strings (e.g., banners), sync through your TMS via CI and optionally OTA for faster fixes (see Lokalise developers—GitHub Actions patterns).

- Measurement: Track localized page traffic and conversions per market; compare to baseline and feed learnings back into termbases and prompts.

Note: This pattern keeps creation and publishing velocity high while preserving quality and governance. It’s not tied to any one vendor and scales well when you add languages.

Common pitfalls and how to avoid them

- Mixing locale codes: Inconsistent language tags create cache and routing bugs. Standardize on BCP 47 (RFC 5646).

- Skipping ICU validation: Runtime errors from malformed placeholders are avoidable—validate in CI.

- Treating accessibility as optional: Localized alt text and form labels are mandatory for inclusive UX and compliance (WCAG 2.2).

- Sending PII to public MT: Classify content; redact by default; align with EDPB guidance on AI models and data transfers (EDPB Opinion 28/2024).

- No observability by locale: If errors aren’t segmented, you’ll revert to guesswork.

Rollout and change management (make it stick)

- Pilot one or two locales per content class and measure. Expand once KPIs stabilize.

- Enable feature-flag rollouts to uncouple code from content, and to test safely in production (see GitLab feature flag practices).

- Train translators and editors on your termbase, style guide, and context tools. Publish “what good looks like” examples.

- Document the routing matrix and QA thresholds so anyone can audit decisions.
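If you do not yet have a feature-flag service, the staged locale rollout above can be approximated with deterministic bucketing. This sketch is not the API of any flag vendor; `in_rollout`, its parameters, and the hashing scheme are assumptions chosen so that a given user always lands in the same bucket for a given locale.

```python
import hashlib


def in_rollout(user_id: str, locale: str, percent: int) -> bool:
    """Return True if this user falls inside the rollout percentage for the locale."""
    # Hash locale and user together so rollouts for different locales are independent.
    digest = hashlib.sha256(f"{locale}:{user_id}".encode("utf-8")).digest()
    # Map the first four bytes of the hash to a stable bucket in [0, 100).
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent
```

Because the bucket is stable, raising `percent` from 10 to 50 only adds users and never removes anyone already enabled, and setting it to 0 acts as an immediate rollback.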

Field checklist (copy/paste into your next sprint)

- Locale hygiene

- [ ] BCP 47 tags standardized across code, CMS, and analytics

- [ ] CLDR-driven number/date/currency formatting implemented

- [ ] ICU MessageFormat used for plural/gender/select

- Risk-based routing

- [ ] Content classes defined with owners (UI, support, marketing, legal)

- [ ] Routing rules (MT-only, MT+PE, human) documented with thresholds

- [ ] Context artifacts required for human reviews

- Automation & QA

- [ ] Pseudo-localization runs in CI with expansion and bidi tests

- [ ] Automated gates: ICU/placeholder, terminology, length, spelling

- [ ] LQA metrics tracked via DQF categories and dashboards

- Delivery & release

- [ ] CI/CD sync between repo, TMS, and CMS established

- [ ] Locale feature flags in place; rollback tested

- [ ] OTA/edge delivery strategy defined for apps/web

- Accessibility & compliance

- [ ] WCAG 2.2 checks across locales

- [ ] DPAs and data handling documented; sensitive data redaction in MT

- [ ] GDPR transfer mechanisms mapped for vendors

- Measurement

- [ ] KPI baselines captured (speed, throughput, quality, cost, business)

- [ ] Locale-segmented analytics and logging implemented

- [ ] A/B or staggered locale rollouts for impact analysis

Final thought

Sustainable auto-localization at scale isn’t about chasing a “perfect” engine or tool. It’s the discipline of strong i18n, risk-based routing, automated QA, and safe delivery practices—measured relentlessly. If you ship with those habits, adding the twentieth language feels like adding the second.