How to Align Content With AI Model Behavior (2025 Best Practices)

When generative models draft your articles, brief your customers, or suggest policy language, alignment stops being abstract. It’s the difference between a trustworthy workflow and a costly correction cycle. Think of alignment as both the steering wheel (direction) and the speed governor (constraints): you need to guide behavior and cap risk at the same time.

Outer vs. inner alignment—what practitioners need to watch

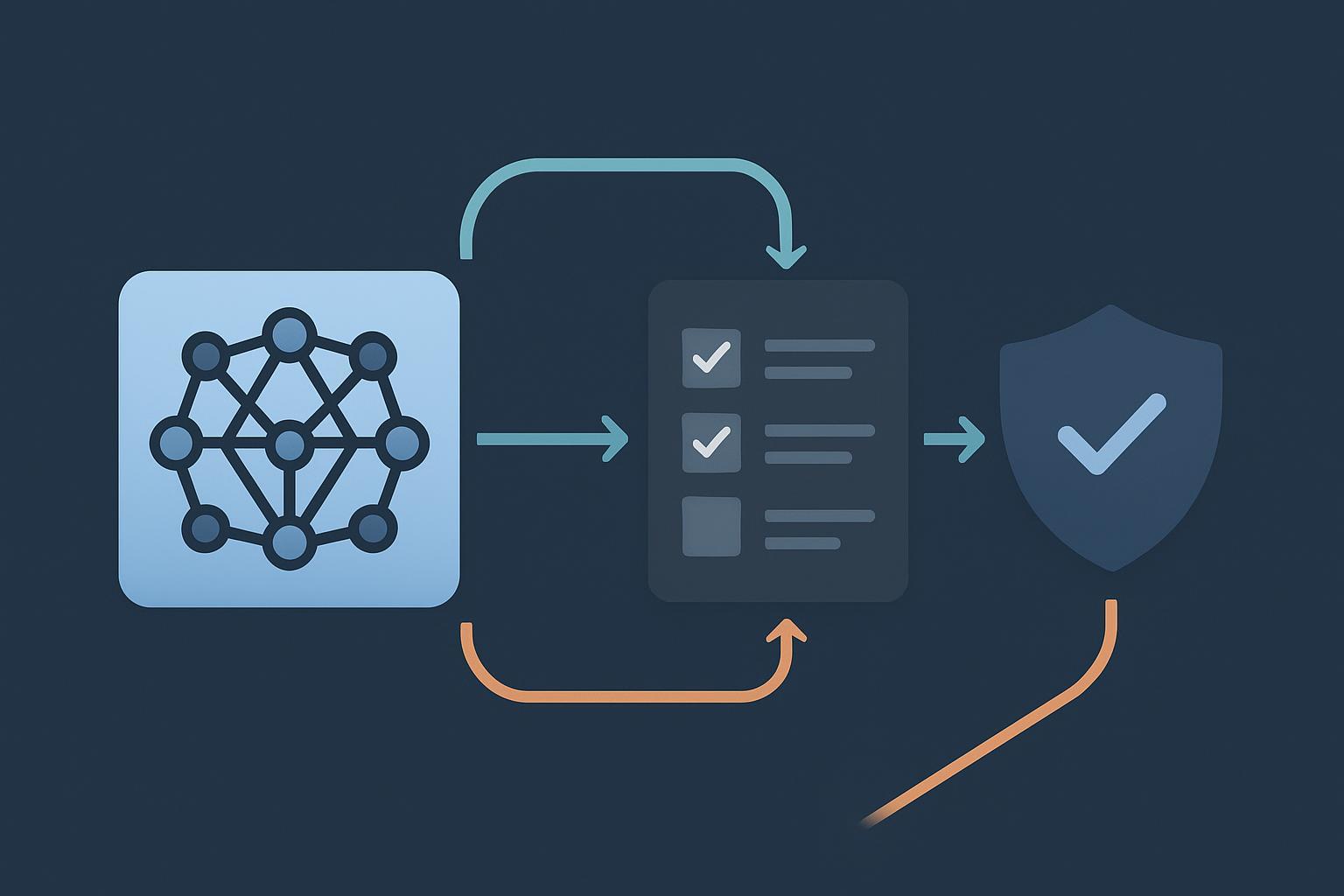

Outer alignment matches system behavior to stated goals (policies, editorial style, safety rules). Inner alignment concerns what the model “learns to optimize” internally; deceptive or reward‑hacking tendencies can pass surface tests yet fail under pressure. For content systems, that gap shows up as persuasive but unfounded claims, brittle refusals, or jailbreak susceptibility. The takeaway: you need layered controls (policy prompts and filters, plus deeper technical measures and human review) so that behavior that passes surface tests also holds up under adversarial pressure.

Post‑training alignment: RLHF vs. DPO

Reinforcement Learning from Human Feedback (RLHF) and Direct Preference Optimization (DPO) dominate post‑training. DPO simplifies pipelines by skipping an explicit reward model, while RLHF remains flexible when you need nuanced trade‑offs. Practical guidance:

- Choose DPO when you want faster iteration on instruction following or editorial tone, and your team prefers simpler training loops.

- Choose RLHF when safety trade‑offs require reward shaping, risk‑weighted objectives, or more granular control over refusal behavior.

- Beware preference drift: optimizing for “likability” can inflate verbosity or confidence without improving correctness; counter with calibrated evaluation and human spot checks.

For a clear post‑training overview, see the practitioner‑focused explainer on four post‑training approaches in the Snorkel blog’s LLM alignment techniques (2024–2025).
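To make the "no explicit reward model" point concrete, here is a minimal sketch of the per-example DPO loss. It assumes you already have summed log-probabilities of the preferred and rejected responses under both the policy being trained and a frozen reference model; the function and parameter names are illustrative, and `beta=0.1` is only a commonly used starting value, not a recommendation from this article.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,  # assumed default; tune for your data
) -> float:
    """Per-example DPO loss from sequence log-probabilities.

    The loss falls as the policy raises the preferred response's
    log-probability (relative to the reference model) more than it
    raises the rejected response's.
    """
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    return -log_sigmoid(beta * (chosen_margin - rejected_margin))


# Usage: a policy that favors the chosen response more than the reference does
# gets a loss below log(2), which is the value at "no preference learned yet".
example = dpo_loss(-10.0, -20.0, -12.0, -15.0)
```

The preference signal comes straight from paired comparisons, which is why the training loop is simpler than RLHF. It is also why preference drift matters: the loss rewards whatever annotators preferred, whether or not it was correct.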

Mechanistic interpretability as practical steering

Recent work shows that sparse autoencoders can isolate interpretable features in transformer activations and let you nudge specific behaviors. Anthropic’s 2024 research demonstrates scalable pipelines that extract millions of features from a production‑scale model and perform causal interventions that steer outputs in controlled tests; see their publication, “Scaling Monosemanticity” (Anthropic, 2024). What does this mean for content alignment?

- Treat interpretability as an adjunct, not a silver bullet. Use it to identify risky features (e.g., sycophancy or leakage patterns) and pair with policy filters.

- Pilot “suppress/boost” features for known hazards in a pre‑production model, then verify with adversarial test suites.

- Document limits: local interventions don’t guarantee global safety; keep human review in the loop for high‑risk outputs.
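As a rough illustration of what "suppress/boost" means mechanically, the sketch below shifts an activation vector along a single feature direction until its projection hits a target value. This is a deliberate simplification and not the published method: real pipelines operate on a trained sparse autoencoder inside the model's forward pass, and the vectors and function names here are hypothetical toy values.

```python
import math


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def unit(v: list[float]) -> list[float]:
    """Return v scaled to unit length."""
    norm = math.sqrt(dot(v, v))
    if norm == 0:
        raise ValueError("feature direction must be non-zero")
    return [x / norm for x in v]


def feature_strength(activation: list[float], direction: list[float]) -> float:
    """Projection of the activation onto the (normalized) feature direction."""
    return dot(activation, unit(direction))


def steer(activation: list[float], direction: list[float], target: float) -> list[float]:
    """Move the activation along the feature direction so that its
    projection equals `target` (0.0 suppresses, a larger value boosts)."""
    d = unit(direction)
    delta = target - dot(activation, d)
    return [a + delta * di for a, di in zip(activation, d)]


# Toy example: a 3-dimensional activation and a hypothetical "risky" direction.
activation = [1.0, 2.0, 3.0]
risky_direction = [0.0, 0.0, 2.0]
suppressed = steer(activation, risky_direction, target=0.0)
boosted = steer(activation, risky_direction, target=6.0)
```

Only the component along the chosen direction changes, which is what makes the intervention local. That locality is also the limit noted above: nothing here tells you what other behaviors depend on the same direction.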

Safety evaluation and red teaming

Alignment quality hinges on rigorous testing before and after release. Two resources can anchor your program:

- Benchmarks and attacks: JailbreakBench (2025) consolidates prompts, attacks, and leaderboards to measure Attack Success Rate (ASR), the share of adversarial prompts that elicit a policy‑violating response. HarmBench standardizes how refusal robustness is evaluated and reports how much a defense lowers ASR (for example, a 2024 study shows a defense reducing ASR from 31.8% to 5.9% on a 7B model); review the methodology in HarmBench (arXiv, 2024).

- Enterprise security playbooks: The OWASP Top 10 for LLM Applications (v2025) provides an actionable checklist for prompt‑injection defenses, data leakage controls, privileged action gating, and human‑in‑the‑loop safeguards.

Operationalize this with continuous red teaming (automated + human), ASR tracking across releases, and clear rollback paths when regressions appear. Where should you start if resources are tight? Prioritize high‑risk user journeys and measure ASR weekly until it stabilizes.
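ASR tracking across releases can start very small. The sketch below assumes each red-team attempt has already been judged as a success or failure (how you judge it is up to your harness), and that a rise of more than two percentage points between consecutive releases counts as a regression. That tolerance and the release labels are placeholders, not values from any benchmark.

```python
def attack_success_rate(outcomes: list[bool]) -> float:
    """Fraction of attack attempts that succeeded (True = jailbreak worked)."""
    if not outcomes:
        raise ValueError("need at least one attack outcome")
    return sum(outcomes) / len(outcomes)


def find_regressions(
    asr_by_release: list[tuple[str, float]],
    tolerance: float = 0.02,  # assumed: >2 percentage points counts as a regression
) -> list[str]:
    """Return releases whose ASR rose by more than `tolerance`
    compared with the release immediately before them."""
    flagged = []
    for (_, prev_asr), (name, asr) in zip(asr_by_release, asr_by_release[1:]):
        if asr - prev_asr > tolerance:
            flagged.append(name)
    return flagged


# Usage with hypothetical release labels and scores.
history = [("v1", 0.12), ("v2", 0.08), ("v3", 0.15)]
regressed = find_regressions(history)  # v3 rose 7 points over v2
```

A flagged release is a candidate for the rollback path, not an automatic trigger; a human should confirm the regression is real before reverting.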

Distributional shift and model drift

Your evaluation suite must evolve with your data. As topics, languages, and user intents shift, unaligned behavior can creep in. A resilient loop looks like this: curate offline tests, monitor live traffic, promote real failures into your offline suite, and set retraining triggers. How do you know when to act? Watch for sustained drops in task accuracy, rising hallucination rates, or divergence spikes in input/output distributions. Canary deployments and shadow rollouts give you a safe proving ground before broad release. Does this sound like SRE for content? That’s the idea.
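One way to put a number on "divergence spikes" is the Jensen-Shannon divergence between a baseline distribution and live traffic, combined with a check that the breach is sustained rather than a one-day blip. The sketch below assumes you can bucket requests into categories such as topic or language; the category labels, threshold, and window length are all illustrative assumptions.

```python
import math


def _normalize(counts: dict[str, int], keys: list[str]) -> list[float]:
    total = sum(counts.values())
    if total == 0:
        raise ValueError("counts must not be empty")
    return [counts.get(k, 0) / total for k in keys]


def js_divergence(baseline: dict[str, int], live: dict[str, int]) -> float:
    """Jensen-Shannon divergence (base 2) between two categorical
    distributions given as counts. 0 = identical, 1 = disjoint."""
    keys = sorted(set(baseline) | set(live))
    p = _normalize(baseline, keys)
    q = _normalize(live, keys)
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(a: list[float], b: list[float]) -> float:
        # Terms with a zero numerator contribute nothing to the sum.
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def sustained_breach(daily_values: list[float], threshold: float, days: int = 7) -> bool:
    """True only if each of the most recent `days` values exceeds the threshold."""
    if len(daily_values) < days:
        return False
    return all(v > threshold for v in daily_values[-days:])


# Usage with hypothetical topic counts.
baseline_topics = {"billing": 60, "returns": 30, "shipping": 10}
live_topics = {"billing": 20, "returns": 20, "warranty": 60}
drift = js_divergence(baseline_topics, live_topics)
```

Requiring a sustained breach trades detection speed for fewer false alarms, which fits the canary-first approach: a short spike prompts a closer look, while a week-long shift prompts retraining or a suite refresh.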

Editorial QA and provenance (C2PA)

Even well‑aligned models hallucinate. Counter with editorial rules and cryptographic provenance:

- Editorial policy: require sources for claims, mandate reviewer sign‑off for high‑risk content, and track correction SLAs.

- Content provenance: adopt C2PA Content Credentials so assets carry verifiable “who‑did‑what‑when” history. The specification focuses on authenticity rather than quality, but it enables audits and trust transfer across teams; see the C2PA technical specification and explainer (2024–2025).

Governance scaffolding you can adopt now

Turn alignment from a project into a program by anchoring it to recognized frameworks:

- NIST: The Generative AI Profile outlines actions across Govern/Map/Measure/Manage, including incident response, red teaming, and provenance requirements. Reference NIST AI 600‑1 (2024, official DOI) for language you can adapt into policies and checklists.

- Security SDLC for LLMs: Use OWASP LLM Top 10 (above) to harden applications and manage jailbreak and data‑exfiltration risks.

- Regulation: The EU AI Act entered into force in 2024 with staged obligations (transparency, oversight, documentation) that phase in through 2026, with some high‑risk requirements extending into 2027. Track implementation via the European Commission’s update, “AI Act enters into force” (2024, EC), and map your roadmap to the timelines.

Metrics that matter: a compact scorecard

Quantify alignment so you can improve it. Review the following at least quarterly, and more often in regulated contexts or for metrics with their own alert triggers. Use this as your living dashboard.

| Dimension | Example metric | Target band (starting point) | Notes |
|---|---|---|---|
| Safety robustness | Attack Success Rate (ASR) on JailbreakBench/HarmBench | ↓ quarter‑over‑quarter; <10% on priority suites | Use strong baselines and keep suites fresh |
| Refusals | Appropriate refusal rate | Maximize “right refusals,” minimize false refusals | Balance safety with usability |
| Factuality | Hallucinations per 100 outputs (severity‑weighted) | Downward trend; trigger when >10–15 per 100 | Sample with human spot checks |
| Quality | Helpfulness/relevance (LLM‑judge + human sample) | Stable ≥4/5 | Calibrate judges per domain |
| Drift | Input/output divergence vs. baseline | Trigger at >2σ for 7 days | Canary/shadow before rollback |
| Operations | MTTD/MTTR for incidents; rollback count | Decrease over time | Tie to on‑call runbooks |
| Provenance | % content with valid C2PA credentials | >95% for public assets | Verify signatures in CI/CD |
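If you want the scorecard to raise alerts rather than sit in a slide, the numeric bands can be encoded directly. The sketch below covers only the rows with explicit thresholds and uses the lower bound of the factuality trigger; the `Snapshot` fields and alert names are hypothetical, and the thresholds are the table's starting points rather than recommended limits.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """One reporting period's metrics (field names are illustrative)."""
    asr: float                     # attack success rate, 0.0 to 1.0
    hallucinations_per_100: float  # severity-weighted count per 100 outputs
    quality_score: float           # helpfulness/relevance on a 1 to 5 scale
    provenance_coverage: float     # share of public assets with valid credentials


def scorecard_alerts(s: Snapshot) -> list[str]:
    """Return the scorecard dimensions that fall outside their starting bands."""
    alerts = []
    if s.asr >= 0.10:                 # target: <10% on priority suites
        alerts.append("safety_robustness")
    if s.hallucinations_per_100 > 10:  # assumed: lower bound of the 10 to 15 trigger
        alerts.append("factuality")
    if s.quality_score < 4.0:          # target: stable at 4/5 or above
        alerts.append("quality")
    if s.provenance_coverage <= 0.95:  # target: >95% for public assets
        alerts.append("provenance")
    return alerts


# Usage with made-up numbers.
healthy = scorecard_alerts(Snapshot(0.05, 4.0, 4.3, 0.98))
at_risk = scorecard_alerts(Snapshot(0.12, 12.0, 3.8, 0.90))
```

Trend-based targets, such as ASR falling quarter over quarter or MTTR decreasing over time, need history and are left out here on purpose.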

A 90‑day rollout plan for human‑AI collaboration

Use this timeline to stand up a durable program without stalling delivery.

- Days 1–30: Define policies and baselines. Adopt NIST AI 600‑1 language for incident response and red teaming. Stand up offline test suites (JailbreakBench/HarmBench plus domain prompts). Implement basic provenance (C2PA) in your content pipeline.

- Days 31–60: Tune and test. Run DPO/RLHF pilots on a small slice of your data; measure ASR, refusal quality, and factuality. Start a limited interpretability pilot (suppress/boost a risky feature), and ship guarded canaries.

- Days 61–90: Operationalize. Add online monitors and drift alerts, formalize rollbacks, and schedule quarterly reviews against the scorecard. Expand human‑in‑the‑loop coverage for high‑risk outputs.

Build a living alignment program

Models evolve, audiences shift, and policies change. Treat alignment as continuous operations: measure, test, retrain, and review. If you combine post‑training alignment, interpretability‑informed controls, rigorous red teaming, provenance, and governance frameworks, you’ll ship content that is on‑brand, reliably on‑policy, and factually grounded.